Practice Test #6

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Powered by AI

Answers and explanations verified by three AI models

Each answer is cross-checked by three leading AI models to ensure maximum accuracy. Get detailed explanations for each option and an in-depth analysis of every question.

Practice questions

You sign up for Azure Active Directory (Azure AD) Premium P2. You need to add a user named [email protected] as an administrator on all the computers that will be joined to the Azure AD domain. What should you configure in Azure AD?

You need to implement a backup solution for App1 after the application is moved. What should you create first?

You need to move the blueprint files to Azure. What should you do?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure virtual machine named VM1. VM1 was deployed by using a custom Azure Resource Manager template named ARM1.json. You receive a notification that VM1 will be affected by maintenance. You need to move VM1 to a different host immediately. Solution: From the Redeploy blade, you click Redeploy. Does this meet the goal?

You plan to use the Azure Import/Export service to copy files to a storage account. Which two files should you create before you prepare the drives for the import job? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.
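As background for this question, the following is a minimal sketch of what the CSV inputs to the drive-preparation step might look like. It assumes version 1 of the WAImportExport tool, which to my understanding reads a dataset CSV (what to copy and where it lands) and a driveset CSV (which drives to prepare). The column names are recalled from the Microsoft documentation and should be checked against the current docs. The paths, container name, and drive letter are hypothetical.

```python
import csv
import io

# Column headers assumed for the WAImportExport v1 CSV inputs
# (recalled from the Azure Import/Export documentation; verify before use).
DATASET_HEADER = ["BasePath", "DstBlobPathOrPrefix", "BlobType",
                  "Disposition", "MetadataFile", "PropertiesFile"]
DRIVESET_HEADER = ["DriveLetter", "FormatOption", "SilentOrPromptOnFormat",
                   "Encryption", "ExistingBitLockerKey"]


def render_csv(header, rows):
    """Return CSV text with the given header row followed by the data rows."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical dataset row: copy everything under F:\data\ into container1/.
dataset_csv = render_csv(DATASET_HEADER, [
    ["F:\\data\\", "container1/", "BlockBlob", "rename", "None", "None"],
])

# Hypothetical driveset row: format and BitLocker-encrypt drive G.
driveset_csv = render_csv(DRIVESET_HEADER, [
    ["G", "Format", "SilentMode", "Encrypt", ""],
])

print(dataset_csv)
print(driveset_csv)
```

In practice the two strings would be saved as files and passed to the tool when preparing the drives. The sketch only illustrates the file layout and does not call any Azure service.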


HOTSPOT - You have an Azure Storage account named storage1 that uses Azure Blob storage and Azure File storage. You need to use AzCopy to copy data to the blob storage and file storage in storage1. Which authentication method should you use for each type of storage? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Blob storage: ______

File storage: ______

HOTSPOT - You have an Azure subscription that contains a virtual machine scale set. The scale set contains four instances that have the following configurations:

- Operating system: Windows Server 2016
- Size: Standard_D1_v2

You run the Get-AzVmss cmdlet as shown in the following exhibit:

PS Azure:/> (Get-AzVmss -Name WebProd -ResourceGroupName RG1).VirtualMachineProfile.OsProfile.WindowsConfiguration

ProvisionVMAgent : True

EnableAutomaticUpdates : False

TimeZone :

AdditionalUnattendContent :

WinRM :

Azure:/

PS Azure:/> Get-AzVmss -Name WebProd -ResourceGroupName RG1 | Select -ExpandProperty UpgradePolicy

Mode RollingUpgradePolicy AutomaticOSUpgradePolicy

---- -------------------- ------------------------

Automatic Microsoft.Azure.Management.Compute.Models.AutomaticOSUpgradePolicy

Azure:/

PS Azure:/> []

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:

When an administrator changes the virtual machine size, the size will be changed on up to ______ virtual machines simultaneously.

When a new build of the Windows Server 2016 image is released, the new build will be deployed to up to ______ virtual machines simultaneously.

HOTSPOT - You have an Azure Kubernetes Service (AKS) cluster named AKS1 and a computer named Computer1 that runs Windows 10. Computer1 has the Azure CLI installed. You need to install the kubectl client on Computer1. Which command should you run? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

______ Install-cli.

Install-cli ______

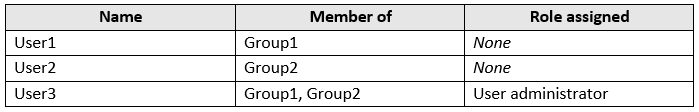

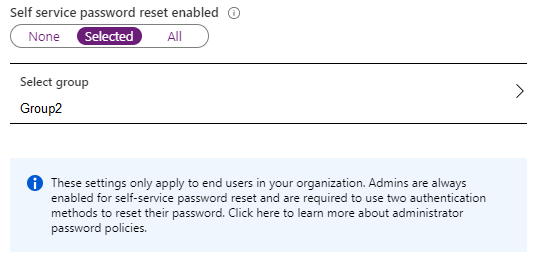

HOTSPOT - You have an Azure Active Directory (Azure AD) tenant named contoso.onmicrosoft.com that contains the users shown in the following table.

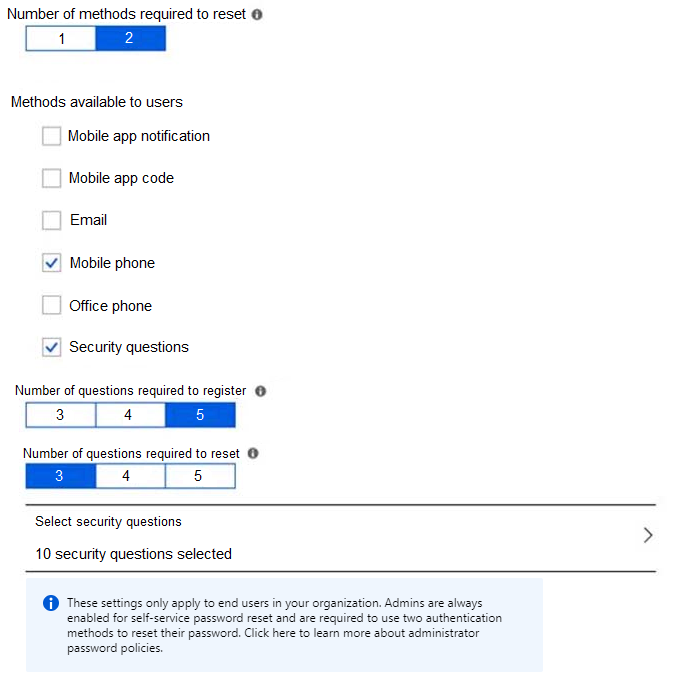

You enable password reset for contoso.onmicrosoft.com as shown in the Password Reset exhibit. (Click the Password Reset tab.)

You configure the authentication methods for password reset as shown in the Authentication Methods exhibit. (Click the Authentication Methods tab.)

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

After User2 answers three security questions correctly, he can reset his password immediately.

If User1 forgets her password, she can reset the password by using the mobile phone app.

User3 can add security questions to the password reset process.

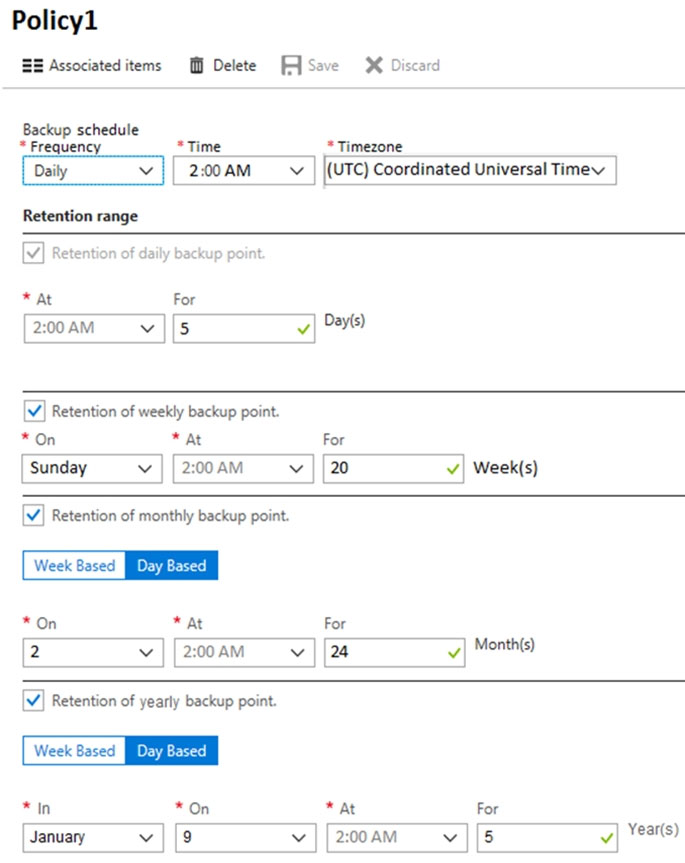

HOTSPOT - You have an Azure virtual machine named VM1 and a Recovery Services vault named Vault1. You create a backup policy named Policy1 as shown in the exhibit. (Click the Exhibit tab.)

You configure the backup of VM1 to use Policy1 on Thursday, January 1 at 1:00 AM. You need to identify the number of available recovery points for VM1. How many recovery points are available on January 8 and January 15? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

January 8 at 2:00 PM (14:00): ______

January 15 at 2:00 PM (14:00): ______
