Practice Test #2

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Powered by AI

Answers and Explanations Verified by 3 AIs

Each answer is verified by 3 leading AI models to ensure maximum accuracy. Get a detailed explanation for each answer option and an in-depth analysis of each question.

Practice Questions

HOTSPOT - You have an Azure subscription named Sub1. You create a virtual network that contains one subnet. On the subnet, you provision the virtual machines shown in the following table.

| Name | Network interface | Application security group assignment | IP address |
|---|---|---|---|
| VM1 | NIC1 | AppGroup12 | 10.0.0.10 |
| VM2 | NIC2 | AppGroup12 | 10.0.0.11 |
| VM3 | NIC3 | AppGroup3 | 10.0.0.100 |
| VM4 | NIC4 | AppGroup4 | 10.0.0.200 |

Currently, you have not provisioned any network security groups (NSGs). You need to implement network security to meet the following requirements:

- Allow traffic to VM4 from VM3 only.
- Allow traffic from the Internet to VM1 and VM2 only.
- Minimize the number of NSGs and network security rules.

How many NSGs and network security rules should you create? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

NSGs: ______

Network security rules: ______
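When reasoning about the scenario above, it can help to model how an NSG evaluates inbound rules: rules are checked in priority order (lowest number first) and the first match decides. The following is a minimal Python sketch under stated assumptions, not an Azure API. The names `Rule`, `ASG_OF`, `matches`, and `inbound_allowed` are hypothetical, and only two of the default inbound rules (AllowVnetInBound and DenyAllInBound) are modeled. It deliberately starts with an empty custom rule set, so it only shows what the default rules do on their own.

```python
from dataclasses import dataclass

# VM -> application security group, copied from the table in the question.
ASG_OF = {"VM1": "AppGroup12", "VM2": "AppGroup12",
          "VM3": "AppGroup3", "VM4": "AppGroup4"}

@dataclass(frozen=True)
class Rule:
    priority: int      # lower number is evaluated first
    source: str        # "Internet", "VirtualNetwork", "*", or an ASG name
    destination: str   # "VirtualNetwork", "*", or an ASG name
    allow: bool

# Simplified stand-ins for two of the default inbound rules (assumption:
# the load balancer default rule is irrelevant here and is left out).
DEFAULT_INBOUND = [
    Rule(65000, "VirtualNetwork", "VirtualNetwork", True),  # AllowVnetInBound
    Rule(65500, "*", "*", False),                           # DenyAllInBound
]

def matches(selector: str, endpoint: str) -> bool:
    """Return True if an endpoint ('Internet' or a VM name) fits a selector."""
    if selector == "*":
        return True
    if selector == "Internet":
        return endpoint == "Internet"
    if selector == "VirtualNetwork":
        return endpoint in ASG_OF          # every VM sits in the one subnet
    return ASG_OF.get(endpoint) == selector  # otherwise treat it as an ASG name

def inbound_allowed(custom_rules: list, source: str, destination: str) -> bool:
    """First matching rule in priority order decides the outcome."""
    for rule in sorted(custom_rules + DEFAULT_INBOUND, key=lambda r: r.priority):
        if matches(rule.source, source) and matches(rule.destination, destination):
            return rule.allow
    return False

candidate_rules = []  # put the custom rules you want to try here

print(inbound_allowed(candidate_rules, "VM1", "VM4"))       # True
print(inbound_allowed(candidate_rules, "Internet", "VM1"))  # False
```

With no custom rules, the default rules already allow VM1 to reach VM4 and block the Internet from reaching VM1, so neither requirement is met by defaults alone. Adding entries to `candidate_rules` and re-running the checks is one way to test a proposed answer against each requirement.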

Your company has an Azure subscription named Sub1 that is associated to an Azure Active Directory (Azure AD) tenant named contoso.com. The company develops an application named App1. App1 is registered in Azure AD. You need to ensure that App1 can access secrets in Azure Key Vault on behalf of the application users. What should you configure?

You have an Azure subscription that contains a virtual machine named VM1. You create an Azure key vault that has the following configurations:

- Name: Vault5
- Region: West US
- Resource group: RG1

You need to use Vault5 to enable Azure Disk Encryption on VM1. The solution must support backing up VM1 by using Azure Backup. Which key vault settings should you configure?

HOTSPOT - You have an Azure subscription. The subscription contains Azure virtual machines that run Windows Server 2016. You need to implement a policy to ensure that each virtual machine has a custom antimalware virtual machine extension installed. How should you complete the policy? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

"effect": "______"

"parameters": { "______": {

HOTSPOT - You are evaluating the effect of the application security groups on the network communication between the virtual machines in Sub2. For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

From VM1, you can successfully ping the private IP address of VM4.

From VM2, you can successfully ping the private IP address of VM4.

From VM1, you can connect to the web server on VM4.


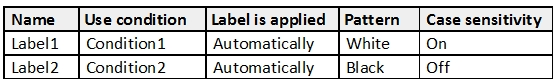

HOTSPOT - You have the Azure Information Protection labels as shown in the following table.

You have the Azure Information Protection policies as shown in the following table.

| Name | Applies to | Use label | Set the default label |
|---|---|---|---|
| Global | Not applicable | None | None |
| Policy1 | User1 | Label1 | None |
| Policy2 | User1 | Label2 | None |

You need to identify how Azure Information Protection will label files. What should you identify? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

If User1 creates a Microsoft Word file that includes the text “Black and White”, the file will be assigned: ______

If User1 creates a Microsoft Notepad file that includes the text “Black or white”, the file will be assigned: ______

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a hybrid configuration of Azure Active Directory (Azure AD). You have an Azure HDInsight cluster on a virtual network. You plan to allow users to authenticate to the cluster by using their on-premises Active Directory credentials. You need to configure the environment to support the planned authentication.

Solution: You deploy an Azure AD Application Proxy.

Does this meet the goal?

You onboard Azure Sentinel. You connect Azure Sentinel to Azure Security Center. You need to automate the mitigation of incidents in Azure Sentinel. The solution must minimize administrative effort. What should you create?

HOTSPOT - You are evaluating the security of the network communication between the virtual machines in Sub2. For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

From VM1, you can successfully ping the public IP address of VM2.

From VM1, you can successfully ping the private IP address of VM3.

From VM1, you can successfully ping the private IP address of VM5.

You have 10 virtual machines on a single subnet that has a single network security group (NSG). You need to log the network traffic to an Azure Storage account. What should you do?
