GCP

Google Professional Cloud Developer

353+ practice questions with AI-verified answers

AI-powered

Triple AI-verified answers & explanations

Every Google Professional Cloud Developer answer is cross-verified by 3 leading AI models to ensure maximum accuracy. Get detailed explanations for every option and in-depth question analysis.

Exam Domains

Practice Questions

Company overview: SkyLend is a peer‑to‑peer micro‑lending platform expanding from one country to global regions (us-central1, europe-west1, asia-southeast1) and targeting a 10x increase in concurrent users (from 5,000 to 50,000) while reducing operational overhead; technical requirement: the public /loan and /payment APIs must meet 99.9% monthly availability and 95th percentile latency under 250 ms per region, teams must define SLIs and SLOs, track error budgets, visualize SLO health, and receive burn‑rate alerts (2x over 1 hour and 5x over 5 minutes) without building custom tooling; compliance and exec reporting require auditable SLO dashboards across services and regions. Question: Which Google Cloud product best meets SkyLend’s need to define, monitor, and alert on service level indicators and objectives (including error budgets and burn‑rate alerts) across regions?
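
The availability target in this scenario translates into a concrete error budget. A minimal sketch, assuming a 30-day month and reading "2x over 1 hour" and "5x over 5 minutes" as burn rates measured over those lookback windows; the function names are illustrative and are not part of any Cloud Monitoring API.

```python
# Error-budget arithmetic for the SkyLend availability SLO.
# Assumption: a 30-day month (the scenario only says "monthly").

SLO_TARGET = 0.999      # 99.9% availability
WINDOW_DAYS = 30        # assumed SLO compliance period


def error_budget_minutes(slo: float, window_days: int) -> float:
    """Minutes of total unavailability the SLO tolerates over the window."""
    return (1.0 - slo) * window_days * 24 * 60


def error_ratio_threshold(burn_rate: float, slo: float) -> float:
    """Error ratio at which the budget burns `burn_rate` times faster than sustainable."""
    return burn_rate * (1.0 - slo)


def budget_spent(burn_rate: float, lookback_hours: float, window_days: int) -> float:
    """Fraction of the whole-window budget consumed by burning at `burn_rate`
    for `lookback_hours`."""
    return burn_rate * lookback_hours / (window_days * 24)


if __name__ == "__main__":
    print(f"Error budget: {error_budget_minutes(SLO_TARGET, WINDOW_DAYS):.1f} min per {WINDOW_DAYS} days")
    for rate, hours in [(2.0, 1.0), (5.0, 5 / 60)]:
        print(
            f"{rate:g}x over {hours * 60:.0f} min: alert when error ratio > "
            f"{error_ratio_threshold(rate, SLO_TARGET):.2%}; "
            f"budget spent in that window = {budget_spent(rate, hours, WINDOW_DAYS):.3%}"
        )
```

Run as a script, it reports a budget of about 43.2 minutes per 30 days and shows that the 2x alert corresponds to an error ratio above 0.2% and the 5x alert to one above 0.5%. The 95th-percentile latency objective would be a separate SLO with its own budget.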

Your IoT analytics company runs a multi-tenant data pipeline on a Google Kubernetes Engine (GKE) Autopilot cluster in us-central1 for 120 production customers, and you promote releases with Cloud Deploy; during a 2-week refactor of a telemetry aggregator service (container image <250 MB), developers will edit code every 5–10 minutes on laptops with 8 GB RAM and limited CPU and must validate changes locally before pushing to the remote repository. Requirements: • Automatically rebuild and redeploy on local code changes (hot-reload loop ≤ 10 seconds). • Local Kubernetes deployment should closely emulate the production GKE manifests and deployment flow. • Use minimal local resources and avoid requiring a remote container registry for inner-loop builds. Which tools should you choose to build and run the container locally on a developer laptop while meeting these constraints?

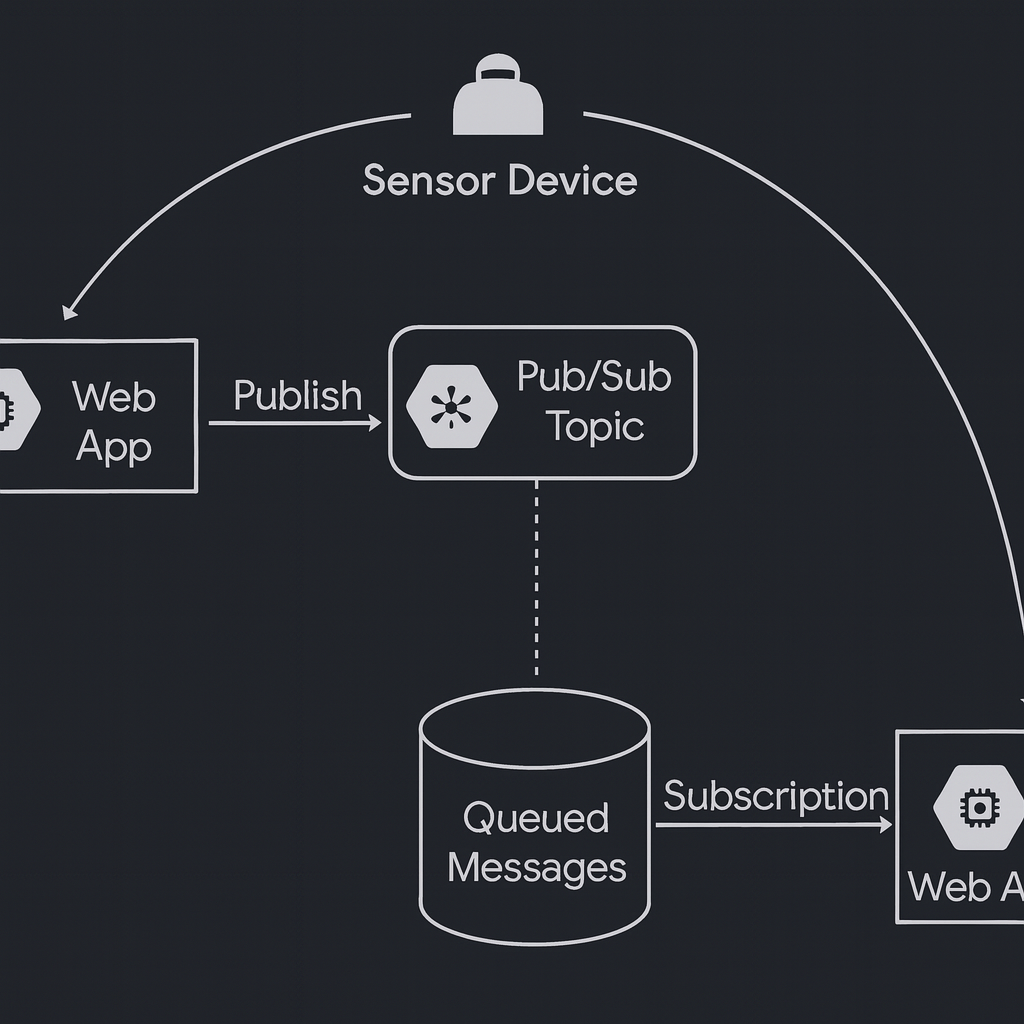

Your IoT solution in Google Cloud collects data from thousands of sensor devices. Each device must publish messages to its own Pub/Sub topic and consume messages from its own subscription. You need to ensure that each device can publish and subscribe only to its own Pub/Sub resources and cannot access those of other devices. What should you do?
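
Per-device isolation can be expressed as resource-level IAM: each topic and each subscription carries its own policy naming only the owning device's identity, whereas a project-level grant would cover every topic in the project. The sketch below only builds policy documents in the bindings/role/members shape that IAM policies use; it assumes one service account per device, a hypothetical project ID (`PROJECT`) and a hypothetical `device-<id>` naming scheme, and applying the policies (for example with setIamPolicy) is not shown.

```python
import json

# Hypothetical project ID and naming convention; adjust to your environment.
PROJECT = "iot-prod"


def device_member(device_id: str) -> str:
    """IAM member string for the device's own (hypothetical) service account."""
    return f"serviceAccount:device-{device_id}@{PROJECT}.iam.gserviceaccount.com"


def topic_policy(device_id: str) -> dict:
    """Policy for the device's topic (e.g. topics/device-<id>): publish only."""
    return {"bindings": [{"role": "roles/pubsub.publisher",
                          "members": [device_member(device_id)]}]}


def subscription_policy(device_id: str) -> dict:
    """Policy for the device's subscription (e.g. subscriptions/device-<id>-sub): consume only."""
    return {"bindings": [{"role": "roles/pubsub.subscriber",
                          "members": [device_member(device_id)]}]}


if __name__ == "__main__":
    for device in ["0001", "0002"]:
        print(f"topics/device-{device}:", json.dumps(topic_policy(device)))
        print(f"subscriptions/device-{device}-sub:", json.dumps(subscription_policy(device)))
```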

You are designing a tablet app for municipal tree inspectors that must store hierarchical observations (city -> district -> park -> tree -> inspection) with up to 5 nested levels and support offline work for up to 72 hours; upon reconnect, the app must automatically sync local changes and handle conflicts gracefully. A backend on Cloud Run will use a dedicated service account to enrich the same records (e.g., geocoding, policy tags) directly in the database, performing up to 5,000 writes per minute at peak. The solution must scale securely to 250,000 monthly active users in the first quarter and provide client SDKs with built-in offline caching and synchronization. Which database and IAM role should you assign to the backend service account?

Your mobile health platform currently stores per-user workout telemetry and personalized settings in a single PostgreSQL instance; records vary widely by user and evolve frequently as new device firmware adds fields (e.g., heart-rate variability, sleep stages), resulting in weekly schema migrations, downtime risks, and high operational overhead; you expect up to 8 million users, a peak of 45,000 writes per second concentrated by userId, and simple key-based reads per user, and only per-user transactional consistency is required, not multi-user joins or complex cross-entity transactions. To simplify development and scaling while accommodating highly user-specific, evolving state without rigid schemas, which Google Cloud storage option should you choose?

Want to practice all the questions on the go?

Download Cloud Pass for practice tests, progress tracking, and more.

During a disaster recovery drill, your workload fails over to a standby Google Cloud project in europe-west1. Within 20 seconds, a newly created and previously idle Cloud Storage bucket begins handling approximately 2,100 object write requests per second and 7,800 object read requests per second. As the surge continues, services calling the Cloud Storage JSON API start receiving intermittent HTTP 429 and occasional 5xx errors. You need to reduce failed responses while preserving as much throughput as possible. What should you do?
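
Cloud Storage documentation describes a new bucket as starting with an initial capacity of roughly 1,000 object writes and 5,000 object reads per second, which then scales up gradually; a sudden jump well beyond that can produce 429 and 5xx responses, and Google's retry guidance for those codes is truncated exponential backoff with jitter. A minimal sketch of that retry pattern, assuming a hypothetical `HttpError` type and a `request` callable standing in for a real JSON API call (the official client libraries ship their own configurable retry behavior):

```python
import random
import time


class HttpError(Exception):
    """Hypothetical error type carrying an HTTP status code."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def is_retryable(status: int) -> bool:
    # 408 (timeout), 429 (rate limited) and 5xx (server errors) are the
    # response classes Cloud Storage's retry guidance treats as retryable.
    return status in (408, 429) or 500 <= status <= 599


def call_with_backoff(request, max_attempts=6, base_s=1.0, max_backoff_s=32.0,
                      sleep=time.sleep):
    """Call `request()`, retrying retryable failures with truncated
    exponential backoff (1 s, 2 s, 4 s, ... capped) plus up to 1 s of jitter."""
    for attempt in range(max_attempts):
        try:
            return request()
        except HttpError as err:
            if not is_retryable(err.status) or attempt == max_attempts - 1:
                raise  # non-retryable, or out of attempts
            delay = min(base_s * 2 ** attempt, max_backoff_s) + random.uniform(0, 1)
            sleep(delay)


if __name__ == "__main__":
    pending = [429, 503]  # simulate two failed attempts, then success

    def flaky_upload():
        if pending:
            raise HttpError(pending.pop(0))
        return "uploaded"

    print(call_with_backoff(flaky_upload, sleep=lambda s: print(f"retrying in {s:.2f}s")))
```

Retrying a write is only safe when the operation is idempotent, for example an upload guarded by a generation-match precondition.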

You are deploying a Python microservice to a Linux-based GKE Autopilot cluster in us-central1 that must connect to a Cloud SQL for PostgreSQL instance (instance connection name: myproj:us-central1:orders-db) via a Cloud SQL Auth Proxy sidecar, and you have already created a dedicated service account (my-app-sa) and want to follow Google-recommended practices and the principle of least privilege; what should you do next?

GlobeGigs, a global freelance marketplace, runs a MySQL primary in us-central1 with read replicas in europe-west1 and asia-southeast1 and routes reads to the nearest replica; users in Singapore and Frankfurt still see p99 write latencies over 800 ms because writes go to the primary, and the team targets sub-200 ms p95 for both reads and writes with minimal operational overhead and without introducing eventual consistency for critical transactions. What should they do to further reduce latency for all database interactions with the least amount of effort?

Your company operates a ride-hailing dispatch platform that maintains a persistent cache of all edge worker VMs used for geo-routing; only instances that are in the RUNNING state and have the label fleet=edge-worker should be eligible for job assignment (exclude STOPPED, SUSPENDED, or DELETED instances). The dispatcher reads this cache every 10 seconds and must reflect lifecycle changes (start/stop/suspend/preempt/delete) within 5 seconds across a fleet of 500+ instances to avoid misrouting. You need to design how the application keeps this available-VM cache from becoming noncurrent while minimizing operational overhead. What should you do?
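
Whatever mechanism delivers the lifecycle notifications (which is what the question asks you to choose), the cache update itself reduces to re-evaluating the eligibility rule for the affected instance on every event. A minimal sketch, assuming events arrive as dictionaries with a hypothetical shape; a deletion is represented here by a hypothetical "DELETED" status, since any non-RUNNING status removes the instance.

```python
from dataclasses import dataclass, field

# Eligibility rule from the scenario: RUNNING and labelled fleet=edge-worker.
REQUIRED_LABEL = ("fleet", "edge-worker")


def is_eligible(status: str, labels: dict) -> bool:
    key, value = REQUIRED_LABEL
    return status == "RUNNING" and labels.get(key) == value


@dataclass
class AvailableVmCache:
    """Set of instance names eligible for job assignment."""
    instances: set = field(default_factory=set)

    def apply(self, event: dict) -> None:
        """Apply one lifecycle event. The shape
        {"instance": str, "status": str, "labels": dict} is hypothetical,
        not a Compute Engine or Pub/Sub payload format. Re-applying the same
        event leaves the cache unchanged (idempotent)."""
        if is_eligible(event["status"], event.get("labels", {})):
            self.instances.add(event["instance"])
        else:
            self.instances.discard(event["instance"])


if __name__ == "__main__":
    cache = AvailableVmCache()
    edge = {"fleet": "edge-worker"}
    for ev in [
        {"instance": "vm-a", "status": "RUNNING", "labels": edge},
        {"instance": "vm-b", "status": "RUNNING", "labels": {"fleet": "batch"}},
        {"instance": "vm-c", "status": "RUNNING", "labels": edge},
        {"instance": "vm-a", "status": "SUSPENDED", "labels": edge},
    ]:
        cache.apply(ev)
    print(sorted(cache.instances))  # ['vm-c']
```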

You are designing a real-time processing pipeline for a city's bike-sharing program: each bike sends a GPS/status event to a Pub/Sub topic at an average rate of 5,000 messages per minute with spikes up to 50,000; each message must be processed within 300 ms to update a live availability dashboard, the processing component must have no dependency on any other system or managed infrastructure (no VMs or clusters), and you must incur costs only when new messages arrive; how should you configure the architecture?
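
A quick sizing check for this scenario uses Little's law (average work in flight = arrival rate x time in system). A minimal sketch, assuming the worst case in which every message takes the full 300 ms budget; real processing times would be shorter, and so would concurrency.

```python
# Sizing check with Little's law: in-flight messages L = arrival rate (per s) x time in system W.
# Assumption: every message takes the full 300 ms budget (a worst case).

LATENCY_BUDGET_S = 0.300


def per_second(per_minute: float) -> float:
    return per_minute / 60


def in_flight(rate_per_s: float, seconds_each: float) -> float:
    """Average number of messages being processed concurrently (Little's law)."""
    return rate_per_s * seconds_each


if __name__ == "__main__":
    for label, per_min in [("average", 5_000), ("spike", 50_000)]:
        rate = per_second(per_min)
        print(f"{label}: {rate:.0f} msg/s, ~{in_flight(rate, LATENCY_BUDGET_S):.0f} in flight")
```

At the stated spike rate this is roughly 833 messages per second and about 250 messages in flight at once, which is the concurrency the serverless processing component would need to absorb.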

Your team uses Cloud Build to run CI for a Go microservice stored in a private GitHub repository mirrored to Cloud Source Repositories; one of the build steps requires a specific static analysis tool (version 3.7.2) that is not present in the default Cloud Build environment, the tool is ~120 MB and must be available within 5 seconds at step start to keep total build time under a 10-minute SLA, outbound internet access during builds is restricted, and you need reproducible results across ~50 builds per day—what should you do?

Your fintech company runs payment microservices on GKE in project retail-prod-001 and must satisfy PCI DSS 10.3; compliance mandates mirroring a copy of all application logs matching resource.type="k8s_container" AND labels.app="payments" AND severity>=ERROR from retail-prod-001 to a separate project audit-logs-123 that is restricted to the audit team, without altering existing retention or disrupting current log flows, and without building custom batch jobs; delivery must be near real time (<60 seconds) and the audit project must control retention at 365 days. What should you do?

Your retail analytics team has an IoT gateway that uploads a 5 MB CSV summary every 10 minutes to a Cloud Storage bucket gs://retail-iot-summaries-prod. Upon each successful upload, you must notify a downstream pipeline via the Pub/Sub topic projects/acme/topics/iot-summaries so a Dataflow job can start. You want a solution that requires the least development and operational effort, introduces no additional compute to manage, and can be set up within 1 hour; what should you do?

You are building a real-time leaderboard microservice for a gaming platform and plan to run it on Cloud Run in us-central1. Your source code is stored in a Cloud Source Repository named game-leaderboard, and new releases must deploy automatically whenever changes are pushed to the release/v2 branch. You have already created a cloudbuild.yaml that builds and pushes gcr.io/PROJECT_ID/leaderboard:$SHORT_SHA and then runs: gcloud run deploy leaderboard-api --image gcr.io/PROJECT_ID/leaderboard:$SHORT_SHA --region us-central1 --platform managed --allow-unauthenticated. You want the most efficient approach to ensure each commit to release/v2 triggers a deployment with no manual commands. What should you do next?

You are rolling out an internal reporting service on a fleet of e2-standard-4 Compute Engine VMs in us-central1-a using Terraform; one VM restored from a snapshot has been stuck in 'Starting' for 12 minutes and the serial console shows repeated boot attempts—what two investigations should you prioritize to resolve the launch failure? (Choose two.)

Your startup has a containerized Node.js 20 API for shipping rate calculations that must be accessible only to customers located within the European Union; traffic averages about 3,500 requests per minute from 09:00–21:00 CET and drops to near zero overnight; you are migrating this workload to Google Cloud and need uncaught exceptions and stack traces to appear in Error Reporting after the move; you want the lowest operational effort and to avoid paying for idle compute when there is no traffic. What should you do?

Your manufacturing analytics batch runs every 10 minutes from a containerized job on GKE that invokes the bq CLI in batch mode to execute a SQL file against the BigQuery dataset iot_agg in project alpha-logs, piping the CLI output to a downstream formatter process; the workload uses the node-attached service account gke-batch@factory-ops.iam.gserviceaccount.com, and the bq CLI intermittently fails with a permission error indicating the job cannot be created when the query runs. You need to resolve the permission issue without changing the query logic or the piping behavior of the CLI output. What should you do?

You are building an external review portal for a film festival that stores high‑bitrate video dailies in Cloud Storage, and you must let reviewers—some of whom do not have Google accounts—securely access only their assigned files with the ability to read, upload replacements, or delete them for a strict 24-hour window; how should you provide access to the objects?

Your startup manages 3,000 smart vending machines that publish 4 KB JSON telemetry to a Pub/Sub topic at an average rate of 600 messages per second (peaks up to 1,200). You must parse each message and persist it for analytics with end-to-end latency under 45 seconds, and each message must be processed exactly once to avoid double-counting transactions. You want the cheapest and simplest fully managed approach with minimal operations overhead and cannot maintain clusters or build custom deduplication workflows. What should you do?

Your CI pipeline runs 200 unit tests per commit for a fleet-management analytics service that consumes messages from a Cloud Pub/Sub topic named 'telemetry' using ordering keys set to vehicle_id; for each test you need to publish a sequence of 50 messages for a single vehicle_id and assert that your subscriber processes them strictly in order within the key, while keeping the tests reliable, fast, and at zero additional GCP cost; what should you do?
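
The ordering assertion itself can be kept independent of the transport. A minimal sketch, assuming a hypothetical in-memory fake (`FakeOrderedTopic`) that keeps FIFO order per ordering key; it exercises only the subscriber-side assertion and says nothing about how the real service or the Pub/Sub emulator delivers messages.

```python
from collections import defaultdict, deque


class FakeOrderedTopic:
    """Hypothetical in-memory stand-in that keeps FIFO order per ordering key.
    It models only the guarantee under test; it is not the Pub/Sub emulator."""

    def __init__(self):
        self._queues = defaultdict(deque)

    def publish(self, data: bytes, ordering_key: str) -> None:
        self._queues[ordering_key].append(data)

    def pull_all(self, ordering_key: str) -> list:
        queue = self._queues[ordering_key]
        messages = list(queue)
        queue.clear()
        return messages


def is_strictly_ordered(sequence_numbers: list) -> bool:
    """True if sequence numbers increase by exactly 1 with no gaps or repeats."""
    return all(b == a + 1 for a, b in zip(sequence_numbers, sequence_numbers[1:]))


def run_ordering_test(vehicle_id: str = "vehicle-17", count: int = 50) -> bool:
    topic = FakeOrderedTopic()
    for seq in range(count):
        topic.publish(str(seq).encode(), ordering_key=vehicle_id)
    # Stand-in for the subscriber under test: decode sequence numbers in delivery order.
    received = [int(m.decode()) for m in topic.pull_all(vehicle_id)]
    return len(received) == count and is_strictly_ordered(received)


if __name__ == "__main__":
    print(run_ordering_test())
```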

Success Stories (6)

Study period: 2 months

The scenarios in this app were extremely useful. The explanations made even the tricky deployment questions easy to understand. Definitely worth using.

Study period: 2 months

The questions weren’t just easy recalls — they taught me how to approach real developer scenarios. I passed this week thanks to these practice sets.

Study period: 1 month

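Because I only subscribed for one month, I felt pressure to get through the questions quickly, and I think that made me study harder. Fortunately, the questions were similar, so I was able to solve them easily.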

Study period: 1 month

The questions in this app were very similar to the real exam questions, so I solved them easily! It feels great to pass on my first attempt. Good stuff!

Study period: 1 month

I prepared for three weeks using Cloud Pass and the improvement was huge. The difficulty level was close to the real Cloud Developer exam, and the explanations helped me fill in my knowledge gaps quickly.

Other GCP Certifications

Google Professional Cloud DevOps Engineer

Professional

Google Associate Cloud Engineer

Associate

Google Professional Cloud Network Engineer

Professional

Google Associate Data Practitioner

Associate

Google Cloud Digital Leader

Foundational

Google Professional Cloud Security Engineer

Professional

Google Professional Cloud Architect

Professional

Google Professional Cloud Database Engineer

Professional

Google Professional Data Engineer

Professional

Google Professional Machine Learning Engineer

Professional
