Practice Test #3

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

AI-powered

Answers and explanations verified by three AI models

Each answer is checked by 3 leading AI models to ensure maximum accuracy. Get detailed per-option explanations and in-depth question analysis.

Practice Questions

You plan to use Azure Resource Manager templates to perform multiple deployments of identically configured Azure virtual machines. The password for the administrator account of each deployment is stored as a secret in a different Azure key vault. You need to identify a method to dynamically construct a resource ID that will designate the key vault containing the appropriate secret during each deployment. The name of the key vault and the name of the secret will be provided as inline parameters. What should you use to construct the resource ID?
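For reference, the resource ID of a key vault follows the pattern `/subscriptions/{subscriptionId}/resourceGroups/{resourceGroupName}/providers/Microsoft.KeyVault/vaults/{vaultName}`, which is the format the ARM `resourceId()` template function returns for the `Microsoft.KeyVault/vaults` type. The Python sketch below only assembles that string and the `reference` object that ARM accepts as a parameter value to read a secret from a key vault. The helper names and sample values are hypothetical, and in a real deployment the ID would be built by template expressions rather than by a script.

```python
def key_vault_resource_id(subscription_id: str, resource_group: str, vault_name: str) -> str:
    """Assemble a key vault resource ID in the format that ARM's resourceId()
    returns for the Microsoft.KeyVault/vaults resource type."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.KeyVault/vaults/{vault_name}"
    )


def key_vault_secret_reference(
    subscription_id: str, resource_group: str, vault_name: str, secret_name: str
) -> dict:
    """Build the {"reference": {...}} object used as a parameter value
    to read a secret from a key vault at deployment time."""
    return {
        "reference": {
            "keyVault": {
                "id": key_vault_resource_id(subscription_id, resource_group, vault_name)
            },
            "secretName": secret_name,
        }
    }


if __name__ == "__main__":
    import json

    # Hypothetical values: the vault name and secret name would arrive as inline parameters.
    ref = key_vault_secret_reference(
        "00000000-0000-0000-0000-000000000000", "rg-vaults", "kv-deploy1", "vmAdminPassword"
    )
    print(json.dumps(ref, indent=2))
```

Only the vault name changes between deployments here, so the same function yields a different ID for each key vault.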

HOTSPOT - You have two Azure virtual machines in the East US 2 region as shown in the following table.

Name: VM1, Operating system: Windows Server 2008 R2, Type: A3, Tier: Basic

Name: VM2, Operating system: Ubuntu 16.04-DAILY-LTS, Type: L4s, Tier: Standard

You deploy and configure an Azure key vault. You need to ensure that you can enable Azure Disk Encryption on VM1 and VM2. What should you modify on each virtual machine?

To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Hot Area:

VM1 ______

VM2 ______

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have an Azure subscription. The subscription contains 50 virtual machines that run Windows Server 2012 R2 or Windows Server 2016. You need to deploy Microsoft Antimalware to the virtual machines.

Solution: You add an extension to each virtual machine.

Does this meet the goal?

You are configuring an Azure Kubernetes Service (AKS) cluster that will connect to an Azure Container Registry. You need to use the auto-generated service principal to authenticate to the Azure Container Registry. What should you create?

You have an Azure subscription named Subscription1. You deploy a Linux virtual machine named VM1 to Subscription1. You need to monitor the metrics and the logs of VM1. What should you use?


You have an Azure resource group that contains 100 virtual machines. You have an initiative named Initiative1 that contains multiple policy definitions. Initiative1 is assigned to the resource group. You need to identify which resources do NOT match the policy definitions. What should you do?

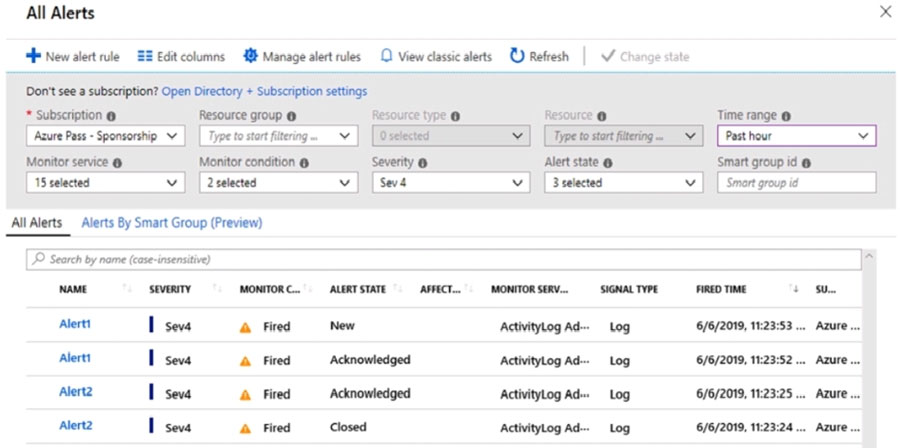

HOTSPOT - You have an Azure subscription that contains the alerts shown in the following exhibit.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

The state of Alert1 that was fired at 11:23:52 ______

The state of Alert2 that was fired at 11:23:24 ______

You have the Azure virtual machines shown in the following table.

For which virtual machines can you enable Update Management?

VM1 is running.

VM2 is running.

VM3 is stopped.

VM4 is running.

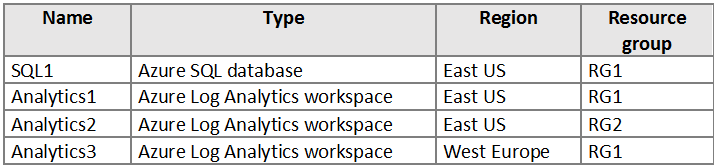

HOTSPOT - You have an Azure subscription that contains the resources shown in the following table.

You create the Azure Storage accounts shown in the following table.

You need to configure auditing for SQL1. Which storage accounts and Log Analytics workspaces can you use as the audit log destination? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Name: Storage1, Region: East US, Resource group: RG1, Storage account type: Blob, Access tier (default): Cool

Name: Storage2, Region: East US, Resource group: RG2, Storage account type: General purpose V1, Access tier (default): Not applicable

Name: Storage3, Region: West Europe, Resource group: RG1, Storage account type: General purpose V2, Access tier (default): Hot

Storage accounts that can be used as the audit log destination: ______

Log Analytics workspaces that can be used as the audit log destination: ______

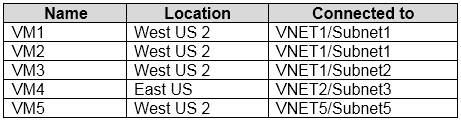

You have the Azure virtual machines shown in the following table.

Each virtual machine has a single network interface. You add the network interface of VM1 to an application security group named ASG1. You need to identify the network interfaces of which virtual machines you can add to ASG1. What should you identify?
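The constraint behind this question is that every network interface assigned to an application security group must be in the same virtual network as the first network interface assigned to that group. The Python sketch below applies that rule to a hypothetical inventory; the `Nic` type, the `eligible_for_asg` helper, and the VNet names are illustrative assumptions, not the values from the exam table.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Nic:
    vm_name: str  # virtual machine that owns the network interface
    vnet: str     # virtual network of the subnet the NIC is attached to


def eligible_for_asg(first_nic: Nic, candidates: List[Nic]) -> List[str]:
    """Return the VMs whose NIC could join the ASG: only NICs in the same
    virtual network as the first NIC added to the group qualify."""
    return [nic.vm_name for nic in candidates if nic.vnet == first_nic.vnet]


if __name__ == "__main__":
    # Hypothetical inventory, not the values from the exam table.
    vm1 = Nic("VM1", "vnet-a")
    others = [Nic("VM2", "vnet-a"), Nic("VM3", "vnet-b"), Nic("VM4", "vnet-a")]
    print(eligible_for_asg(vm1, others))  # ['VM2', 'VM4']
```

Because a virtual network belongs to a single region, filtering on the VNet also excludes NICs in other regions.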
