Practice Test #8

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.


Answers & Explanations Verified by 3 AI Models

Every answer is cross-verified by 3 leading AI models to check its accuracy. Each question comes with a detailed explanation for every option and an in-depth analysis of the question.

Practice Questions

You create an App Service plan named Plan1 and an Azure web app named webapp1. You discover that the option to create a staging slot is unavailable. You need to create a staging slot for Plan1. What should you do first?

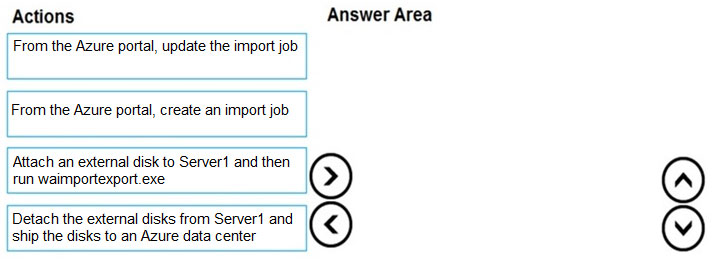

DRAG DROP - You have an Azure subscription that contains a storage account. You have an on-premises server named Server1 that runs Windows Server 2016. Server1 has 2 TB of data. You need to transfer the data to the storage account by using the Azure Import/Export service. In which order should you perform the actions? To answer, move all actions from the list of actions to the answer area and arrange them in the correct order. NOTE: More than one order of answer choices is correct. You will receive credit for any of the correct orders you select. Select and Place:

Select the correct answer(s) in the image below.

You have five Azure virtual machines that run Windows Server 2016. The virtual machines are configured as web servers. You have an Azure load balancer named LB1 that provides load balancing services for the virtual machines. You need to ensure that visitors are serviced by the same web server for each request. What should you configure?

You have an Azure Kubernetes Service (AKS) cluster named AKS1. You need to configure cluster autoscaler for AKS1. Which two tools should you use? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

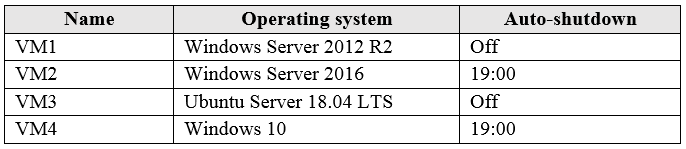

You have an Azure subscription that has a Recovery Services vault named Vault1. The subscription contains the virtual machines shown in the following table:

You plan to schedule backups to occur every night at 23:00. Which virtual machines can you back up by using Azure Backup?


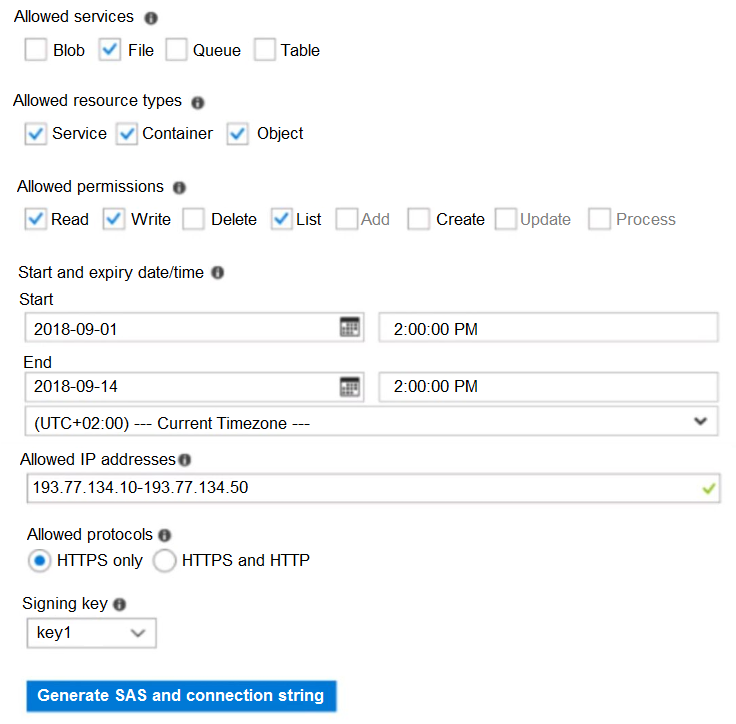

HOTSPOT - You have an Azure subscription named Subscription1. In Subscription1, you create an Azure file share named share1. You create a shared access signature (SAS) named SAS1 as shown in the following exhibit:

To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

If on September 2, 2018, you run Microsoft Azure Storage Explorer on a computer that has an IP address of 193.77.134.1, and you use SAS1 to connect to the storage account, you ______.

If on September 10, 2018, you run the net use command on a computer that has an IP address of 193.77.134.50, and you use SAS1 as the password to connect to share1, you ______.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure virtual machine named VM1 that runs Windows Server 2016. You need to create an alert in Azure when more than two error events are logged to the System event log on VM1 within an hour. Solution: You create an event subscription on VM1. You create an alert in Azure Monitor and specify VM1 as the source. Does this meet the goal?

You are planning the move of App1 to Azure. You create a network security group (NSG). You need to recommend a solution to provide users with access to App1. What should you recommend?

You have an Azure DNS zone named adatum.com. You need to delegate a subdomain named research.adatum.com to a different DNS server in Azure. What should you do?

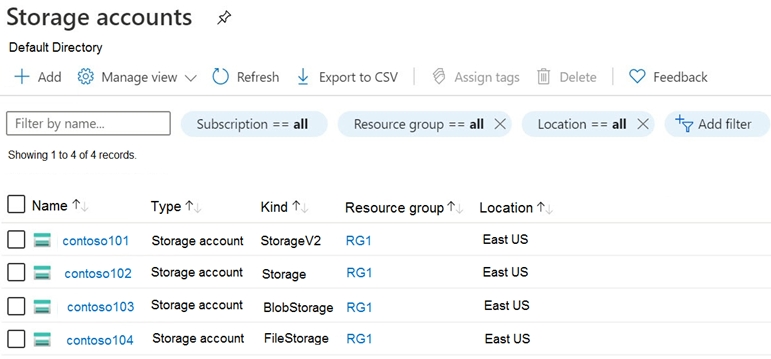

HOTSPOT - You have an Azure subscription that contains the storage accounts shown in the following exhibit.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

You can create a premium file share in ______

You can use the Archive access tier in ______
