Practice Test #3

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Powered by AI

Answers and Explanations Verified by 3 AIs

Each answer is verified by 3 leading AI models to ensure maximum accuracy. Get a detailed explanation for each option and an in-depth analysis of each question.

Practice Questions

You have an Azure subscription that contains a virtual machine named VM1. VM1 hosts a line-of-business application that is available 24 hours a day. VM1 has one network interface and one managed disk. VM1 uses the D4s v3 size. You plan to make the following changes to VM1:

- Change the size to D8s v3.
- Add a 500-GB managed disk.
- Add the Puppet Agent extension.
- Enable Desired State Configuration Management.

Which change will cause downtime for VM1?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure Active Directory (Azure AD) tenant named Adatum and an Azure subscription named Subscription1. Adatum contains a group named Developers. Subscription1 contains a resource group named Dev. You need to provide the Developers group with the ability to create Azure logic apps in the Dev resource group. Solution: On Subscription1, you assign the DevTest Labs User role to the Developers group. Does this meet the goal?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure virtual machine named VM1 that runs Windows Server 2016. You need to create an alert in Azure when more than two error events are logged to the System event log on VM1 within an hour. Solution: You create an Azure Log Analytics workspace and configure the data settings. You install the Microsoft Monitoring Agent on VM1. You create an alert in Azure Monitor and specify the Log Analytics workspace as the source. Does this meet the goal?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure virtual machine named VM1 that runs Windows Server 2016. You need to create an alert in Azure when more than two error events are logged to the System event log on VM1 within an hour. Solution: You create an Azure Log Analytics workspace and configure the data settings. You add the Microsoft Monitoring Agent VM extension to VM1. You create an alert in Azure Monitor and specify the Log Analytics workspace as the source. Does this meet the goal?

HOTSPOT - You plan to deploy five virtual machines to a virtual network subnet. Each virtual machine will have a public IP address and a private IP address. Each virtual machine requires the same inbound and outbound security rules. What is the minimum number of network interfaces and network security groups that you require? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Minimum number of network interfaces: ______

Minimum number of network security groups: ______


You have an Azure subscription that includes data in the following locations:

| Name | Type |
| --- | --- |
| container1 | Blob container |
| share1 | Azure Files share |
| DB1 | SQL database |
| Table1 | Azure Table |

You plan to export data by using an Azure Import/Export job named Export1. You need to identify the data that can be exported by using Export1. Which data should you identify?

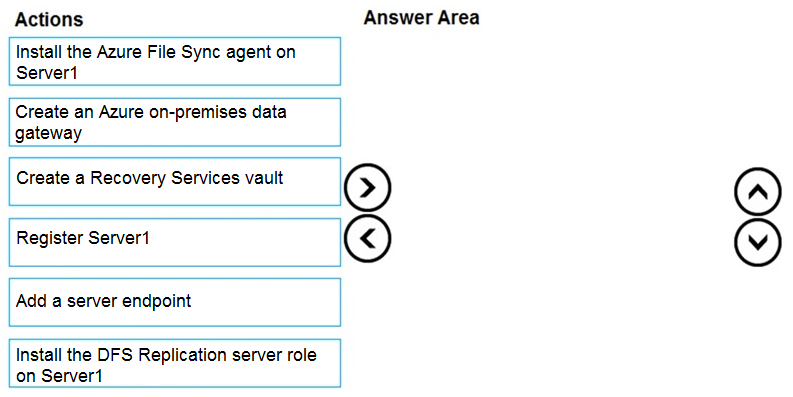

DRAG DROP - You have an on-premises file server named Server1 that runs Windows Server 2016. You have an Azure subscription that contains an Azure file share. You deploy an Azure File Sync Storage Sync Service, and you create a sync group. You need to synchronize files from Server1 to Azure. Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order. Select and Place:

Select the correct answer(s) in the image below.

You have an Azure subscription named Subscription1. You have 5 TB of data that you need to transfer to Subscription1. You plan to use an Azure Import/Export job. What can you use as the destination of the imported data?

You have an Azure Resource Manager template named Template1 that is used to deploy an Azure virtual machine. Template1 contains the following text:

    "location": {
        "type": "String",
        "defaultValue": "eastus",
        "allowedValues": [
            "canadacentral",
            "eastus",
            "westeurope",
            "westus"
        ]
    }

The variables section in Template1 contains the following text:

    "location": "westeurope"

The resources section in Template1 contains the following text:

    "type": "Microsoft.Compute/virtualMachines",
    "apiVersion": "2018-10-01",
    "name": "[variables('vmName')]",
    "location": "westeurope",

You need to deploy the virtual machine to the West US location by using Template1. What should you do?
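As a study aid, the following Python sketch models how a resource property in a template could be resolved. It is a deliberately simplified, hypothetical model and not the Azure Resource Manager implementation: the `resolve` function and the `EXPR` pattern are invented for illustration, only the `[parameters('...')]` and `[variables('...')]` expression forms are handled, and the deployment-time parameter value `westus` is an assumption.

```python
import re

# Hypothetical, heavily simplified model of template expression resolution.
# Only [parameters('name')] and [variables('name')] are recognized here.
EXPR = re.compile(r"^\[(parameters|variables)\('([^']+)'\)\]$")


def resolve(value, parameters, variables):
    """Return the value a resource property would take in this toy model."""
    match = EXPR.match(value)
    if match is None:
        # A plain string is a literal: parameters and variables are not consulted.
        return value
    source, name = match.groups()
    return parameters[name] if source == "parameters" else variables[name]


# Values mirroring the snippets quoted in the question.
parameters = {"location": "westus"}     # assumed value supplied at deployment
variables = {"location": "westeurope"}  # from the variables section

literal = resolve("westeurope", parameters, variables)
from_param = resolve("[parameters('location')]", parameters, variables)
from_var = resolve("[variables('location')]", parameters, variables)
print(literal, from_param, from_var)
```

Comparing the three results shows which section of a template actually determines a property when that property is written as a literal, as a parameter reference, or as a variable reference.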

HOTSPOT - You have a hybrid deployment of Azure Active Directory (Azure AD) that contains the users shown in the following table.

| Name | Type | Source |
| --- | --- | --- |
| User1 | Member | Azure AD |
| User2 | Member | Windows Server Active Directory |
| User3 | Guest | Microsoft account |

You need to modify the JobTitle and UsageLocation attributes for the users. For which users can you modify the attributes from Azure AD? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

JobTitle: ______

UsageLocation: ______
