Practice Test #2

Simulate the real exam experience with 100 questions and a 120-minute time limit. Practice with AI-verified answers and detailed explanations.

Practice Questions

Which two statements describe the advantages of using a version control system? (Choose two.)

Correct. Branching and merging are fundamental VCS features. Branches allow isolated development for features, fixes, or experiments without impacting the main code line. Merging integrates changes back after validation, often via pull requests and reviews. This supports safer development and structured release workflows, which is especially valuable when managing automation code and network configuration changes.

Incorrect. Automating builds and infrastructure provisioning is typically handled by CI/CD tools (e.g., Jenkins, GitHub Actions, GitLab CI) and automation/provisioning tools (e.g., Ansible, Terraform). A VCS can trigger these tools via webhooks or pipeline integrations, but the automation capability is not provided by the VCS itself; it primarily stores and versions the source artifacts.

Correct. A VCS enables concurrent collaboration by multiple engineers on the same repository. It tracks changes through commits, supports comparing versions (diff), and provides mechanisms to detect and resolve conflicts when changes overlap. This is a key advantage for teams managing shared automation scripts, templates, and configuration files while maintaining traceability and accountability.

Incorrect. Tracking user stories, managing backlogs, and sprint planning are functions of Agile project management/issue tracking tools such as Jira, Azure Boards, or Trello. While these tools often integrate with VCS (linking commits to tickets), they are not inherent features or advantages of a version control system.

Incorrect. Unit testing is enabled by testing frameworks and good development practices (e.g., pytest, unittest, JUnit). A VCS can store test code and track changes to tests over time, but it does not help developers write effective unit tests by itself. Testing and version control are complementary but distinct capabilities.

Question Analysis

Core Concept: This question tests understanding of Version Control Systems (VCS) such as Git, which are foundational tools in software development and network automation. In CCNAAUTO contexts, VCS is used to manage source code (Python/Ansible), templates, and even network configuration files using Infrastructure as Code (IaC) practices.

Why the Answer is Correct: A is correct because branching and merging are core VCS capabilities. Branching lets engineers isolate work (new features, bug fixes, experiments) without destabilizing the main line of development (often called main/master). Merging then integrates validated changes back into the main branch, enabling controlled collaboration and release management.

C is correct because VCS enables multiple engineers to work on the same files concurrently while tracking changes and handling conflicts. VCS records who changed what and when, supports diff/compare, and provides conflict detection/resolution during merges, which is critical when teams collaborate on automation scripts or device configuration snippets.

Key Features / Best Practices: Key VCS features include commit history (audit trail), diffs, tags/releases, branching strategies (feature branches, GitFlow, trunk-based development), and pull requests/code reviews (often via platforms like GitHub/GitLab/Bitbucket). In network automation, storing configurations and automation code in Git supports repeatability, peer review, and rollback (reverting to a known-good commit). This aligns with DevOps practices and improves change control.

Common Misconceptions: B may sound plausible because VCS is often used alongside CI/CD tools, but VCS itself does not automate builds or provisioning; that is the role of CI/CD systems (Jenkins, GitHub Actions, GitLab CI) and IaC/provisioning tools (Terraform, Ansible). D describes Agile project management tooling (Jira/Azure Boards), not VCS. E relates to testing practices; while tests can be stored in VCS, the VCS does not inherently enable writing unit tests.

Exam Tips: For exam questions, separate “source control” capabilities (history, collaboration, branching/merging, rollback, auditability) from adjacent ecosystem tools (CI/CD pipelines, issue tracking, testing frameworks). If an option describes workflow automation, backlog management, or test authoring, it is usually not a direct VCS advantage. Focus on collaboration and change tracking as the primary VCS value.
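The diff/compare capability mentioned in the analysis can be illustrated without Git itself. The following is a minimal sketch using Python's standard `difflib` module; the configuration lines and the `config@previous` / `config@current` labels are invented for illustration, and a real VCS stores and compares the versions for you.

```python
import difflib

# Two hypothetical versions of the same configuration snippet.
old_version = [
    "interface GigabitEthernet0/1",
    " description uplink",
    " switchport mode access",
]
new_version = [
    "interface GigabitEthernet0/1",
    " description uplink",
    " switchport mode trunk",
]

# unified_diff produces the unified format, the style `git diff` also uses.
diff_lines = list(
    difflib.unified_diff(
        old_version,
        new_version,
        fromfile="config@previous",  # illustrative label, not a real path
        tofile="config@current",     # illustrative label, not a real path
        lineterm="",
    )
)

for line in diff_lines:
    print(line)
```

Lines prefixed with `-` were removed and lines prefixed with `+` were added, which is the change record a reviewer sees in a pull request.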

Which two elements are foundational principles of DevOps? (Choose two.)

Correct. DevOps is strongly associated with removing organizational silos by creating cross-functional teams and shared ownership across development and operations (often including security). This improves collaboration, shortens feedback loops, and reduces handoff friction. On exams, “cross-functional teams” and “shared responsibility” are classic DevOps cultural principles.

Incorrect. Microservices can complement DevOps by enabling independent deployments and smaller change sets, but they are an architectural style, not a foundational DevOps principle. DevOps can be implemented with monoliths or microservices. Treat microservices as a possible outcome/choice, not a definition of DevOps.

Incorrect. Containers are a deployment technology that often supports DevOps goals (portability, consistency, faster deployments), but DevOps does not require containers. Many DevOps implementations use VMs, bare metal, or platform services without containers. Exams typically classify containers as tooling, not foundational principles.

Correct. Automation is central to DevOps because it enables repeatable, reliable, and fast delivery (CI/CD, automated testing, IaC). The phrasing “automating over documenting” is a bit absolute, but it reflects the DevOps preference to automate repeatable processes rather than rely on manual runbooks. Documentation still matters, but automation is foundational.

Incorrect. Optimizing infrastructure cost is a valid business goal and may be improved through DevOps/FinOps practices, but it is not a foundational DevOps principle. DevOps primarily focuses on delivery speed, stability, collaboration, and continuous improvement; cost optimization is secondary and context-dependent.

Question Analysis

Core concept: DevOps is a cultural and operational approach that improves the speed, quality, and reliability of delivering software/services by aligning people, process, and technology. Foundational principles commonly emphasized in DevOps literature (e.g., CALMS: Culture, Automation, Lean, Measurement, Sharing) include breaking down silos via cross-functional collaboration and increasing automation to enable repeatable, fast, low-risk delivery.

Why the answers are correct: A is correct because DevOps explicitly targets the traditional separation between Development and Operations (and often Security) by forming cross-functional teams and shared ownership. This reduces handoff delays, misaligned incentives, and “throw it over the wall” behaviors. Cross-functional teams enable faster feedback loops, better incident response, and continuous improvement.

D is correct because automation is a core DevOps enabler. Automating builds, tests, deployments, and infrastructure provisioning (CI/CD and Infrastructure as Code) reduces human error, increases consistency, and makes frequent releases feasible. While documentation remains important, DevOps prioritizes automating repeatable work so teams can focus on design, reliability, and learning.

Key features/best practices: DevOps practices include CI/CD pipelines, automated testing, configuration management, IaC (e.g., Ansible/Terraform), monitoring/observability, and blameless postmortems. Organizationally, it includes shared metrics (lead time, deployment frequency, change failure rate, MTTR) and shared responsibility for uptime and customer outcomes.

Common misconceptions: Microservices (B) and containers (C) are popular with DevOps but are not foundational principles; they are architectural/deployment choices that can support DevOps goals but are not required. Cost optimization (E) is a business objective and may be improved by DevOps, but it is not a defining principle.

Exam tips: When asked for “foundational principles,” choose culture/organizational alignment and automation/continuous delivery concepts rather than specific technologies (containers) or architectures (microservices). Look for keywords like “breaking silos,” “shared ownership,” “automation,” “continuous integration,” and “continuous delivery.”

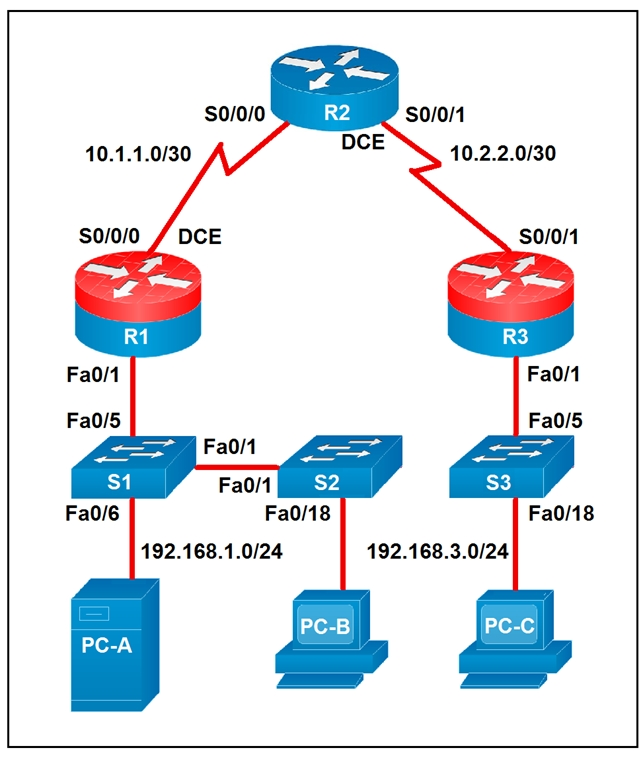

Refer to the exhibit.

Which two statements about the network diagram are true? (Choose two.)

False. PC-B is connected to the LAN labeled 192.168.1.0/24, which means the subnet uses a 24-bit network prefix. A statement claiming 18 bits are dedicated to the network portion would correspond to a /18 mask, not /24. Therefore the diagram contradicts this option directly.

This statement is true because router R2 has two separate serial interfaces shown in the diagram: S0/0/0 toward R1 and S0/0/1 toward R3. Each interface is connected to a different /30 WAN subnet, 10.1.1.0/30 and 10.2.2.0/30 respectively. The topology explicitly labels both serial connections, so at least one router indeed has two connected serial interfaces. In fact, R2 is the router that satisfies this condition.

False. R1 and R3 do not share a common subnet with each other. R1 connects to R2 using 10.1.1.0/30, while R3 connects to R2 using 10.2.2.0/30, and those are distinct Layer 3 networks. Since each router interface belongs to its own labeled /30 subnet, R1 and R3 are not in the same subnet.

This statement is true because both PC-A and PC-B are shown on the 192.168.1.0/24 network. PC-A connects through S1 and PC-B connects through S2, and S1 and S2 are linked together at Layer 2, extending the same LAN segment. Since no router separates those two hosts, they remain in the same IP subnet. Therefore PC-A and PC-B share the same subnet.

False. PC-C is on subnet 192.168.3.0/24, and a /24 contains 256 total IP addresses. However, two of those addresses are reserved for the network and broadcast addresses, leaving only 254 usable host addresses in traditional IPv4 subnetting. So the subnet cannot contain 256 hosts.

Question Analysis

Core concept: Determine subnet membership and interface connectivity from the topology labels and masks shown in the diagram. Why correct: R2 clearly has two active serial links, and PC-A plus PC-B are both attached to the same 192.168.1.0/24 Layer 2 domain through interconnected switches, so those two statements are true. Key features: The WAN links are separate /30 point-to-point subnets, while the left-side LAN is a shared /24 subnet extended across S1 and S2. Common misconceptions: Confusing total addresses with usable hosts in a /24, or assuming routers connected through another router share a subnet. Exam tips: Read the subnet labels carefully, distinguish Layer 2 switch extension from Layer 3 routing boundaries, and remember that a /24 provides 254 usable host addresses in standard IPv4 subnetting.
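The subnet arithmetic behind these answers can be checked with Python's standard `ipaddress` module. This is a small sketch, not part of the exam material; the host addresses used for PC-A and PC-B are hypothetical, since the explanations only name the 192.168.1.0/24 subnet.

```python
import ipaddress

# PC-C's LAN: total addresses versus usable host addresses in a /24.
lan = ipaddress.ip_network("192.168.3.0/24")
total_addresses = lan.num_addresses        # includes network and broadcast
usable_hosts = len(list(lan.hosts()))      # excludes network and broadcast

# The two WAN links are separate /30 subnets, so R1 and R3 share no subnet.
wan_r1_r2 = ipaddress.ip_network("10.1.1.0/30")
wan_r2_r3 = ipaddress.ip_network("10.2.2.0/30")
wan_links_overlap = wan_r1_r2.overlaps(wan_r2_r3)

# Hypothetical host addresses for PC-A and PC-B (assumed for illustration).
shared_lan = ipaddress.ip_network("192.168.1.0/24")
pc_a = ipaddress.ip_address("192.168.1.10")
pc_b = ipaddress.ip_address("192.168.1.20")
same_subnet = pc_a in shared_lan and pc_b in shared_lan

print(total_addresses, usable_hosts, wan_links_overlap, same_subnet)
# prints: 256 254 False True
```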

A developer is reviewing code that was written by a colleague. It runs fine, but there are many lines of code to do a seemingly simple task repeatedly. Which action organizes the code?

Removing “unnecessary tests” is not a standard approach to organizing code and can reduce reliability. Tests (unit/integration) are typically kept or improved during refactoring to ensure behavior doesn’t change. The problem described is duplicated implementation, not excessive validation logic. Eliminating tests may make the code shorter, but it does not improve structure, reuse, or maintainability and is generally a poor practice.

Reverse engineering and rewriting the logic is excessive and risky, especially since the code “runs fine.” Good refactoring is behavior-preserving and incremental: you improve structure without changing what the program does. A full rewrite increases the chance of introducing defects and is not the typical answer for organizing repeated code. Exam-wise, rewrites are rarely the best first step when a targeted refactor solves the issue.

Extracting repeated code into functions is the classic refactoring technique for duplication. A well-named function encapsulates the repeated steps, accepts parameters for the parts that vary, and returns results consistently. This improves readability, reduces maintenance effort, and supports reuse across scripts and modules—common in network automation where the same API call patterns or parsing logic recur in multiple places.

Loops reduce repetition when the same operation is performed over a list (for example, configuring many devices or interfaces). However, loops alone don’t necessarily “organize” code when the repetition occurs in multiple places or represents a reusable procedure. Often, the best design is a function that performs the task and a loop that calls it for each item. Since the question emphasizes organizing repeated pieces, functions are the more direct answer.

Question Analysis

Core Concept: This question tests code organization and maintainability concepts: identifying duplicated logic (“repeated code”) and applying abstraction to improve readability, reuse, and long-term support. In software development (including network automation scripts), repeated blocks are a classic “code smell” that should be addressed with refactoring.

Why the Answer is Correct: Option C is correct because creating functions (or methods) is a primary way to organize repeated logic. When the same sequence of steps appears multiple times, extracting it into a function centralizes that behavior behind a clear name and interface (parameters/return values). This reduces duplication, makes the intent clearer, and ensures that future changes are made in one place rather than many, which is critical in automation where small changes (API endpoints, payload formats, authentication) can otherwise require editing dozens of lines.

Key Features / Best Practices:

- DRY principle (Don’t Repeat Yourself): minimize duplication by encapsulating repeated behavior.
- Abstraction and modularity: functions provide a reusable unit with inputs/outputs.
- Testability: functions are easier to unit test than scattered repeated blocks.
- Maintainability: updates (e.g., changing a REST call header or error handling) are applied once.

In Cisco automation contexts, this often means wrapping repeated API calls, device connection logic, parsing routines, or configuration templates into functions (or classes/modules) and reusing them across workflows.

Common Misconceptions:

- Loops (Option D) can reduce repetition only when the repeated code differs mainly by iterating over a list of items. If the repetition is the same “procedure” used in multiple places, functions are the more general organizing tool. Often the best solution is both: a function that performs the task, called inside a loop.
- Removing tests (Option A) is not “organizing” code; it risks reducing correctness and is unrelated to duplication.
- Reverse engineering and rewriting logic (Option B) is unnecessary and risky when the code already works; refactoring should be incremental and behavior-preserving.

Exam Tips: For CCNAAUTO-style questions, map keywords to concepts: “repeated code” strongly points to refactoring via functions/modules (DRY). Choose loops when the question emphasizes iterating over a collection (devices, interfaces, VLANs). Choose functions when the emphasis is organizing repeated procedures and improving maintainability.
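The "function called inside a loop" pattern described above might look like the following sketch. The function name `build_interface_config`, the port inventory, and the generated configuration lines are all hypothetical and chosen only to show the refactoring idea.

```python
def build_interface_config(name, vlan_id, description):
    """Return the configuration lines for one access port."""
    return [
        f"interface {name}",
        f" description {description}",
        " switchport mode access",
        f" switchport access vlan {vlan_id}",
    ]


# Hypothetical port inventory: (interface name, VLAN, description).
ports = [
    ("GigabitEthernet0/2", 6, "guest"),
    ("GigabitEthernet0/3", 6, "guest"),
    ("GigabitEthernet0/4", 7, "corporate"),
]

# The loop handles iteration; the function holds the repeated procedure once.
config_lines = []
for name, vlan_id, description in ports:
    config_lines.extend(build_interface_config(name, vlan_id, description))

print("\n".join(config_lines))
```

If the template for an access port changes, only `build_interface_config` needs editing, which is the maintainability benefit the question is pointing at.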

Refer to the exhibit.

    ---
    - hosts: switch2960cx
      gather_facts: no
      tasks:
        - ios_l2_interface:
            name: GigabitEthernet0/1
            state: unconfigured
        - ios_l2_interface:
            name: GigabitEthernet0/1
            mode: trunk
            native_vlan: 1
            trunk_allowed_vlans: 6-8
            state: present
        - ios_vlan:
            vlan_id: 6
            name: guest-vlan
            interfaces:
              - GigabitEthernet0/2
              - GigabitEthernet0/3
        - ios_vlan:
            vlan_id: 7
            name: corporate-vlan
            interfaces:
              - GigabitEthernet0/4

Which two statements describe the configuration of the switch after the Ansible script is run? (Choose two.)

Correct. Gi0/1 is configured as a trunk with trunk_allowed_vlans: 6-8, so VLAN 6 and VLAN 7 traffic from access ports assigned to those VLANs can be carried over Gi0/1 to another device. The trunk provides a path for those VLANs off-switch (or to another switch), assuming the far end is also trunking and permits the same VLANs.

Incorrect. Although the first task sets Gi0/1 to state: unconfigured, the very next task configures Gi0/1 with mode: trunk and state: present. In Ansible, tasks are applied in order, so the final configuration is the trunk configuration, not an unconfigured interface.

Correct. The ios_vlan task for vlan_id: 6 includes interfaces Gi0/2 and Gi0/3. This results in those interfaces being placed into VLAN 6 as access ports (typical IOS behavior when assigning a switchport to a VLAN without specifying trunking). Therefore Gi0/2 and Gi0/3 are access ports in VLAN 6.

Incorrect. A trunk on Gi0/1 does not enable traffic to flow between ports 0/2 to 0/5 by itself. Ports communicate at Layer 2 only if they are in the same VLAN. Here, Gi0/2 and Gi0/3 are in VLAN 6, Gi0/4 is in VLAN 7, and nothing is stated about Gi0/5. Inter-VLAN traffic would require routing, not just a trunk.

Incorrect. The playbook does not reference GigabitEthernet0/6 at all, and there is no configuration that would connect traffic from Gi0/2 and Gi0/3 specifically to Gi0/6. The only uplink-like configuration shown is the trunk on Gi0/1, which carries VLANs 6-8, not a mapping to Gi0/6.

Question Analysis

Core concept: This question tests Ansible network automation behavior (idempotent configuration) using Cisco IOS modules, specifically how ios_l2_interface and ios_vlan affect switchport mode/VLAN membership and what a trunk’s allowed VLAN list implies for traffic forwarding.

Why the answer is correct: Task 1 sets GigabitEthernet0/1 to state: unconfigured, which removes L2 switchport configuration (effectively a clean baseline). Task 2 then explicitly configures Gi0/1 as a trunk with native VLAN 1 and allowed VLANs 6-8. That means VLANs 6, 7, and 8 are permitted to traverse Gi0/1 (tagged, except native VLAN 1 untagged). Therefore, any access ports placed into VLANs 6 or 7 can have their VLAN traffic carried out Gi0/1 toward another switch/router-on-a-stick, etc. This supports statement A.

Task 3 creates VLAN 6 named guest-vlan and assigns interfaces Gi0/2 and Gi0/3 under the VLAN’s interface list. On Cisco IOS, assigning an interface to a VLAN via these automation modules results in those ports being configured as access ports in that VLAN (unless otherwise specified as trunk). Thus Gi0/2 and Gi0/3 become access ports for VLAN 6, supporting statement C.

Key features / best practices:

- The playbook is sequential: later tasks override earlier ones (unconfigured then present trunk).
- Trunk allowed VLAN list controls which VLANs can be forwarded on the trunk; it does not magically bridge all ports together.
- VLAN membership is per-VLAN broadcast domain; inter-VLAN traffic still requires L3 routing.

Common misconceptions:

- Confusing “trunk exists” with “all ports can talk to each other.” A trunk only carries VLANs between devices; it does not provide inter-VLAN connectivity.
- Misreading “unconfigured” as the final state for Gi0/1; it is immediately reconfigured as a trunk in the next task.

Exam tips:

- Always evaluate Ansible tasks in order and determine the final intended state.
- Remember: access ports in the same VLAN can communicate at Layer 2; different VLANs require Layer 3.
- For trunk questions, focus on native VLAN and allowed VLANs to infer what traffic can traverse the link.
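The "evaluate tasks in order" advice can be traced with a toy model. The sketch below is only an illustration of the ordering logic from the analysis; it does not use Ansible, it is not how the ios_l2_interface or ios_vlan modules are implemented, and the dictionary layout is an assumption made for this example.

```python
# Toy model: each task is (interface, settings). None stands for
# "state: unconfigured", which clears any earlier L2 settings.
tasks = [
    ("GigabitEthernet0/1", None),
    ("GigabitEthernet0/1", {"mode": "trunk", "native_vlan": 1,
                            "allowed_vlans": {6, 7, 8}}),
    ("GigabitEthernet0/2", {"mode": "access", "vlan": 6}),
    ("GigabitEthernet0/3", {"mode": "access", "vlan": 6}),
    ("GigabitEthernet0/4", {"mode": "access", "vlan": 7}),
]


def apply_tasks(task_list):
    """Apply tasks in order; a later task for an interface wins."""
    state = {}
    for interface, settings in task_list:
        if settings is None:
            state.pop(interface, None)
        else:
            state[interface] = settings
    return state


final_state = apply_tasks(tasks)
trunk = final_state["GigabitEthernet0/1"]

# VLANs that have access ports AND are allowed on the trunk.
access_vlans = {s["vlan"] for s in final_state.values() if s["mode"] == "access"}
carried = sorted(access_vlans & trunk["allowed_vlans"])

print(trunk["mode"], carried)
# prints: trunk [6, 7]
```

The final state for Gi0/1 is the trunk from the second task, and VLANs 6 and 7 are the access VLANs that the trunk can carry, matching statements A and C.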

Refer to the exhibit.

    #!/bin/bash
    read ndir
    while [ -d "$ndir" ]
    do
        cd $ndir
    done
    mkdir $ndir

What is the action of the Bash script that is shown?

Incorrect. The script does not iterate over all directories in the current folder (there is no `for` loop, no glob like `*`, and no directory listing). It only uses a single user-provided directory name (`ndir`) and repeatedly checks for that specific directory name as it changes into it.

Correct. The script waits for input (`read ndir`). If a directory with that name exists, it `cd`s into it and repeats the check from the new location, effectively walking down a chain of nested directories with the same name. When it finds a level where that directory does not exist, it exits the loop and creates it with `mkdir $ndir`.

Incorrect. It does not always “go into the directory entered and create a new directory with the same name” at that same level. If the directory exists, it goes into it and keeps going deeper as long as another directory with the same name exists. The `mkdir` occurs only after the loop ends, at the deepest level reached.

Incorrect. `$ndir` is a variable expanded by the shell; it is not a literal directory name “$ndir”. Also, the script does not necessarily go into a directory called `$ndir` once and then create another `$ndir` alongside it; it may traverse multiple nested levels before creating the missing directory.

Question Analysis

Core concept: This question tests basic Bash control flow and filesystem tests used in automation scripts. Specifically: reading user input (`read`), testing whether a directory exists (`-d`), looping (`while`), changing directories (`cd`), and creating directories (`mkdir`).

Why correct: The script first waits for user input with `read ndir`, storing the entered string in the variable `ndir`. Then it evaluates `while [ -d "$ndir" ]`: as long as a directory with that name exists relative to the current working directory, it executes the loop body. Inside the loop, `cd $ndir` changes into that directory, so the next test is performed one level deeper. When it reaches a location where no subdirectory with that same name exists, the loop stops and `mkdir $ndir` creates that missing directory there.

Key features: The script follows a single user-supplied directory name, not all directories. Because `cd` changes the working directory, each subsequent `-d "$ndir"` test is relative to the new location. The final `mkdir` therefore occurs at the deepest reachable level in a chain of nested directories with the same name.

Common misconceptions: A common mistake is to think the script creates the directory in the original starting folder, but the repeated `cd` changes where the final `mkdir` runs. Another misconception is that it loops through every directory in the current folder; it does not, because there is no iteration over a list of directory names.

Exam tips: Remember that `read` pauses for user input, `-d` checks whether a path is an existing directory, and `cd` changes the context for all later relative path operations. On exam questions, always track the current working directory after each loop iteration before deciding where a file or directory will be created.
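To track where the final `mkdir` lands, the same logic can be mirrored in Python and run inside a temporary directory. This is a sketch under the assumption that the user enters a simple relative directory name (here the hypothetical name `logs`); the helper `descend_and_create` is invented for this illustration and tracks the path explicitly instead of changing the process's working directory.

```python
import os
import tempfile


def descend_and_create(start_dir, name):
    """Mirror the script: descend while `name` exists, then create it."""
    current = start_dir
    while os.path.isdir(os.path.join(current, name)):
        current = os.path.join(current, name)  # counterpart of `cd $ndir`
    created = os.path.join(current, name)
    os.mkdir(created)                          # counterpart of `mkdir $ndir`
    return created


with tempfile.TemporaryDirectory() as root:
    # Pre-create root/logs/logs so the loop descends two levels.
    os.makedirs(os.path.join(root, "logs", "logs"))
    created = descend_and_create(root, "logs")
    relative_created = os.path.relpath(created, root)
    depth = len(relative_created.split(os.sep))
    print(relative_created, depth)
```

With two existing nested `logs` directories, the new directory is created at the third level, not in the starting folder.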

An application calls a REST API and expects a result set of more than 550 records, but each time the call is made, only 25 are returned. Which feature limits the amount of data that is returned by the API?

Pagination limits the number of records returned in a single API response by splitting results into pages (for example, 25 items per page). The client must request additional pages using parameters like page/limit, offset/limit, or by following a next-page link/token. A consistent return of exactly 25 records is a classic sign of a default page size.

A payload limit refers to a maximum message size, typically measured in bytes, and it can apply to requests, responses, or both depending on the API or gateway implementation. If a payload-size threshold were the issue, the behavior would usually depend on the total serialized size of the returned data rather than always stopping at exactly 25 records. APIs also more commonly signal payload-size problems with an error or require filtering, compression, or smaller queries. A fixed and repeatable record count is much more characteristic of pagination than of a payload-size control.

Service timeouts occur when the server or an intermediary cannot complete processing within a time threshold, often resulting in errors such as 408 Request Timeout or 504 Gateway Timeout. Timeouts do not normally produce a successful response with a predictable, fixed number of records; they more commonly cause failures or incomplete operations.

Rate limiting controls how many API calls a client can make in a given time window to protect the service. When exceeded, the API usually responds with 429 Too Many Requests and may include retry-after guidance. Rate limiting affects request frequency, not the number of records returned per successful request.

Question Analysis

Core concept: This question tests REST API response sizing controls, specifically pagination. Many APIs intentionally return results in “pages” (chunks) to protect the server, reduce response size, and improve client performance. Instead of returning all matching records in one response, the API returns a limited number per request and provides a mechanism to retrieve subsequent pages.

Why the answer is correct: Getting exactly 25 records every time strongly indicates a default page size (often called limit/pageSize/per_page). When an application expects 550+ records but only receives 25, the API is likely paginating the collection endpoint and returning only the first page. To retrieve all records, the client must follow pagination controls: increment a page parameter (page=2, page=3), use an offset (offset=25, offset=50), or follow a “next” link provided in the response (HATEOAS-style). Cisco platform APIs commonly implement this pattern and may include metadata such as totalCount, hasMore, nextPage, or links.next.

Key features / best practices: Pagination is typically implemented with:

- Page number + page size (page, limit/pageSize)
- Offset + limit
- Cursor-based pagination (cursor/after token) for stable traversal

Best practice is to read the API documentation for supported query parameters and to programmatically iterate until no “next” page exists. Also handle sorting/filtering consistently to avoid missing/duplicating items.

Common misconceptions:

- “Payload limit” sounds plausible, but payload limits usually relate to maximum request/response size in bytes, not a consistent record count like 25.
- “Service timeouts” would more likely cause errors (408/504) or truncated/failed responses, not a clean, repeatable 25-item result.
- “Rate limiting” restricts how many requests you can make per time window (429 Too Many Requests), not how many records are returned per request.

Exam tips: When you see a fixed small number of returned items (10/20/25/100) from a list endpoint, think pagination first. Look for parameters like limit, offset, page, per_page, or response fields/headers indicating next links and total counts. In automation scripts, implement loops to fetch all pages and respect any rate-limit headers while doing so.
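The "loop until no next page exists" advice can be sketched with an in-memory stand-in for the API, so the example runs without a network. The function `fake_list_endpoint`, the `next_offset` field, and the 560-record data set are assumptions for illustration; a real API documents its own parameter and field names.

```python
# 560 fake records stand in for the "more than 550" expected by the client.
RECORDS = [{"id": i} for i in range(1, 561)]


def fake_list_endpoint(offset=0, limit=25):
    """Stand-in for a paginated GET: one page plus paging metadata."""
    page = RECORDS[offset:offset + limit]
    has_more = offset + limit < len(RECORDS)
    next_offset = offset + limit if has_more else None
    return {"items": page, "next_offset": next_offset, "total": len(RECORDS)}


def fetch_all(limit=25):
    """Follow next_offset until the server reports no further page."""
    items, offset, calls = [], 0, 0
    while offset is not None:
        response = fake_list_endpoint(offset=offset, limit=limit)
        calls += 1
        items.extend(response["items"])
        offset = response["next_offset"]
    return items, calls


first_page = fake_list_endpoint()["items"]
all_items, call_count = fetch_all()

print(len(first_page), len(all_items), call_count)
# prints: 25 560 23
```

A single call returns only the default page of 25, which is the symptom in the question; retrieving everything takes 23 calls at that page size.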

Which API is used to obtain data about voicemail ports?

Webex Teams (now generally referred to as Webex messaging APIs) is used to manage spaces, messages, memberships, and some user-related collaboration functions in the Webex cloud. It does not provide inventory/configuration data for CUCM voicemail ports, which are on-prem telephony objects tied to call routing and Unity Connection integration. Choosing this option is a common confusion between “voicemail” as a concept and Webex messaging services.

Cisco Unified Communications Manager is the correct choice because voicemail ports are telephony configuration objects represented in CUCM (often as voicemail port devices and associated directory numbers). CUCM exposes these details through administrative APIs such as AXL (SOAP), which is specifically intended for retrieving and provisioning CUCM configuration data. Therefore, to obtain data about voicemail ports, you query CUCM via its API.

Finesse Gadgets relate to Cisco Finesse, a contact center desktop platform (commonly used with UCCX/UCCE). Finesse APIs and gadgets focus on agent state, dialogs, call control, and desktop UI integrations. They do not provide authoritative configuration data for CUCM voicemail ports. This option may seem plausible if you associate “ports” with call handling, but it’s the wrong platform for voicemail port inventory.

Webex Devices APIs are used to manage and monitor Webex Room/Desk devices and related cloud-managed endpoints (status, configuration, xAPI commands). Voicemail ports are not Webex devices; they are CUCM telephony constructs used for voicemail integration. As a result, Webex Devices APIs cannot be used to obtain voicemail port configuration data in CUCM.

Question Analysis

Core Concept: This question tests knowledge of which Cisco platform/API exposes telephony infrastructure inventory and configuration details, specifically voicemail ports. In Cisco collaboration environments, voicemail ports are typically associated with Cisco Unity Connection integration and are represented/managed as voice mail ports (directory numbers/devices) on Cisco Unified Communications Manager (CUCM).

Why the Answer is Correct: Cisco Unified Communications Manager provides APIs that allow you to query device and line configuration data, including voice mail ports. In automation contexts, this is commonly done via the AXL (Administrative XML) SOAP API, which is designed for provisioning and retrieving CUCM configuration objects (phones, gateways, route patterns, DNs, and voicemail port devices). Voicemail ports are CUCM-managed endpoints used for voicemail integration and call routing to Unity Connection; therefore, CUCM is the authoritative source for obtaining their configuration data.

Key Features / Best Practices:

- Use CUCM AXL to read objects such as devices (e.g., voicemail port device types) and directory numbers associated with voicemail ports.
- AXL is SOAP/XML and typically requires an application user with the Standard AXL API Access role (or equivalent) and appropriate permissions.
- For operational/state data (registrations, active calls), CUCM also has other interfaces (e.g., RIS/Serviceability), but “ports” as configuration objects are most directly retrieved via AXL.
- In exam scenarios, “obtain data about ports/devices/lines in CUCM” usually maps to CUCM APIs, not Webex or contact-center UI APIs.

Common Misconceptions: Candidates may confuse voicemail with messaging platforms (Webex Teams) or assume Webex cloud APIs manage voicemail ports. However, voicemail ports are a CUCM/Unity Connection telephony construct, not a Webex messaging construct. Similarly, Finesse is contact-center focused and does not manage CUCM voicemail port inventory.

Exam Tips: When you see terms like “ports,” “directory numbers,” “devices,” “route patterns,” or “CUCM configuration,” think CUCM AXL (administrative/provisioning) rather than Webex APIs. Webex APIs are typically for cloud messaging, meetings, devices, and user/space management, not CUCM telephony port objects.

Which two statements are true about Cisco UCS Manager, Cisco UCS Director, or Cisco Intersight APIs? (Choose two.)

Incorrect. UCS Manager’s traditional API is the XML API, not JSON-centric. Authentication is not typically HTTP Basic Auth with Base64-encoded credentials in the header. Instead, UCSM automation generally performs an aaaLogin call and then uses the returned session cookie (outCookie) for subsequent requests. JSON and Basic Auth are common patterns elsewhere, which makes this option a plausible distractor.

Correct. Cisco UCS Director exposes a REST API that can work with either XML or JSON representations. UCSD commonly uses an API key/token approach for authentication, provided in HTTP headers, which supports stateless automation and integration with orchestration systems. This contrasts with UCS Manager’s session-cookie model and aligns with modern REST API consumption patterns.

Incorrect. Cisco Intersight is a RESTful API platform that is JSON-based rather than XML-based. Authentication uses an API key pair (an API Key ID plus a secret key) to sign each request with an HTTP signature, which is a modern approach for SaaS APIs. The “requires an API key pair” part is true, but the “uses XML” encoding makes the statement wrong overall.

Correct. UCS Manager API interactions are classically XML-encoded (the UCS XML API). Authentication is session-based: you log in (aaaLogin) and receive a cookie/token (often called outCookie) that must be included with subsequent method calls to authorize them. The wording “cookie in the method” reflects that UCSM API calls carry the cookie value as part of the request payload/parameters.

Incorrect. Intersight API interactions are JSON-based; the API does not generally support XML encoding. Authentication does involve HTTP headers, but Intersight uses an API key pair to sign each request rather than sending a single static API key in a header. The “XML or JSON” portion is the key error and makes the statement false.

Question Analysis

Core concept: This question tests how three Cisco compute/management platforms expose and secure their APIs: Cisco UCS Manager (UCSM), Cisco UCS Director (UCSD), and Cisco Intersight. For the exam, you must know (1) the data encoding format (XML vs JSON) and (2) the authentication mechanism (cookie/session vs API keys).

Why the answers are correct: UCS Manager’s classic API is the XML API. Programmatic access typically starts with an AAA login call (aaaLogin) that returns a session cookie (often referenced as an “outCookie”). Subsequent API calls must include that cookie to authenticate the session. That matches option D’s key idea: XML-encoded interactions and cookie-based session authentication. UCS Director provides a REST API that supports both XML and JSON payloads/representations. UCSD commonly uses an API key (or token) presented in the HTTP header to authenticate requests rather than UCSM-style session cookies. That aligns with option B: XML- or JSON-encoded interactions and API key in the HTTP header.

Key features / best practices:
- UCSM: Think “XML + session cookie.” Automation tools often maintain the cookie for the duration of a workflow, then explicitly log out. This is common in older enterprise management APIs.
- UCSD: Think “REST + XML/JSON + API key.” Header-based auth is easier for stateless automation and integration with external orchestrators.
- Intersight: Modern SaaS/hybrid management uses REST with JSON and an API key pair (API Key ID + secret used to sign requests). This is a key differentiator from UCSM/UCSD.

Common misconceptions: A frequent trap is assuming everything modern uses JSON + Basic Auth (Base64 credentials). UCSM does not primarily use JSON nor Basic Auth; it uses its XML API and session cookie. Another trap is thinking Intersight supports XML; it is JSON-centric and uses signed requests with an API key pair, not XML.

Exam tips: Memorize quick associations: UCSM = XML + cookie session; UCSD = REST + XML/JSON + API key header; Intersight = REST/JSON + API key pair (signed). When you see “cookie,” strongly suspect UCSM; when you see “API key pair/signature,” suspect Intersight.
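The contrast between UCSM's cookie-in-the-method model and UCSD's key-in-the-header model can be sketched as follows. This is an offline illustration that only builds and parses XML. The helper names, the sample credentials, and the sample login response are made up for the example; the class ID computeBlade is used only as a sample query target.

```python
import xml.etree.ElementTree as ET


def build_login(username, password):
    """UCSM: the session starts with an aaaLogin method call."""
    element = ET.Element("aaaLogin", inName=username, inPassword=password)
    return ET.tostring(element, encoding="unicode")


def extract_cookie(login_response_xml):
    """Pull the session cookie (outCookie) out of the aaaLogin response."""
    root = ET.fromstring(login_response_xml)
    cookie = root.get("outCookie")
    if not cookie:
        raise ValueError(root.get("errorDescr", "login failed: no outCookie returned"))
    return cookie


def build_class_query(cookie, class_id):
    """UCSM: every later method carries the cookie as an attribute of the payload."""
    element = ET.Element(
        "configResolveClass", cookie=cookie, classId=class_id, inHierarchical="false"
    )
    return ET.tostring(element, encoding="unicode")


def build_logout(cookie):
    """UCSM: release the session explicitly when the workflow ends."""
    return ET.tostring(ET.Element("aaaLogout", inCookie=cookie), encoding="unicode")


def ucsd_headers(api_key):
    """UCSD: the REST API key travels in an HTTP header instead of the payload."""
    return {"X-Cloupia-Request-Key": api_key}


# Made-up response for illustration; a real one comes back from the UCSM endpoint.
SAMPLE_LOGIN_RESPONSE = (
    '<aaaLogin cookie="" response="yes" outCookie="1700000000/example-cookie" '
    'outRefreshPeriod="600" outPriv="admin" />'
)

cookie = extract_cookie(SAMPLE_LOGIN_RESPONSE)
query = build_class_query(cookie, "computeBlade")
```

Each UCSM body would be POSTed to the XML API endpoint (typically https://<ucsm-host>/nuova). Intersight is left out on purpose: its request signing is involved enough that using the official SDK is usually the more practical route.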

What is the purpose of the Cisco VIRL software tool?

Incorrect. Verifying configurations against compliance standards is typically done with compliance/policy tools (e.g., Cisco NSO compliance features, Cisco DNA Center assurance/policy, or third-party config compliance platforms). VIRL/CML does not primarily evaluate configs against regulatory baselines; it provides a simulated environment to run and observe network behavior.

Incorrect. Automating API workflows is the role of orchestration/automation tools (e.g., Cisco NSO, Ansible Tower/AWX, CI/CD pipelines, or workflow engines). VIRL/CML may offer APIs to control labs, but that is supportive functionality; the main purpose is to host simulated network topologies for testing and learning.

Correct. Cisco VIRL, later rebranded as Cisco Modeling Labs, is built to simulate and model network topologies using virtual Cisco device images. It allows users to create realistic lab environments with routers, switches, and other nodes without needing physical hardware. This makes it valuable for testing routing behavior, validating configurations, and experimenting with automation scripts in an isolated environment. In certification and development contexts, its defining purpose is network simulation and modeling rather than compliance checking, workflow orchestration, or application benchmarking.

Incorrect. Testing performance of an application is generally handled by application performance management (APM) and load-testing tools (e.g., AppDynamics, JMeter, LoadRunner). VIRL/CML focuses on network device simulation and topology modeling, not benchmarking application response times or generating application load.

Question Analysis

Core Concept: Cisco VIRL (Virtual Internet Routing Lab), later evolved into Cisco Modeling Labs (CML), is a network simulation and modeling platform. It is used to build virtual topologies using Cisco virtual images (IOSv, IOSvL2, NX-OSv, CSR1000v, etc.) and to validate designs and configurations in a safe, repeatable lab environment.

Why the Answer is Correct: Option C is correct because VIRL’s primary purpose is to simulate and model networks. You can create multi-device topologies, interconnect them virtually, run routing/switching features, and observe control-plane and data-plane behavior without needing physical hardware. This is especially relevant to automation studies because it enables rapid prototyping and testing of configuration changes and automation scripts against realistic network behaviors.

Key Features / Best Practices: VIRL/CML provides a topology editor, a simulation engine, and integration with automation tooling. Common capabilities include: importing/exporting topologies, running multiple Cisco node types, capturing packets, and using APIs to programmatically start/stop labs. It also supports “day-0/day-1” style configuration injection (e.g., initial configs) so you can test templating tools (Ansible, Python/Netmiko, RESTCONF/NETCONF clients) in a controlled environment. Best practice is to use VIRL/CML as a pre-production validation step: test new routing policies, ACLs, or automation playbooks before deploying to production.

Common Misconceptions: Some candidates confuse VIRL with compliance tools (which check configs against standards) or with workflow automation platforms. While VIRL can be used alongside automation and can expose APIs, its core function is not “automate API workflows” but to provide the simulated network environment where those workflows can be tested.

Exam Tips: For CCNAAUTO-style questions, map tools to their primary role: VIRL/CML = modeling/simulation lab; compliance tools = policy/config validation; orchestration/workflow tools = automate processes; APM tools = application performance testing. If the question mentions virtual topologies, router/switch images, or lab simulation, the answer is VIRL/CML.
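As a rough illustration of starting and stopping labs programmatically, the sketch below prepares (but does not send) HTTP requests against a CML 2.x controller. The host name, lab ID, and helper names are hypothetical, and the endpoint paths (/api/v0/authenticate and /api/v0/labs/<id>/start|stop) are assumptions based on the CML 2.x REST API; verify them against the API documentation bundled with your controller.

```python
import json
import urllib.request


def auth_request(base_url, username, password):
    """Prepare the login call; the controller is assumed to answer with a bearer token."""
    payload = json.dumps({"username": username, "password": password}).encode()
    return urllib.request.Request(
        f"{base_url}/api/v0/authenticate",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def lab_action_request(base_url, token, lab_id, action):
    """Prepare a start or stop call for one lab, authorized with the bearer token."""
    if action not in ("start", "stop"):
        raise ValueError(f"unsupported lab action: {action}")
    return urllib.request.Request(
        f"{base_url}/api/v0/labs/{lab_id}/{action}",
        headers={"Authorization": f"Bearer {token}"},
        method="PUT",
    )


# Hypothetical values for illustration only.
BASE_URL = "https://cml.example.com"
login = auth_request(BASE_URL, "student", "not-a-real-password")
start = lab_action_request(BASE_URL, "example-token", "lab-1234", "start")
```

Passing either request to urllib.request.urlopen would actually send it. This API surface is supportive functionality for controlling the lab; the purpose of the tool remains network simulation, which is why "automate API workflows" is still the wrong answer.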
