Practice Test #2

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Powered by AI

Answers and explanations verified by three AI models

Each answer is verified by three leading AI models to maximize accuracy. Get a detailed explanation for each option and an in-depth analysis of each question.

Practice questions

Your on-premises network contains an SMB share named Share1. You have an Azure subscription that contains the following resources:

✑ A web app named webapp1

✑ A virtual network named VNET1

You need to ensure that webapp1 can connect to Share1. What should you deploy?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have an Azure subscription named Subscription1. Subscription1 contains a resource group named RG1. RG1 contains resources that were deployed by using templates. You need to view the date and time when the resources were created in RG1.

Solution: From the Subscriptions blade, you select the subscription, and then click Programmatic deployment.

Does this meet the goal?

You need to deploy an Azure virtual machine scale set that contains five instances as quickly as possible. What should you do?

Your company has three offices. The offices are located in Miami, Los Angeles, and New York. Each office contains a datacenter. You have an Azure subscription that contains resources in the East US and West US Azure regions. Each region contains a virtual network. The virtual networks are peered. You need to connect the datacenters to the subscription. The solution must minimize network latency between the datacenters. What should you create?

You have an Azure web app named webapp1. You have a virtual network named VNET1 and an Azure virtual machine named VM1 that hosts a MySQL database. VM1 connects to VNET1. You need to ensure that webapp1 can access the data hosted on VM1. What should you do?


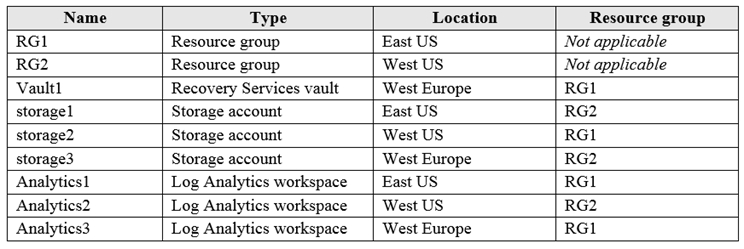

HOTSPOT - You have an Azure subscription named Subscription1 that contains the resources shown in the following table:

You plan to configure Azure Backup reports for Vault1. You are configuring the Diagnostics settings for the AzureBackupReports log. Which storage accounts and which Log Analytics workspaces can you use for the Azure Backup reports of Vault1? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Storage accounts: ______

Log Analytics workspaces: ______

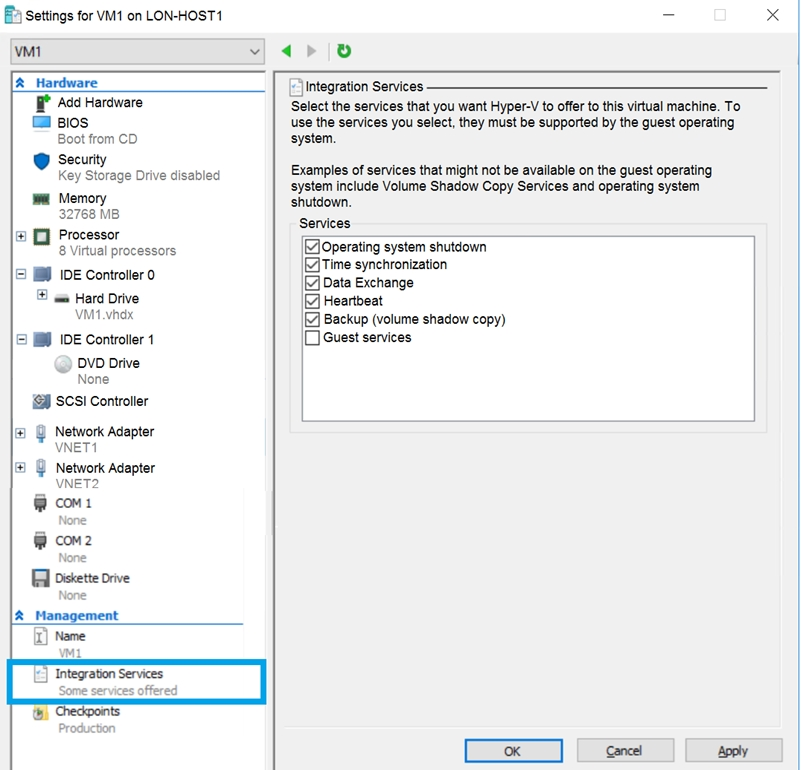

You have an Azure subscription. You have an on-premises virtual machine named VM1. The settings for VM1 are shown in the exhibit. (Click the Exhibit tab.)

You need to ensure that you can use the disks attached to VM1 as a template for Azure virtual machines. What should you modify on VM1?

Select the correct answer(s) in the image below.

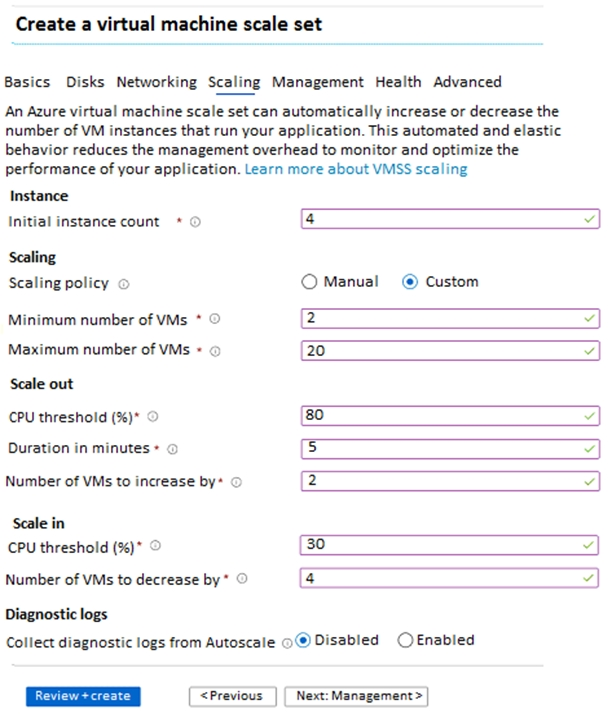

HOTSPOT - You create a virtual machine scale set named Scale1. Scale1 is configured as shown in the following exhibit.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:

Select the correct answer(s) in the image below.

If Scale1 is utilized at 85 percent for six minutes after it is deployed, Scale1 will be running ______.

If Scale1 is first utilized at 25 percent for six minutes after it is deployed, and then utilized at 50 percent for six minutes, Scale1 will be running ______.

HOTSPOT - You plan to create an Azure Storage account in the Azure region of East US 2. You need to create a storage account that meets the following requirements:

✑ Replicates synchronously.

✑ Remains available if a single data center in the region fails.

How should you configure the storage account? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Replication: ______

Account type: ______
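As a study aid, the two requirements above can be checked mechanically against a simplified summary of common Azure Storage redundancy options. This is a minimal sketch, not part of the original question: the class `RedundancyOption`, the function `matching_options`, and the property table are hypothetical and cover only four options, so treat the result as a way to reason about the replication choice rather than as an authoritative answer key.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedundancyOption:
    name: str
    # True when every copy is written synchronously before the write completes.
    fully_synchronous: bool
    # True when copies in the primary region are spread across availability zones,
    # so losing one datacenter does not take the data offline.
    spans_availability_zones: bool


# Simplified summary (an assumption of this sketch): GRS and RA-GRS keep the
# primary copies in one datacenter and copy to the secondary region asynchronously.
OPTIONS = [
    RedundancyOption("LRS", fully_synchronous=True, spans_availability_zones=False),
    RedundancyOption("ZRS", fully_synchronous=True, spans_availability_zones=True),
    RedundancyOption("GRS", fully_synchronous=False, spans_availability_zones=False),
    RedundancyOption("RA-GRS", fully_synchronous=False, spans_availability_zones=False),
]


def matching_options(require_synchronous: bool, require_zone_span: bool) -> list[str]:
    """Return the names of options that satisfy the requested requirements."""
    return [
        option.name
        for option in OPTIONS
        if (option.fully_synchronous or not require_synchronous)
        and (option.spans_availability_zones or not require_zone_span)
    ]


if __name__ == "__main__":
    # Both requirements from the question: synchronous replication and
    # availability after a single datacenter failure.
    print(matching_options(require_synchronous=True, require_zone_span=True))
```

Filtering on both flags narrows the list to the zone-redundant option. The account type selection is not modeled here, because which account kinds support a given redundancy option has changed over time and should be checked against current documentation.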

HOTSPOT - You have an Azure File Sync group that has the endpoints shown in the following table.

Endpoint1 is a cloud endpoint.

Endpoint2 is a server endpoint.

Endpoint3 is a server endpoint.

Cloud tiering is enabled for Endpoint3. You add a file named File1 to Endpoint1 and a file named File2 to Endpoint2. On which endpoints will File1 and File2 be available within 24 hours of adding the files? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

File1: ______

File2: ______
