Databricks

Databricks Certified Generative AI Engineer Associate: Certified Generative AI Engineer Associate

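87+ exam questions (with AI-verified answers)

AI-powered

Triple AI-verified answers and explanations

Every answer for Databricks Certified Generative AI Engineer Associate: Certified Generative AI Engineer Associate is cross-checked by three leading AI models to ensure the highest accuracy. Detailed per-option explanations and in-depth question analysis are provided.

Exam domains

Practice questions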

A Generative AI Engineer has created a RAG application to look up answers to questions about a series of fantasy novels that readers are asking on the author's web forum. The fantasy novel texts are chunked and embedded into a vector store with metadata (page number, chapter number, book title), retrieved with the user's query, and provided to an LLM for response generation. The Generative AI Engineer used their intuition to pick the chunking strategy and associated configurations but now wants to choose the best values more methodically. Which TWO strategies should the Generative AI Engineer take to optimize their chunking strategy and parameters? (Choose two.)

Changing embedding models may improve semantic search, but it does not directly optimize chunking strategy/parameters. It also introduces a confounding factor: if performance changes, you can’t attribute the improvement to chunking. A methodical chunking optimization keeps the embedding model fixed while sweeping chunk size/overlap/splitting rules and measuring retrieval or end-to-end quality.

A query classifier to predict the best book and filter retrieval can reduce search space and improve precision, but it’s a separate architectural enhancement rather than chunking optimization. It also adds failure modes (misclassification) and doesn’t help determine the best chunk size/overlap or splitting boundaries. The question asks specifically for optimizing chunking strategy and parameters.

This is the classic, rigorous approach: choose an IR evaluation metric (Recall@k, NDCG, MRR, etc.) and run experiments varying chunking (paragraph vs. chapter splits, chunk size, overlap). Chunking affects whether relevant evidence is retrievable and how much noise is returned. Selecting the configuration that maximizes the metric is a repeatable, data-driven way to tune chunking.

Having an LLM suggest an ideal token count is not grounded in measured retrieval performance. Chunk size should be chosen based on whether the retriever returns the right evidence and supports grounded answers, not on a model’s intuition about token counts. This approach also ignores overlap, boundary effects, and the fact that optimal chunking depends on the corpus structure and question types.

LLM-as-a-judge is a practical evaluation method when labeled relevance data is limited. You can score whether retrieved chunks are the most appropriate evidence for known questions (and/or whether answers are grounded in retrieved text). By comparing judge scores across chunking configurations, you can systematically optimize chunk size, overlap, and splitting heuristics based on an explicit metric.
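As a minimal sketch of how judge scores could drive the comparison, the Python below averages an LLM judge's 1 to 5 ratings of retrieved chunks for each chunking configuration and keeps the best one. The `judge` callable, the prompt wording, and the eval-set dictionary layout are assumptions for illustration; in practice `judge` would wrap a call to a served LLM, and the questions would come from historical forum posts or a curated eval set.

```python
from statistics import mean
from typing import Callable, Dict, List

# Hypothetical judge interface: prompt in, integer score 1-5 out (e.g. a wrapper around an LLM endpoint).
Judge = Callable[[str], int]

JUDGE_TEMPLATE = (
    "You are grading retrieval for questions about a fantasy novel series.\n"
    "Question: {question}\n"
    "Retrieved chunk: {chunk}\n"
    "On a scale from 1 (irrelevant) to 5 (the most appropriate evidence), how well does "
    "this chunk support answering the question? Reply with a single integer."
)

def judge_config(eval_set: List[Dict], judge: Judge) -> float:
    """Mean judge score over every retrieved chunk produced by one chunking configuration."""
    scores = [
        judge(JUDGE_TEMPLATE.format(question=item["question"], chunk=chunk))
        for item in eval_set
        for chunk in item["retrieved"]
    ]
    return mean(scores)

def pick_best(results_by_config: Dict[str, List[Dict]], judge: Judge) -> str:
    """Return the configuration name with the highest mean judge score."""
    return max(results_by_config, key=lambda name: judge_config(results_by_config[name], judge))
```

Keeping the embedding model, top-k, and eval questions fixed while only the chunking configuration changes is what lets a difference in mean score be attributed to chunking.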

Question analysis

Core concept: This question tests systematic evaluation and tuning of Retrieval-Augmented Generation (RAG) retrieval quality, specifically chunking strategy (how to split documents) and chunking parameters (chunk size, overlap, separators). In Databricks GenAI workflows, chunking is treated as a retriever hyperparameter that should be optimized using offline evaluation (ground-truth Q/A sets) and/or model-based judging.

Why the answers are correct: C is correct because the most defensible way to optimize chunking is to define an information-retrieval metric (e.g., Recall@k, Precision@k, MRR, NDCG) and run controlled experiments varying chunk boundaries (paragraphs, sections, chapters), chunk size, and overlap. Chunking directly affects whether the retriever returns the passages that contain the answer and how much irrelevant text is included. Using retrieval metrics on a labeled set of questions with known relevant passages provides objective, repeatable selection of the best configuration. E is correct because LLM-as-a-judge is a practical evaluation approach when exact relevance labels are expensive. You can have an LLM score whether retrieved chunks are the “most appropriate” evidence for answering historical forum questions (or a curated eval set). This produces a scalar metric that can be optimized across chunking configurations. It aligns with modern RAG evaluation practices: judge the evidence quality and/or answer groundedness, then tune chunking to maximize those scores.

Key features / best practices:
- Build an evaluation dataset: questions + expected answer or expected source passages.
- Evaluate retrieval separately from generation (retrieval metrics) and optionally end-to-end (judge answer quality/groundedness).
- Sweep chunk size/overlap and splitting heuristics; keep other variables fixed to isolate chunking impact.
- Use consistent k (top-k) and reranking settings during experiments.

Common misconceptions:
- Changing embedding models (A) can improve retrieval, but it does not methodically optimize chunking parameters; it confounds variables.
- Query classification and metadata filtering (B) can help retrieval, but it’s a different optimization lever than chunking.
- Asking an LLM for “best token count” (D) is not an evaluation; it guesses a size without measuring retrieval success.

Exam tips: When asked to “methodically choose best chunking values,” look for answers involving (1) explicit evaluation metrics and (2) systematic experimentation/ablation. Databricks exam questions often distinguish between tuning retrieval (chunking, embeddings, indexing) and adding pipeline complexity (classifiers/filters) that doesn’t directly validate chunking quality.
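The sweep described above can be sketched in plain Python. This is a toy, not a Databricks API: words stand in for tokens, a shared-word count stands in for the fixed embedding model and vector index, and an eval item counts as a hit when its expected answer string appears in one of the top-k chunks. The function names (`chunk_words`, `recall_at_k`, `sweep`) are hypothetical.

```python
from typing import Dict, List, Tuple

def chunk_words(text: str, size: int, overlap: int) -> List[str]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # last window reached the end of the text
            break
    return chunks

def score(query: str, chunk: str) -> int:
    """Stand-in retriever score: number of distinct lowercase words shared with the query."""
    return len(set(query.lower().split()) & set(chunk.lower().split()))

def recall_at_k(chunks: List[str], eval_set: List[Tuple[str, str]], k: int) -> float:
    """Fraction of (question, answer) pairs whose answer appears in one of the top-k chunks."""
    hits = 0
    for question, answer in eval_set:
        top_k = sorted(chunks, key=lambda c: score(question, c), reverse=True)[:k]
        hits += any(answer in chunk for chunk in top_k)
    return hits / len(eval_set)

def sweep(text: str, eval_set: List[Tuple[str, str]], configs: List[Tuple[int, int]],
          k: int = 3) -> Tuple[Tuple[int, int], Dict[Tuple[int, int], float]]:
    """Score every (size, overlap) config with the same retriever and eval set; return the best."""
    results = {cfg: recall_at_k(chunk_words(text, *cfg), eval_set, k) for cfg in configs}
    best = max(results, key=results.get)
    return best, results
```

For example, with the text `"the dragon queen rules the north . her name is Ayla"` and the question `"what is the dragon queen name"` (expected answer `"Ayla"`), 3-word chunks score a Recall@1 of 0.0 because the best-matching chunk does not contain the name, while a single 11-word chunk scores 1.0. On a real corpus, larger chunks also bring in more noise, which is why the measured metric, not intuition, should decide.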

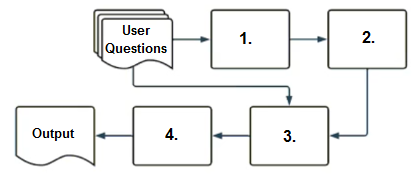

A company has a typical RAG-enabled, customer-facing chatbot on its website.

Select the correct sequence of components a user's questions will go through before the final output is returned. Use the diagram above for reference.

Correct. Standard RAG flow is: (1) embed the user query with an embedding model, (2) run vector similarity search over the vector index to retrieve relevant chunks, (3) build a context-augmented prompt by inserting retrieved passages plus instructions and the question, and (4) send that prompt to the response-generating LLM to produce the final answer.

Incorrect. A context-augmented prompt cannot be constructed before retrieval because the “context” comes from retrieved documents/chunks. Also, vector search requires a query embedding, so placing the embedding model after vector search breaks the dependency order. This sequence reverses key prerequisites in the RAG pipeline.

Incorrect. Putting the response-generating LLM first implies generation happens before retrieval and prompt augmentation, which is not the typical RAG pattern. Additionally, embeddings are required before vector search, and prompt augmentation must occur before the LLM generates the final response. This ordering is fundamentally inconsistent with standard RAG.

Incorrect. This places the response-generating LLM first and vector search later, which contradicts the core idea of RAG (retrieve context before generating). It also places context-augmented prompt before vector search, but the prompt can only be augmented after retrieval. The dependencies are out of order.

Question analysis

Core concept: This question tests the standard Retrieval-Augmented Generation (RAG) request flow: convert the user query to an embedding, retrieve relevant chunks via vector search, assemble a context-augmented prompt, then generate the final answer with an LLM.

Why the answer is correct: In a typical RAG chatbot, the user’s question first goes to an embedding model to produce a dense vector representation of the query. That vector is used to perform vector search (similarity search) against a vector index built from embedded documents/chunks. The retrieved passages are then inserted into a context-augmented prompt (often with system instructions, citations formatting rules, and the user question). Finally, the response-generating LLM consumes that prompt and produces the natural-language output. This matches option A’s sequence: 1) embedding model, 2) vector search, 3) context-augmented prompt, 4) response-generating LLM.

Key features / best practices (Databricks context):
- Embeddings: Use a consistent embedding model for both indexing and querying; store embeddings in a vector index (e.g., Databricks Vector Search).
- Retrieval: Tune top-k, filters (metadata constraints), and chunking strategy to improve relevance and reduce hallucinations.
- Prompt assembly: Include retrieved context, instructions to ground answers in sources, and optionally require citations; keep within token limits.
- Generation: Use an instruction-tuned LLM endpoint (e.g., Model Serving) and set decoding parameters appropriately.

Common misconceptions:
- Thinking the LLM runs first to “decide what to retrieve.” While some advanced agentic patterns do this, the canonical RAG pipeline embeds and retrieves before generation.
- Swapping embedding and vector search: vector search requires the query embedding; retrieval cannot happen before embedding.

Exam tips: When you see “typical RAG-enabled chatbot,” default to: Query -> Embed -> Retrieve -> Augment prompt -> Generate. If an option places vector search before embeddings or places prompt augmentation before retrieval, it’s almost certainly incorrect for standard RAG.
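To make the dependency order concrete, here is a minimal sketch of the four stages in Python. A bag-of-words `Counter` stands in for the embedding model, a sorted list stands in for the vector index, and `llm` is any caller-supplied function; these are assumptions for illustration, not Databricks Vector Search or Model Serving APIs.

```python
import math
from collections import Counter
from typing import Callable, List, Tuple

Index = List[Tuple[str, Counter]]  # (chunk text, chunk vector) pairs

def embed(text: str) -> Counter:
    """Stand-in embedding model: sparse bag-of-words counts (same model for chunks and queries)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def vector_search(query_vec: Counter, index: Index, k: int) -> List[str]:
    """Return the k chunk texts most similar to the query vector."""
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def build_prompt(question: str, chunks: List[str]) -> str:
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {question}\nAnswer:"

def answer(question: str, index: Index, llm: Callable[[str], str], k: int = 2) -> str:
    query_vec = embed(question)                  # 1) embedding model
    chunks = vector_search(query_vec, index, k)  # 2) vector search over the index
    prompt = build_prompt(question, chunks)      # 3) context-augmented prompt
    return llm(prompt)                           # 4) response-generating LLM
```

Each stage consumes the previous stage's output, which is why no other ordering can run: vector search needs `query_vec`, prompt assembly needs `chunks`, and generation needs `prompt`.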

A Generative AI Engineer interfaces with an LLM with prompt/response behavior that has been trained on customer calls inquiring about product availability. The LLM is designed to output only the term “In Stock” if the product is available or only the term “Out of Stock” if not. Which prompt will work to allow the engineer to respond to call classification labels correctly?

Incorrect. This prompt ties the label “In Stock” to the customer asking for a product, not to whether the product is actually available. In availability inquiries, the customer will almost always ask about a product, so this would systematically mislabel out-of-stock cases. It also fails to define the decision rule based on inventory status.

Incorrect. While JSON formatting is often useful for application integration, it violates the stated constraint that the LLM is designed to output only the term “In Stock” or only the term “Out of Stock.” Adding JSON introduces extra tokens and structure, likely breaking downstream expectations and evaluation that rely on exact label matching.

Incorrect. Like option A, it incorrectly maps the label to the act of asking for a product rather than the product’s availability. This would misclassify in-stock items as out-of-stock. It also provides no conditional logic about availability, so it cannot support correct classification behavior.

Correct. It clearly describes the input (customer call transcript about availability) and provides the exact conditional mapping: output “In Stock” if available, otherwise “Out of Stock.” This aligns with the model’s trained behavior and keeps the output constrained to the two valid labels without adding extra formatting that could cause noncompliant outputs.

Question analysis

Core Concept: This question tests prompt design for constrained classification outputs. When an LLM is fine-tuned (or instruction-tuned) to emit only specific labels (here, exactly “In Stock” or “Out of Stock”), the prompt must align with that contract: clearly define the task, the decision rule, and the allowed outputs, without introducing conflicting formatting requirements.

Why the Answer is Correct: Option D explicitly instructs the model to read a customer call transcript about product availability and to respond with exactly one of the two allowed labels based on availability. This matches the model’s trained prompt/response behavior and preserves the expected output space. It also avoids adding extra structure (like JSON) or additional text that could cause the model to deviate from the strict label-only requirement.

Key Features / Best Practices: A strong classification prompt should: 1) Provide the input type (call transcript) and intent (availability inquiry). 2) Specify the mapping from condition to label. 3) Constrain outputs to an enumerated set (two labels) and ideally require “output only the label.” In Databricks GenAI application patterns, this is a common approach when building evaluable, near-deterministic labelers for downstream pipelines (e.g., storing labels, computing accuracy, or triggering workflows). Keeping outputs simple also improves evaluation/monitoring because parsing is trivial and reduces formatting errors.

Common Misconceptions: Option B looks attractive because structured outputs (JSON) are often recommended. However, it conflicts with the requirement that the LLM output only “In Stock” or “Out of Stock.” If downstream systems or evaluation expect a single token/label, JSON will break parsing and metrics. Options A and C are flawed because they ignore the availability condition and instead tie the label to whether the customer asks for a product (which is always true in this scenario), producing incorrect classifications.

Exam Tips: When a question states the model is designed to output only specific labels, do not add extra formatting unless explicitly allowed. Prefer prompts that (a) restate the task, (b) list the allowed outputs, and (c) instruct “respond with only one of these labels.” This is especially important for automated evaluation and monitoring pipelines.
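A minimal sketch of the label-only contract in Python: a prompt template in the spirit of option D (the wording is illustrative, not the exam's exact text) plus a strict parser that accepts only the two allowed labels, so a JSON-wrapped or chatty reply fails loudly instead of being silently miscounted.

```python
ALLOWED_LABELS = ("In Stock", "Out of Stock")

# Illustrative wording only: names the input, the decision rule, and the two allowed outputs.
PROMPT_TEMPLATE = (
    "You will be given a transcript of a customer call asking about product availability.\n"
    'If the product is available, respond with only "In Stock". '
    'If it is not available, respond with only "Out of Stock". Do not add any other text.\n'
    "Transcript: {transcript}"
)

def build_prompt(transcript: str) -> str:
    return PROMPT_TEMPLATE.format(transcript=transcript)

def parse_label(raw_output: str) -> str:
    """Accept only an exact allowed label (ignoring surrounding whitespace); reject anything else."""
    label = raw_output.strip()
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected model output: {raw_output!r}")
    return label
```

Rejecting anything outside the enumerated set keeps accuracy metrics honest: a formatting drift shows up as an error rather than as a wrong label.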

When developing an LLM application, it’s crucial to ensure that the data used for training the model complies with licensing requirements to avoid legal risks. Which action is NOT appropriate to avoid legal risks?

Contacting the data curators before using the trained model is generally a more appropriate step than waiting until after use has begun. While simply notifying them is not the same as obtaining formal permission, early outreach can help clarify licensing terms, request authorization, and document due diligence before legal exposure occurs. This makes it a risk-reduction action rather than the clearly inappropriate choice. On exams, actions taken before use are usually safer than actions taken after use.

Using data that you personally created and that is completely original is an appropriate way to reduce licensing risk. If you truly own the content and it does not incorporate third-party protected material, you control the rights and can decide how it may be used or licensed. This is one of the safest sources of training data from a legal perspective. The main caveat is ensuring the material is genuinely original and not derived from copyrighted or restricted sources.

Using only data that is explicitly labeled with an open license and then following the license terms is a standard best practice for avoiding legal risk. Openly licensed data provides clear permission boundaries, and compliance with requirements such as attribution, redistribution limits, or commercial-use restrictions helps maintain lawful use. This approach supports governance, auditability, and defensible model development practices. It is therefore an appropriate action, not the one being asked for.

Reaching out to the data curators only after you have already started using the trained model is not an appropriate way to avoid legal risk. By that point, any unauthorized use of the data may already have occurred during collection, training, or deployment, so the legal exposure is already present. Proper risk mitigation requires confirming rights, permissions, or license terms before using the data, not after the fact. Post-use notification is not a substitute for prior authorization or documented licensing compliance.

Question analysis

Core concept: This question is about reducing legal risk when selecting training data for LLM applications by ensuring proper licensing, provenance, and permission before use.

Why correct: contacting data curators only after you have already begun using the trained model does not prevent legal risk, because any unauthorized use may already have occurred.

Key features: appropriate risk-reduction actions include using original data you own, using data with explicit open licenses, and obtaining clarification or permission before use when licensing is unclear.

Common misconceptions: many people assume that notifying a curator after the fact is enough, but retroactive communication does not substitute for prior rights verification or permission.

Exam tips: for licensing questions, prefer actions that establish rights before training or deployment, and avoid choices that rely on informal or delayed approval.

A Generative AI Engineer is developing a chatbot designed to assist users with insurance-related queries. The chatbot is built on a large language model (LLM) and is conversational. However, to maintain the chatbot’s focus and to comply with company policy, it must not provide responses to questions about politics. Instead, when presented with political inquiries, the chatbot should respond with a standard message: “Sorry, I cannot answer that. I am a chatbot that can only answer questions around insurance.” Which framework type should be implemented to solve this?

Safety guardrails are designed to prevent the model from generating harmful or unsafe content (e.g., hate, harassment, self-harm instructions, explicit sexual content, violence). While politics can be sensitive, the requirement here is not about preventing harm categories; it is about refusing an out-of-domain topic and keeping the assistant focused on insurance. That aligns better with contextual/topic guardrails than safety guardrails.

Security guardrails focus on protecting systems and data: preventing prompt injection, blocking requests for secrets/credentials, stopping data exfiltration, and enforcing access controls. The prompt does not mention sensitive data, system prompts, or unauthorized actions. The issue is topical scope control (no politics), which is not primarily a security concern, so this option is not the best fit.

Contextual guardrails constrain the assistant to an intended domain and conversation context. They commonly use topic/intent classification and allow/deny policies to detect out-of-scope queries (like politics) and return a standard refusal or redirection message. This exactly matches the requirement: the chatbot should only answer insurance questions and must refuse political inquiries with a fixed response.

Compliance guardrails address legal and regulatory requirements such as handling PII, retention, auditability, and industry regulations (e.g., HIPAA, GDPR). Although the question mentions “company policy,” the policy described is a scope restriction (no politics), not a regulatory compliance requirement. Therefore, a contextual guardrail is more appropriate than a compliance guardrail.

Question analysis

Core concept: This question tests understanding of LLM guardrails used in chatbot application development. Guardrails are controls applied to prompts, model inputs/outputs, and conversation state to keep responses within intended scope and policy. A key category is “contextual” or “topic” guardrails, which constrain what the assistant is allowed to discuss.

Why the answer is correct: The requirement is not primarily about harmful content, data exfiltration, or regulatory retention; it is about keeping the chatbot focused on insurance and refusing an out-of-scope topic (politics) with a fixed refusal message. That is a classic contextual guardrail: detect when the user’s query falls outside the allowed domain (insurance) and route to a refusal/deflection response. This can be implemented via topic classification (e.g., a lightweight classifier or LLM-based moderation prompt), allow/deny lists of topics, and response templates.

Key features / how it’s implemented: A contextual guardrail typically includes (1) intent/topic detection (insurance vs. politics), (2) a policy decision (block/redirect), and (3) a deterministic response (the standard message). In Databricks-centric architectures, this is often implemented in the application layer around the model call (pre-check the user message and/or post-check the model output), potentially using an LLM-as-judge or a smaller model for classification. Best practice is to make the refusal message consistent, avoid engaging on the blocked topic, and log guardrail triggers for monitoring.

Common misconceptions: “Safety guardrail” can sound right because politics can be sensitive, but safety guardrails usually focus on preventing harmful, hateful, self-harm, sexual, or violence content. “Security guardrail” relates to prompt injection, secrets, and data leakage. “Compliance guardrail” is about legal/regulatory requirements (PII handling, retention, audit). Here, the policy is simply: stay in-domain (insurance) and refuse out-of-domain (politics).

Exam tips: When the question emphasizes staying on-topic, domain restriction, or refusing out-of-scope requests with a standard response, choose contextual guardrails. If it emphasizes harmful content categories, choose safety. If it emphasizes data leakage/prompt injection, choose security. If it emphasizes regulatory obligations (PII, HIPAA, GDPR), choose compliance.
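The three parts of a contextual guardrail (topic detection, a block decision, and a fixed refusal) can be sketched in Python as a wrapper around the model call. The keyword deny-list is a deliberately simple stand-in and an assumption; a production system would more likely use a topic classifier or an LLM-based check, and `llm` is any caller-supplied function.

```python
import re
from typing import Callable

REFUSAL = "Sorry, I cannot answer that. I am a chatbot that can only answer questions around insurance."

# Hypothetical deny-list standing in for a real topic classifier.
POLITICAL_TERMS = {"election", "senator", "president", "political", "politics",
                   "democrat", "republican", "parliament", "vote"}

def is_political(text: str) -> bool:
    """Crude topic detector: True if any whole lowercase word is on the deny-list."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return bool(words & POLITICAL_TERMS)

def guarded_chat(message: str, llm: Callable[[str], str]) -> str:
    if is_political(message):  # pre-check on user input: the model is never called
        return REFUSAL         # deterministic, standard refusal message
    reply = llm(message)
    if is_political(reply):    # post-check on model output
        return REFUSAL
    return reply
```

Checking both the user message and the model reply mirrors the pre-check/post-check placement described above; logging each `REFUSAL` return would give the monitoring signal the analysis recommends.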

A Generative AI Engineer is responsible for developing a chatbot to enable their company’s internal HelpDesk Call Center team to more quickly find related tickets and provide resolution. While creating the GenAI application work breakdown tasks for this project, they realize they need to start planning which data sources (either Unity Catalog volume or Delta table) they could choose for this application. They have collected several candidate data sources for consideration:
- call_rep_history: a Delta table with primary keys representative_id, call_id. This table is maintained to calculate representatives’ call resolution from fields call_duration and call_start_time.
- transcript Volume: a Unity Catalog Volume of all recordings as *.wav files, but also a text transcript as *.txt files.
- call_cust_history: a Delta table with primary keys customer_id, call_id. This table is maintained to calculate how much internal customers use the HelpDesk to make sure that the charge back model is consistent with actual service use.
- call_detail: a Delta table that includes a snapshot of all call details updated hourly. It includes root_cause and resolution fields, but those fields may be empty for calls that are still active.
- maintenance_schedule: a Delta table that includes a listing of both HelpDesk application outages as well as planned upcoming maintenance downtimes.

They need sources that could add context to best identify ticket root cause and resolution. Which TWO sources do that? (Choose two.)

call_cust_history is designed for internal customer usage and chargeback consistency. Its primary keys and purpose indicate it tracks who used the HelpDesk and how often, not what the issue was or how it was resolved. It may help with reporting, prioritization, or cost allocation, but it provides little direct semantic or labeled information for root cause and resolution retrieval.

maintenance_schedule lists outages and planned maintenance windows. This can provide situational context (e.g., many tickets during an outage), but it typically does not contain per-ticket troubleshooting steps or resolutions. It is better used as a filter or enrichment feature (“was there an outage at that time?”) rather than a primary knowledge source for identifying root cause and resolution.

call_rep_history is maintained to calculate representative call resolution performance using call_duration and call_start_time. This is operational KPI data about agents, not the content of incidents. It lacks the descriptive problem statements and fix details needed for a chatbot to find related tickets and recommend resolutions, so it is not a strong RAG knowledge source.

call_detail is a strong source because it includes root_cause and resolution fields, which directly match the chatbot’s objective. Even though those fields may be empty for active calls, completed calls will provide labeled outcomes that can be retrieved as canonical answers or used to supervise evaluation. Hourly updates also support near-real-time relevance for the HelpDesk team.

transcript Volume contains recordings and, crucially, text transcripts. The transcript text is ideal for semantic search and RAG because it captures the user’s symptoms, environment, troubleshooting dialogue, and resolution steps in natural language. In Databricks, these files can be ingested from Unity Catalog Volumes, chunked, embedded, and indexed to retrieve similar historical interactions by meaning.

Question analysis

Core concept: This question tests data-source selection for a GenAI/RAG-style chatbot in Databricks. The goal is to choose sources that provide high-signal context for identifying ticket/call root cause and resolution. In practice, that means selecting data that either (1) explicitly contains root cause/resolution labels or (2) contains rich unstructured narrative describing the issue and fix, which can be embedded and retrieved.

Why the answer is correct: The best two sources are call_detail (D) and transcript Volume (E). call_detail contains structured fields root_cause and resolution, which are directly aligned to the chatbot’s objective: retrieving similar historical issues and recommended fixes. Even if those fields are sometimes empty for active calls, the table still provides the authoritative structured outcomes for completed calls and other call metadata useful for filtering (time, category, status). The transcript Volume provides the unstructured conversational content (audio plus text transcripts). The text transcripts are especially valuable for semantic search: they capture symptoms, troubleshooting steps, environment details, and user language that often do not fit neatly into structured columns. In Databricks, these transcripts are ideal for chunking, embedding, and indexing in a vector search index to retrieve “related tickets” by meaning rather than exact keywords.

Key features / best practices: Use Delta tables for reliable structured labels (root_cause/resolution) and Volumes for file-based unstructured corpora (transcripts). For RAG, prefer sources with high semantic density and direct relevance to the answer. Combine structured retrieval (filters on call_detail) with vector retrieval over transcript text. Consider incremental ingestion (hourly updates) and handling missing labels by excluding active calls or using status filters.

Common misconceptions: maintenance_schedule can seem relevant because outages cause incidents, but it is indirect context and not the primary source of root cause/resolution for individual tickets. call_rep_history and call_cust_history are operational/analytics tables (performance and chargeback) and do not describe what broke or how it was fixed.

Exam tips: When asked for “context to identify root cause and resolution,” prioritize (1) explicit resolution fields and (2) narrative text that describes the problem/steps. Metrics/usage tables rarely help RAG answer “how to fix it.”
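As a rough sketch of combining the two chosen sources, the Python below keeps only `call_detail` rows whose `root_cause` and `resolution` are filled in (skipping active calls) and appends the matching transcript text before embedding. Plain dictionaries stand in for the Delta table and the Volume's `*.txt` files, and joining transcripts to calls by `call_id` is an assumption about how the files are named.

```python
from typing import Dict, List, Optional

def build_documents(call_detail: List[Dict[str, Optional[str]]],
                    transcripts: Dict[str, str]) -> List[Dict[str, str]]:
    """Build one retrievable document per resolved call from call_detail plus its transcript."""
    documents = []
    for row in call_detail:
        root_cause = (row.get("root_cause") or "").strip()
        resolution = (row.get("resolution") or "").strip()
        if not root_cause or not resolution:
            continue  # active calls: root cause / resolution not filled in yet
        text = f"Root cause: {root_cause}\nResolution: {resolution}"
        transcript = transcripts.get(row["call_id"])  # assumed join key
        if transcript:
            text += f"\nTranscript: {transcript}"
        documents.append({"call_id": row["call_id"], "text": text})
    return documents
```

Keeping `call_id` on each document leaves room to filter or join back to other `call_detail` metadata at retrieval time.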

A Generative AI Engineer is creating an LLM-based application. The documents for its retriever have been chunked to a maximum of 512 tokens each. The Generative AI Engineer knows that cost and latency are more important than quality for this application. They have several context length levels to choose from. Which will fulfill their need?

Option A is the smallest context window that can realistically handle a 512-token retrieved chunk while still leaving at least minimal room for prompt overhead. In an LLM application, the input is not just the chunk itself; it also includes the user query, system instructions, separators, and any retrieval template text. Because cost and latency are more important than quality, the best choice is the smallest viable context length rather than a much larger one. Although A is slightly larger than D in model size and embedding dimension, it is still dramatically smaller and cheaper than B or C while remaining functionally usable.

Option B provides far more context than needed for a single 512-token chunk and therefore does not align with the stated priority of minimizing cost and latency. Its 11GB smallest model size is substantially larger than A, which implies higher serving cost, greater memory requirements, and slower inference. The much larger embedding dimension of 2560 also increases vector storage and retrieval computation. This option would only make sense if the application needed to include many chunks or much longer prompts.

Option C is massively oversized for the described use case, with a 32768-token context window that is unnecessary for 512-token chunks. The associated 14GB model and 4096-dimensional embeddings would significantly increase infrastructure cost, latency, and index size. Nothing in the prompt suggests a need for very long-context reasoning or many retrieved passages in one request. As a result, this option is the least aligned with the requirement to prioritize efficiency over quality.

Option D appears attractive because its context length matches the chunk size exactly and it has the smallest model and embedding dimension. However, a 512-token context window cannot practically fit a 512-token chunk plus the user question, system prompt, delimiters, and any other prompt formatting. That means it does not fully satisfy the functional requirement of running the application with retrieved context. The smallest option that actually leaves room for minimal overhead is A, so D is too tight despite its lower cost profile.

Question analysis

Core concept: choose the smallest context window that can fit the retrieved chunk and still leave room for the rest of the prompt, because smaller context windows and models generally reduce inference cost and latency.

Why correct: with documents chunked to a maximum of 512 tokens, a model with exactly 512 tokens of context cannot practically accept the chunk plus the user question and prompt scaffolding.

Key features: option A provides the smallest usable context above 512 and is still far smaller and cheaper than the larger 2048 and 32768 context alternatives.

Common misconceptions: matching context length exactly to chunk size is not enough in real prompting, because every request includes additional tokens beyond the retrieved text.

Exam tips: when optimizing for cost and latency, pick the smallest option that still satisfies the full prompt requirement, not just the chunk size in isolation.
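The selection rule can be written as a short budget check. This Python sketch assumes the prompt overhead (question, instructions, delimiters) can be estimated as a fixed token count; the function name and the candidate lengths in the example below are hypothetical.

```python
from typing import Iterable, Optional

def smallest_viable_context(options: Iterable[int], chunk_tokens: int,
                            overhead_tokens: int) -> Optional[int]:
    """Return the smallest context length that fits one chunk plus prompt overhead, or None."""
    needed = chunk_tokens + overhead_tokens
    viable = [length for length in options if length >= needed]
    return min(viable) if viable else None
```

For example, `smallest_viable_context([512, 1024, 4096], chunk_tokens=512, overhead_tokens=100)` returns `1024`: a 512-token window is ruled out as soon as the overhead is non-zero, and anything larger than the smallest viable window only adds cost and latency.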

A Generative AI Engineer is designing a RAG application for answering user questions on technical regulations as they learn a new sport. What are the steps needed to build this RAG application and deploy it?

Incorrect ordering: it places “Evaluate model” before “LLM generates a response.” In RAG, evaluation typically measures the quality of generated answers (and their grounding in retrieved context), so you need the generation step before you can evaluate end-to-end performance. The rest of the flow (ingest → index → query → retrieve → generate → deploy) is mostly correct, but the evaluation placement makes this option wrong.

This option gives the canonical end-to-end RAG workflow: ingest documents, index them in Vector Search, accept a user query, retrieve relevant context, generate an answer with the LLM, evaluate the resulting system, and then deploy it with Model Serving. That ordering reflects the two major phases of RAG systems: offline corpus preparation and online retrieval-augmented inference. It also correctly places retrieval before generation, which is essential because the LLM needs grounded context to answer technical regulation questions accurately. After the pipeline is assembled, evaluation is performed to assess answer quality and retrieval effectiveness before promoting the application to production.

Incomplete: it only covers ingestion, indexing, evaluation, and deployment, but omits the core online RAG steps (user query, retrieval, and generation). A RAG application must include query-time retrieval from Vector Search and LLM response generation. You also cannot meaningfully evaluate a RAG QA system without running the retrieval+generation pipeline on test questions.

Incorrect sequence: it starts with user queries before ingestion and indexing. In a real RAG system, the knowledge base must be ingested, embedded, and indexed ahead of time so retrieval can work at inference. While documents can be updated incrementally, the baseline pipeline requires the vector index to exist before users can query and retrieve relevant context.

Problem Analysis

Core concept: This question tests the end-to-end lifecycle of a Retrieval-Augmented Generation (RAG) application on Databricks. The lifecycle covers preparing a knowledge corpus, indexing it in Databricks Vector Search, performing retrieval at query time to ground an LLM’s response, and then evaluating and deploying the final chain (retriever + prompt + model) using Databricks Model Serving.

Why the answer is correct: A correct RAG flow has two phases: (1) offline/ingestion: ingest documents, chunk/clean, embed, and index into a vector store; and (2) online/inference: user query → retrieve relevant chunks → provide them as context to the LLM → generate an answer. Evaluation should occur after you have a working end-to-end pipeline (retrieval + generation), because you need to measure answer quality (groundedness, relevance, correctness) and retrieval quality (recall/precision) on representative questions. Option B places evaluation after the LLM generates responses, which matches how RAG systems are evaluated in practice.

Key features / best practices (Databricks-specific):
- Ingestion: use Auto Loader / Delta for scalable ingestion; chunk documents appropriately for embedding.
- Indexing: create embeddings (e.g., via foundation model endpoints or embedding models) and store them in a Delta table; build a Databricks Vector Search index (managed or self-managed) for similarity search.
- Retrieval: at query time, embed the user question, retrieve top-k chunks, optionally apply filters (metadata, regulation version), and pass retrieved text into the prompt.
- Evaluation: use offline evaluation sets and metrics (answer correctness, faithfulness/groundedness, context relevance). Track results with MLflow to compare prompt/model/index changes.
- Deployment: package the RAG chain as a model (often via MLflow) and deploy with Databricks Model Serving for low-latency, scalable endpoints.

Common misconceptions: A common trap is placing evaluation before generation (you can’t evaluate answer quality without answers) or omitting the query-time retrieval step. Another confusion is thinking “LLM retrieves documents” directly; in RAG, retrieval is performed by the application using Vector Search, then the LLM is conditioned on retrieved context.

Exam tips: Look for the canonical ordering: ingest/index first; query-time retrieval then generation; evaluation after end-to-end behavior exists; deploy last. If evaluation appears before generation, it’s usually incorrect for RAG application evaluation.
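The ordering above can be illustrated with a toy, in-memory pipeline. This is a minimal sketch, not a Databricks implementation. The bag-of-words "embedding" stands in for a real embedding model and Vector Search index. `generate` is a hypothetical stand-in for an LLM call, and the sample documents are made up. The sketch shows the index existing before any query, retrieval running before generation, and evaluation scoring the generated answers. Deployment via Model Serving is out of scope here.

```python
import math
from collections import Counter


def embed(text):
    # Toy bag-of-words "embedding"; a real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Offline phase: ingest documents and build the index ahead of time.
def build_index(docs):
    return [(doc, embed(doc)) for doc in docs]


# Online phase: query -> retrieve -> generate.
def retrieve(index, query, k=1):
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def generate(query, context):
    # Hypothetical stand-in for an LLM call: it just echoes the grounding context.
    return f"Based on the rules: {context[0]}"


# Evaluation scores generated answers, so it can only run after generation exists.
def evaluate(index, eval_set):
    hits = 0
    for question, expected_phrase in eval_set:
        answer = generate(question, retrieve(index, question))
        hits += expected_phrase.lower() in answer.lower()
    return hits / len(eval_set)


docs = [
    "An offside position is judged when the ball is played",
    "A match has two halves of 45 minutes",
]
index = build_index(docs)  # must exist before any user query
eval_set = [
    ("When is offside judged", "offside position"),
    ("How many minutes are in each of the two halves", "45 minutes"),
]
print(evaluate(index, eval_set))  # 1.0
```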

A Generative AI Engineer just deployed an LLM application at a digital marketing company that assists with answering customer service inquiries. Which metric should they monitor for their customer service LLM application in production?

This is the best answer because it is a true production metric that measures live application throughput. In a customer service setting, monitoring how many inquiries are processed over time helps determine whether the system can keep up with demand and meet service expectations. Changes in throughput can reveal operational problems such as slower model inference, retrieval delays, rate limiting, or infrastructure saturation. It is also useful for capacity planning, autoscaling decisions, and identifying regressions after deployment changes.

Energy usage per query can be relevant for sustainability reporting or cost optimization, but it is not the most essential metric for monitoring a customer service LLM in production. Operational health typically prioritizes user-facing performance (latency, throughput, error rates) and quality/safety outcomes. Energy is also harder to measure accurately per request in shared infrastructure.

Perplexity is an offline training/evaluation metric that measures how well a language model predicts tokens on a dataset. It does not reliably correlate with task success for customer service, especially in RAG or tool-using applications. Production monitoring should focus on runtime behavior and user outcomes rather than training-time perplexity scores.

HuggingFace leaderboard values are generic benchmark results for base models and are useful during model selection, not production monitoring. They do not capture your specific prompts, retrieval data, guardrails, or customer service domain constraints. Production issues (latency spikes, failures, hallucinations) won’t be detected by referencing static leaderboard scores.

Problem Analysis

Core concept: This question tests production monitoring for an LLM application (LLMOps). In Databricks-oriented GenAI deployments, monitoring focuses on both model quality (e.g., relevance, safety) and system/service health (e.g., latency, throughput, errors, cost). For a customer service assistant, operational metrics that reflect user experience and capacity are essential.

Why the answer is correct: The “number of customer inquiries processed per unit of time” is a throughput metric. In production, throughput directly indicates whether the application is meeting demand, scaling appropriately, and maintaining service levels during traffic spikes. It also helps detect regressions (e.g., a new prompt or retrieval configuration increases token usage/latency, reducing throughput) and supports capacity planning. For customer service, the business outcome is timely responses, and throughput is a primary indicator of whether the system can handle incoming volume.

Key features / best practices: In practice, you monitor throughput alongside latency (p50/p95), error rates, timeouts, queue depth, and cost per request. On Databricks, these are commonly captured via model serving endpoint metrics, application logs, and tracing/observability integrations. You also pair operational metrics with quality/safety monitoring (e.g., user feedback, escalation rate, hallucination indicators, toxicity/PII flags), but the question asks for a single metric to monitor, and throughput is the most directly applicable among the options.

Common misconceptions: Perplexity and leaderboard scores are offline training/benchmark signals and do not reflect real production performance for a specific customer service workflow. Energy usage per query can matter for sustainability/cost, but it’s rarely the primary production KPI for customer service responsiveness and is not typically the first-line metric for LLM app health.

Exam tips: When asked about “monitor in production,” prioritize runtime service metrics (throughput, latency, errors) and application-level KPIs (task success, user satisfaction). Deprioritize offline training metrics and generic benchmark/leaderboard numbers unless the question explicitly asks about model selection or training evaluation.
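A throughput metric like the one described above can be computed from request completion timestamps. This is a minimal sketch that assumes you already have one timestamp per processed inquiry, for example from serving logs. The `baseline` and `tolerance` parameters of `flag_drops` are hypothetical alerting knobs, not a Databricks feature. Minutes with no traffic are filled in as zero so that outages show up as drops.

```python
from collections import Counter
from datetime import datetime, timedelta


def throughput_per_minute(timestamps):
    """Count processed inquiries per minute, including empty minutes as 0."""
    buckets = Counter(ts.replace(second=0, microsecond=0) for ts in timestamps)
    if not buckets:
        return {}
    result = {}
    minute, end = min(buckets), max(buckets)
    while minute <= end:
        result[minute] = buckets.get(minute, 0)
        minute += timedelta(minutes=1)
    return result


def flag_drops(per_minute, baseline, tolerance=0.5):
    """Return minutes whose throughput fell below tolerance * baseline."""
    return [m for m, count in per_minute.items() if count < baseline * tolerance]


sample = [
    datetime(2025, 1, 1, 10, 0, 5),
    datetime(2025, 1, 1, 10, 0, 40),
    datetime(2025, 1, 1, 10, 2, 10),
]
per_minute = throughput_per_minute(sample)
print(list(per_minute.values()))  # [2, 0, 1]
```

In practice the same aggregation would usually run over logged requests in a table rather than an in-memory list.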

A Generative AI Engineer is building a Generative AI system that suggests the best-matched team member for newly scoped projects. The team member is selected from a very large team. The match should be based on project date availability and how well their employee profile matches the project scope. Both the employee profile and project scope are unstructured text. How should the Generative AI Engineer architect their system?

This inverts the retrieval problem: it embeds project scopes and queries with employee profiles. The task is to find employees for a new project, so the natural index is employee profiles and the query is the project scope. Also, availability filtering is not integrated into the retrieval step here, and embedding “all project scopes” is unnecessary when projects are transient compared to a stable employee corpus.

Keyword extraction plus keyword matching is brittle for unstructured text. It misses semantic similarity (synonyms, related concepts, implicit requirements) and tends to underperform on real-world profiles and scopes. It also scales poorly if you iterate through many profiles. Modern Databricks GenAI design favors embeddings/vector search for semantic retrieval rather than LLM-extracted keywords and string matching.

A custom similarity tool that scores each employee profile against the project scope can work conceptually, but iterating through a very large team is inefficient and expensive. It ignores the main advantage of vector databases: approximate nearest neighbor search to avoid brute-force comparisons. This option lacks a vector index/retrieval step, making it a poor architecture for scale and latency.

This is the recommended architecture: use a deterministic tool to filter by date availability, then perform semantic retrieval by embedding employee profiles into a vector store and querying with the project scope. Using filtering ensures only available employees are considered during retrieval, which improves relevance and efficiency. This aligns with Databricks Vector Search best practices for large-scale unstructured matching with structured constraints.

Problem Analysis

Core concept: This question tests retrieval-augmented matching for unstructured text at scale, combined with structured constraints (date availability). In Databricks GenAI application design, the standard pattern is: (1) apply hard filters/constraints using deterministic tools (availability), then (2) use embeddings + vector search to semantically retrieve the best matches from a large corpus (employee profiles), optionally with metadata filtering.

Why D is correct: Employee profiles and project scopes are unstructured text, so semantic similarity via embeddings is the most robust way to match “fit” beyond exact keywords. Because the team is very large, iterating through all employees and scoring each one is inefficient and does not leverage vector indexes. The system should first call a tool that returns available employees for the project dates (structured query against a schedule/HR system). Then, embed employee profiles into a vector store (Databricks Vector Search) and query using the project scope embedding. Critically, use filtering (metadata filters or pre-filtered candidate IDs) so retrieval only considers available employees. This yields scalable, low-latency top-k candidates that can then be optionally re-ranked or summarized by an LLM.

Key features / best practices:
- Use Databricks Vector Search with an embedding model (e.g., foundation model embeddings) to index employee profile chunks.
- Store metadata alongside vectors (employee_id, role, skills, location, and availability flags or a join key) to enable filtered retrieval.
- Apply “hard constraints first” (availability) to reduce the search space, then semantic retrieval for relevance.
- Optionally add a second-stage re-ranker (LLM or cross-encoder) on the top-k results for higher precision, but the core architecture remains filtered vector retrieval.

Common misconceptions:
- Keyword extraction/matching (B) seems simpler but fails on synonyms, nuanced scope descriptions, and varied writing styles.
- Brute-force similarity scoring over all employees (C) is conceptually valid but not scalable for a very large team.
- Embedding project scopes and querying with employee profiles (A) reverses the typical direction and doesn’t naturally support “find best employee for a project”; it also complicates filtering by availability.

Exam tips: When you see “very large corpus” + “unstructured text matching,” default to embeddings + vector search. When you also see “date availability” or other structured constraints, use tools/filters to enforce constraints, then perform vector retrieval with metadata filtering to get the best candidates efficiently.
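The "hard constraints first, then semantic retrieval" pattern can be sketched in plain Python. This is a toy under stated assumptions. The calendar, names, and 3-dimensional vectors are made up, and the linear scan over candidates stands in for an approximate nearest neighbor search in a vector index. In Databricks Vector Search, the availability step would typically be expressed as a metadata filter on the query; check the Vector Search documentation for the exact filter syntax.

```python
import math
from datetime import date


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def available_employees(calendar, start, end):
    """Deterministic 'tool': IDs with no booking overlapping [start, end]."""
    return {
        emp for emp, bookings in calendar.items()
        if all(b_end < start or b_start > end for b_start, b_end in bookings)
    }


def match_employees(profile_vectors, scope_vector, allowed_ids, k=2):
    """Rank only the pre-filtered employees by similarity to the project scope."""
    candidates = [
        (emp, cosine(vec, scope_vector))
        for emp, vec in profile_vectors.items()
        if emp in allowed_ids  # the filter is applied before ranking
    ]
    candidates.sort(key=lambda pair: pair[1], reverse=True)
    return [emp for emp, _ in candidates[:k]]


# Made-up data: "ana" is the closest semantic match but is booked.
calendar = {
    "ana": [(date(2025, 3, 10), date(2025, 3, 20))],
    "ben": [(date(2025, 1, 1), date(2025, 1, 31))],
    "cai": [],
}
profiles = {"ana": [0.9, 0.1, 0.0], "ben": [0.8, 0.2, 0.1], "cai": [0.1, 0.9, 0.2]}
scope = [1.0, 0.0, 0.0]
available = available_employees(calendar, date(2025, 3, 1), date(2025, 3, 15))
print(match_employees(profiles, scope, available, k=1))  # ['ben']
```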

A Generative AI Engineer has a provisioned throughput model serving endpoint as part of a RAG application and would like to monitor the serving endpoint’s incoming requests and outgoing responses. The current approach is to include a micro-service in between the endpoint and the user interface to write logs to a remote server. Which Databricks feature should they use instead which will perform the same task?

Vector Search is used to index embeddings and retrieve semantically similar content for the retrieval step of a RAG workflow. It helps supply relevant context to the model, but it does not log serving endpoint requests or responses. There is no built-in request/response observability function in Vector Search that replaces a logging microservice. Therefore, it is not the correct feature for monitoring endpoint traffic.

Lakeview is Databricks' dashboarding and visualization capability for presenting data and metrics. It can be used to build dashboards on top of inference logs after those logs have been collected, but it does not itself capture model serving traffic. The question asks for the feature that performs the logging task, not the feature that visualizes the results. As a result, Lakeview is not the right answer.

DBSQL is the SQL analytics layer used to query and analyze data stored in Databricks. It is useful for examining inference logs once they have been written to tables, but it does not natively intercept or persist requests and responses from model serving endpoints. In other words, DBSQL is an analysis tool rather than a traffic-capture mechanism. That makes it unsuitable as a replacement for the described microservice.

Inference Tables are the Databricks-native feature for automatically capturing model serving endpoint traffic, including incoming requests, outgoing responses, and associated metadata. They provide a structured, queryable record of inference activity without requiring a custom proxy or microservice to intercept traffic. For a provisioned throughput endpoint in a RAG application, this is exactly the built-in mechanism used for observability, debugging, auditing, and offline evaluation. Because the question asks for a feature that performs the same request/response logging task, Inference Tables are the best fit.

Problem Analysis

Core Concept: This question tests Databricks monitoring and observability for Model Serving endpoints in GenAI/RAG systems, specifically capturing request/response payloads for later analysis, debugging, and evaluation.

Why the Answer is Correct: Inference Tables are the Databricks feature designed to log and persist model serving traffic (incoming requests and outgoing responses) from a serving endpoint. For a provisioned throughput endpoint used in a RAG application, you often need an auditable record of prompts, retrieved context (if included), model outputs, metadata (timestamps, latency, status), and potentially user/session identifiers (when appropriate). Inference Tables store this inference data in structured, queryable Delta tables so you can monitor behavior over time, investigate incidents, and run offline evaluations (quality, safety, drift-like changes in outputs).

Key Features / Best Practices:
- Centralized logging of inference inputs/outputs from Model Serving endpoints into managed tables.
- Enables downstream monitoring workflows: dashboards, alerting, error analysis, and evaluation pipelines.
- Supports governance and compliance patterns by persisting inference traces in a controlled data plane (with access controls) rather than relying only on transient logs.
- Common practice: pair Inference Tables with evaluation tooling (e.g., offline scoring, human review sampling) to continuously improve RAG prompts, retrieval settings, and model versions.

Common Misconceptions:
- Vector Search is essential for retrieval in RAG, but it does not monitor serving traffic.
- Lakeview dashboards can visualize inference logs after they have been collected, but they do not capture requests and responses themselves.
- DBSQL can query inference logs once they are written to tables, but it is an analysis layer, not a traffic-capture mechanism.

Exam Tips: When you see “monitor incoming requests and outgoing responses” for a Databricks Model Serving endpoint, think “Inference Tables” (traffic logging to Delta tables). If the question instead focuses on retrieval quality/latency, you might consider Vector Search metrics, but request/response capture is the key phrase pointing to Inference Tables.
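Once requests and responses are captured, the downstream monitoring described above is ordinary data analysis. The following is a minimal sketch in plain Python over rows you would first read from the table. The field names `status_code` and `execution_time_ms` are assumptions about the inference table schema, so verify them against your own table. Treating any non-200 status as an error is also a simplification.

```python
import math


def summarize_inference_log(rows):
    """Summarize logged requests: volume, error rate, and p95 latency.

    Each row is assumed to carry `status_code` and `execution_time_ms`
    fields (hypothetical names; check your inference table's schema).
    """
    if not rows:
        return {"requests": 0, "error_rate": 0.0, "p95_latency_ms": None}
    latencies = sorted(row["execution_time_ms"] for row in rows)
    # Simplification: any status other than 200 counts as an error.
    errors = sum(1 for row in rows if row["status_code"] != 200)
    # Nearest-rank percentile: the smallest latency covering 95% of requests.
    rank = max(1, math.ceil(len(latencies) * 95 / 100))
    return {
        "requests": len(rows),
        "error_rate": errors / len(rows),
        "p95_latency_ms": latencies[rank - 1],
    }
```

On Databricks, the same aggregation would more likely be written in SQL or PySpark directly against the inference table. The plain-Python version just makes the logic explicit.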

A Generative AI Engineer is building a system that will answer questions about the latest stock news articles. Which will NOT help ensure the outputs are relevant to financial news?

A finance-specific guardrail framework can improve relevance by constraining outputs to acceptable financial-domain behavior and policies. For example, it can require the model to stay within finance-related topics, avoid unsupported claims, and enforce use of approved financial sources or response patterns. Although guardrails are often associated with safety and compliance, domain-tailored guardrails also help keep responses aligned to the intended subject matter. That makes this a helpful control rather than the best answer.

Increasing compute can support more sophisticated relevance-improving techniques, even if the option mentions speed. Additional compute may allow better retrieval pipelines, stronger reranking models, larger context windows, or more frequent refresh of the latest stock news corpus. While compute alone does not guarantee relevance, it can enable systems that perform deeper relevance analysis and process more context. Because it can indirectly help relevance, it is not the clearest choice for what will NOT help.

A profanity filter is designed to detect and block offensive or inappropriate language. That function improves safety and user experience, but it does not help the system determine whether an answer is grounded in current financial news or relevant to a stock-related question. A response can be completely profanity-free and still be off-topic, hallucinated, or unrelated to finance. Therefore, this option does not help ensure relevance to financial news.

Manual review is a direct quality-control mechanism that can catch irrelevant, misleading, or non-financial outputs before they reach users. In a high-stakes domain like finance, human reviewers can verify that answers are grounded in the latest stock news and correct problematic responses. This clearly improves the relevance and reliability of outputs, even though it may reduce scalability. As a result, it is not the correct answer.

Problem Analysis

Core concept: The question asks which control will NOT help ensure that a stock-news question answering system produces outputs relevant to financial news. Relevance in this context is primarily improved by grounding the model in current financial articles, applying domain-specific constraints, and using human or automated quality checks to catch off-topic responses.

Why correct: A profanity filter screens for offensive language, which is a safety and moderation mechanism rather than a relevance mechanism. It may improve appropriateness of tone, but it does not make the answer more likely to be about stock news or aligned to financial content.

Key features: Controls that help relevance include domain-specific guardrails, retrieval over fresh financial sources, metadata filtering, reranking, and manual review. These mechanisms either constrain the model to finance-related content or detect and correct irrelevant outputs before users see them.

Common misconceptions: It is easy to group all guardrails together and assume they all improve relevance equally. In reality, some guardrails target safety, toxicity, or compliance rather than topical alignment, and profanity filtering is a classic example of a safety-only control.

Exam tips: When asked about relevance, focus on mechanisms that improve topical grounding, domain alignment, or quality review. Eliminate options that only address tone, toxicity, or general moderation unless the question is specifically about safety rather than relevance.
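A toy example shows why a profanity filter and a relevance check answer different questions. This is a minimal sketch. Both word lists are hypothetical, and keyword overlap is only a crude stand-in for real relevance controls such as retrieval grounding, domain guardrails, or an LLM judge. The point is that an off-topic answer passes the profanity filter while failing the relevance check.

```python
import re

PROFANITY = {"damn", "crap"}  # hypothetical blocklist
FINANCE_TERMS = {"stock", "shares", "earnings", "revenue", "dividend", "market", "price"}  # hypothetical


def tokens(text):
    return set(re.findall(r"[a-z']+", text.lower()))


def passes_profanity_filter(answer):
    """Safety check: True if no blocklisted word appears."""
    return not (tokens(answer) & PROFANITY)


def looks_finance_relevant(answer, min_hits=2):
    """Crude topical check: True if enough finance terms appear."""
    return len(tokens(answer) & FINANCE_TERMS) >= min_hits


off_topic = "The best pasta sauce uses fresh basil and garlic."
on_topic = "Shares of the company rose after its earnings beat forecasts."
print(passes_profanity_filter(off_topic), looks_finance_relevant(off_topic))  # True False
print(looks_finance_relevant(on_topic))  # True
```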

A Generative AI Engineer has been asked to build an LLM-based question-answering application. The application should take into account new documents that are frequently published. The engineer wants to build this application with the least cost and least development effort and have it operate at the lowest cost possible. Which combination of chaining components and configuration meets these requirements?

Correct. This is the classic RAG pipeline: a retriever pulls relevant passages from an updatable corpus (often via vector search), inserts them into a prompt, and the LLM answers using that context. It minimizes development effort (standard components) and cost (no repeated training), while staying current by refreshing the index as new documents arrive.

Incorrect. Frequently retraining or fine-tuning an LLM to incorporate new documents is expensive, slow, and operationally heavy. It also risks catastrophic forgetting and requires repeated evaluation. For rapidly changing knowledge, best practice is to keep the knowledge external and use retrieval at inference time (RAG) rather than continual model updates.

Incorrect. Prompt engineering plus an LLM alone cannot reliably answer questions about newly published documents if that information is not in the model’s training data. Without retrieval (or another external knowledge mechanism), the model will guess or hallucinate. This may be low effort initially, but it fails the requirement to take newly published documents into account.

Incorrect for the stated constraints. Adding an agent and a fine-tuned LLM increases complexity, cost, and maintenance. Agents are valuable when the system must choose among tools, perform multi-step reasoning, or execute actions. For straightforward document Q&A with frequently updated content, a simple retriever + prompt + LLM is the lowest-cost, lowest-effort approach.

Problem Analysis

Core concept: This question tests the recommended architecture for keeping an LLM-based Q&A app up to date with frequently changing documents at the lowest cost and development effort. The key pattern is Retrieval-Augmented Generation (RAG): use a retriever over an external knowledge store (often a vector index) and inject retrieved context into the prompt sent to an LLM.

Why the answer is correct: Option A describes the standard RAG chain: (1) user question, (2) retriever fetches relevant passages from the latest document corpus, (3) those passages are inserted into a prompt template, and (4) the LLM generates an answer grounded in that context. This meets the “frequently published documents” requirement because you update the index/knowledge store as documents arrive, without retraining or fine-tuning the LLM. It also minimizes cost: inference-time retrieval plus prompting is typically far cheaper than repeated fine-tuning. It reduces engineering effort because it uses well-established components (prompt template + retriever + LLM) supported by common frameworks and Databricks patterns.

Key features / best practices:
- Keep documents in a managed store (e.g., Delta) and build embeddings + a vector search index; refresh incrementally as new docs land.
- Use chunking, metadata filters, and top-k retrieval to control context size and token cost.
- Grounding instructions in the prompt (cite sources, answer only from context) improve factuality.
- This design separates “knowledge updates” (index refresh) from “model updates” (rare), which is operationally efficient.

Common misconceptions: A frequent mistake is assuming the LLM must be retrained whenever documents change (Option B). Another is believing prompt engineering alone is sufficient (Option C), which fails when the model lacks the new information. Option D adds an agent and fine-tuning, increasing complexity and cost; agents are useful for tool orchestration, but not required for basic document Q&A.

Exam tips: When you see “new documents frequently published” and “least cost/effort,” default to RAG (retriever + prompt + LLM). Fine-tuning is for changing behavior/style or domain adaptation, not for continuously updating factual knowledge. Agents are for multi-step tool use, not the minimal solution for document-grounded Q&A.
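The separation of knowledge updates from model updates can be sketched as a prompt template plus a retriever whose index is refreshed in place. This is a minimal illustration. `KeywordRetriever` is a hypothetical toy that stands in for a vector index such as Databricks Vector Search, and the sample document is made up. Sending the built prompt to an LLM is left out. Adding a document changes what the prompt contains without touching the model.

```python
from string import Template

PROMPT = Template(
    "Answer using only the context below. If the answer is not there, say you don't know.\n"
    "Context:\n$context\n\nQuestion: $question\nAnswer:"
)


class KeywordRetriever:
    """Toy retriever; a real app would query a vector index instead."""

    def __init__(self):
        self.docs = []

    def add_documents(self, docs):
        # Knowledge update = index refresh; the LLM itself is never retrained.
        self.docs.extend(docs)

    def retrieve(self, question, k=2):
        q = set(question.lower().split())
        scored = sorted(self.docs, key=lambda d: len(q & set(d.lower().split())), reverse=True)
        return [d for d in scored[:k] if q & set(d.lower().split())]


def build_prompt(retriever, question):
    context = "\n".join(retriever.retrieve(question)) or "(no relevant documents)"
    return PROMPT.substitute(context=context, question=question)


retriever = KeywordRetriever()
question = "when is the q3 release date"
print(build_prompt(retriever, question))  # context is "(no relevant documents)"
retriever.add_documents(["The Q3 release date is October 14"])  # made-up new document
print(build_prompt(retriever, question))  # context now includes the new document
```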

A Generative AI Engineer is using the code below to test setting up a vector store:

from databricks.vector_search.client import VectorSearchClient

vsc = VectorSearchClient()

vsc.create_endpoint(
    name="vector_search_test",
    endpoint_type="STANDARD"
)

Assuming they intend to use Databricks managed embeddings with the default embedding model, what should be the next logical function call?

vsc.get_index() retrieves a handle/metadata for an index that already exists. It’s useful after creation (or when reusing an existing index) to perform operations like querying. It is not the next logical step immediately after creating a new endpoint when the goal is to set up a new vector store, because no index has been created yet.

vsc.create_delta_sync_index() is the correct next step for Databricks-managed embeddings with the default embedding model. A Delta Sync index is built from a Delta table and Databricks can automatically compute embeddings from a text column and keep the index synchronized with table updates. This is the most common managed pattern for RAG on Databricks.

`vsc.create_direct_access_index()` is not the best answer here because it represents a different ingestion model. With direct access, you create the index and then write records directly into it, which is more appropriate when your application manages ingestion explicitly rather than syncing from a Delta table. The question asks for the next logical call when setting up a managed vector store, and the standard Databricks workflow for that is a Delta Sync index. While direct access can support managed embeddings in some configurations, it is not the most natural choice implied by this prompt.

vsc.similarity_search() is a query-time operation used after an index exists and has data/embeddings available. Immediately after creating only the endpoint, there is nothing to search yet. Similarity search is part of application development/inference, not the setup step for creating the managed vector store.

Problem Analysis

Core Concept: This question tests the normal Databricks Vector Search setup sequence after creating an endpoint. Once an endpoint exists, the next step is typically to create an index on that endpoint. If the intended pattern is a managed vector store using Databricks-managed embeddings and the default embedding model, the common next call is to create a Delta Sync index backed by a Delta table.

Why Correct: `vsc.create_delta_sync_index()` is the standard follow-up when you want Databricks to manage indexing from table data and handle embedding generation from text columns. In that workflow, you specify the source Delta table, primary key, and embedding source column, and Databricks builds and maintains the index. This fits the phrase “setting up a vector store” better than querying or retrieving an existing index.

Key Features: A Delta Sync index continuously reflects data from a Delta table and is commonly used for RAG pipelines where documents live in Lakehouse storage. It supports managed embedding generation and synchronization, reducing operational overhead. By contrast, direct access indexes are more manual because you write records directly into the index rather than syncing from Delta.

Common Misconceptions: `create_direct_access_index()` is not inherently incompatible with managed embeddings, so it is not wrong for that reason alone. However, it is a different ingestion pattern intended for direct writes to the index rather than the usual table-backed sync flow implied by “set up a vector store” after endpoint creation. `get_index()` and `similarity_search()` happen later in the lifecycle, after an index already exists.

Exam Tips: For Databricks Vector Search questions, first identify the lifecycle stage: endpoint creation, index creation, ingestion/sync, or querying. After `create_endpoint()`, the next setup action is usually one of the index creation methods. If the scenario implies a Delta-table-backed managed store, prefer `create_delta_sync_index()`; if it implies direct record insertion, consider `create_direct_access_index()`.
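The Delta Sync call described above can be sketched as a small wrapper that takes the client as a parameter, so the logic can be read and tested without a workspace. This is a sketch, not the definitive setup. The keyword arguments follow the `databricks-vectorsearch` SDK's `create_delta_sync_index` as commonly documented. The embedding endpoint name `databricks-gte-large-en` is an assumption; substitute whichever embedding model your workspace treats as the default. The source Delta table generally needs Change Data Feed enabled for syncing.

```python
def create_managed_delta_sync_index(client, source_table, index_name, *,
                                    endpoint_name="vector_search_test",
                                    primary_key="id",
                                    text_column="text",
                                    embedding_endpoint="databricks-gte-large-en"):
    """Create a Delta Sync index with Databricks-managed embeddings.

    `client` is expected to be a VectorSearchClient (or anything exposing the
    same create_delta_sync_index method). Column names and the embedding
    endpoint are assumptions for illustration.
    """
    return client.create_delta_sync_index(
        endpoint_name=endpoint_name,          # endpoint created by create_endpoint()
        index_name=index_name,                # e.g. catalog.schema.index_name
        source_table_name=source_table,       # Delta table holding the text chunks
        pipeline_type="TRIGGERED",            # sync on demand; "CONTINUOUS" also exists
        primary_key=primary_key,
        embedding_source_column=text_column,  # Databricks computes embeddings from this column
        embedding_model_endpoint_name=embedding_endpoint,
    )
```

In a Databricks notebook, you would pass the `vsc` client from the snippet above, for example `create_managed_delta_sync_index(vsc, "main.rag.doc_chunks", "main.rag.doc_chunks_index")`. The catalog, schema, and table names here are hypothetical.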

A Generative AI Engineer wants to build an LLM-based solution to help a restaurant improve its online customer experience with bookings by automatically handling common customer inquiries. The goal of the solution is to minimize escalations to human intervention and phone calls while maintaining a personalized interaction. To design the solution, the Generative AI Engineer needs to define the input data to the LLM and the task it should perform. Which input/output pair will support their goal?

Grouping chat logs by users and summarizing interactions is primarily an analytics or support-handoff task. It can help staff understand a customer’s history, but it does not directly drive an automated booking experience in the moment. Summaries don’t capture required structured booking fields (date/time/party size) and won’t inherently reduce escalations or phone calls as effectively as guided self-serve flows.

Using online chat logs as input supports real-time intent detection and personalized context. Outputting buttons/choices for booking details is a guided, structured interaction pattern that reduces ambiguity and keeps users on a self-service path. This design is well-suited for LLM apps that need high task completion (book/modify) with fewer failures, fewer clarifying turns, and fewer escalations.

Customer reviews as input with sentiment classification as output is valuable for measuring satisfaction and improving service, but it does not address the operational goal of handling booking inquiries automatically. It’s not a conversational workflow and won’t help capture booking parameters or complete reservations, so it won’t meaningfully reduce phone calls or human intervention for bookings.

Online chat logs with cancellation options as output addresses only one narrow scenario (cancellations). The problem statement is broader: “common customer inquiries” and improving booking experience end-to-end. A cancellation-only output doesn’t support booking creation/modification, answering FAQs, or collecting required details, so it won’t minimize escalations across typical booking interactions.

Problem Analysis

Core concept: This question tests “designing the LLM task and I/O” for an LLM-based assistant. In Databricks GenAI application design, you choose inputs that capture user intent and context (e.g., chat messages, reservation policy/availability, menu, hours) and outputs that either (a) directly answer or (b) drive the next best action via structured outputs/tool calls. The goal here is to reduce escalations and phone calls while keeping interactions personalized; this is classic conversational booking automation.

Why the answer is correct: Option B (Input: online chat logs; Output: buttons that represent choices for booking details) best supports an automated booking flow. Using chat logs (the user’s current conversation) as input lets the model infer intent (book, modify, cancel, ask about hours, party size, dietary needs). Producing buttons/choices is a form of structured, guided interaction that reduces ambiguity and keeps the model from repeatedly asking open-ended questions. It also minimizes errors (wrong date/time/party size) and keeps the user on a self-serve path, which directly reduces escalations and phone calls.

Key features / best practices: In Databricks, this aligns with building an agentic or RAG-enabled chat app where the LLM produces structured outputs (e.g., a JSON schema) that the UI renders as quick replies/buttons, or triggers tool/function calls (e.g., check availability, create reservation). Guardrails are easier to apply to constrained outputs: you can validate fields, enforce required booking slots, and keep the conversation on-policy. Personalization is still maintained by using the chat context plus the customer profile (if available) while presenting clear next-step options.

Common misconceptions: Summarizing chats (A) is useful for analytics or agent handoff, but it doesn’t directly automate booking interactions in real time. Sentiment classification (C) helps with reputation management, not booking automation. Cancellation options only (D) is too narrow and doesn’t cover the broader set of common inquiries.

Exam tips: When the prompt asks you to “define the input data to the LLM and the task it should perform,” pick the I/O that enables the end-to-end user journey. For automation and reduced escalation, prefer structured outputs (choices, forms, tool calls) over offline analytics tasks (summaries, clustering, sentiment).
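
To make the structured-output idea concrete, here is a minimal Python sketch of the validation step that could sit between the LLM and the chat UI. It assumes the LLM has been prompted to return JSON with `slots` and `choices` keys; that schema, `REQUIRED_SLOTS`, `QuickReply`, and `parse_booking_turn` are hypothetical names for illustration, not a Databricks API.

```python
import json
from dataclasses import dataclass

# Hypothetical slots a booking flow needs before a reservation tool can be called.
REQUIRED_SLOTS = ("date", "time", "party_size")


@dataclass
class QuickReply:
    """One button the chat UI would render."""
    label: str
    value: str


def parse_booking_turn(llm_output: str) -> tuple[list[QuickReply], list[str]]:
    """Validate a structured LLM reply and return (buttons, missing_slots).

    Assumes the LLM was prompted to emit JSON shaped like:
      {"slots": {"date": "...", ...}, "choices": [{"label": "...", "value": "..."}]}
    """
    data = json.loads(llm_output)
    slots = data.get("slots", {})
    # A slot counts as missing if it is absent or empty.
    missing = [name for name in REQUIRED_SLOTS if not slots.get(name)]
    buttons = [
        QuickReply(label=str(c["label"]), value=str(c["value"]))
        for c in data.get("choices", [])
        if "label" in c and "value" in c  # drop malformed choices instead of rendering them
    ]
    return buttons, missing


example = (
    '{"slots": {"date": "2024-06-01", "party_size": 4}, '
    '"choices": [{"label": "7:00 PM", "value": "19:00"}, {"label": "8:30 PM", "value": "20:30"}]}'
)
buttons, missing = parse_booking_turn(example)
print(missing)  # ['time']
print([b.label for b in buttons])  # ['7:00 PM', '8:30 PM']
```

Validating before rendering means a malformed choice is dropped rather than shown to the customer, and the `missing` list tells the application which slot to ask about next (or whether it can go ahead and call a reservation tool).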

What is an effective method to preprocess prompts using custom code before sending them to an LLM?

Incorrect. Directly modifying an LLM’s internal architecture is not a practical or supported approach for most enterprise use cases, especially when using hosted foundation models (e.g., via Databricks Model Serving or external APIs). Preprocessing is typically done outside the model through prompt templates, retrieval pipelines, or application code, not by changing the LLM weights/architecture.

Incorrect. Custom preprocessing is common and often necessary (templating, safety instructions, context injection, normalization). LLMs are designed to be steered via prompts; they do not require training examples of your exact preprocessed format to work. The key is to keep preprocessing consistent, deterministic where possible, and evaluated for quality and safety.

Incorrect. Postprocessing can help (format validation, JSON parsing, guardrails), but it does not replace preprocessing. Many outcomes depend on how the prompt is constructed (system instructions, retrieved context, constraints). Effective GenAI systems typically use both preprocessing (to guide generation) and postprocessing (to validate/shape outputs).

Correct. An MLflow PyFunc model can wrap custom prompt preprocessing logic and the LLM call into a single deployable unit. This enables versioning, reproducibility, and deployment via Databricks Model Serving or batch inference. Separating preprocessing into its own function within the PyFunc improves testability and maintainability while aligning with Databricks best practices for operationalizing GenAI pipelines.

Problem Analysis

Core Concept: This question tests how to implement prompt preprocessing in a production-ready way on Databricks, using MLflow model packaging patterns. Prompt preprocessing includes steps like templating, injecting retrieved context, enforcing formatting, redacting PII, adding system instructions, or normalizing user inputs before calling an LLM.

Why the Answer is Correct: Writing an MLflow PyFunc model with a dedicated prompt-processing function is an effective method because it encapsulates custom preprocessing logic and the LLM invocation behind a single, versioned model interface. In Databricks, MLflow is the standard mechanism for packaging, registering, and deploying models (including GenAI chains). A PyFunc wrapper can accept raw inputs, transform them into the final prompt (or messages), call the LLM endpoint, and return results, making the preprocessing reproducible, testable, and deployable across batch and real-time serving.

Key Features / Best Practices:
- Encapsulation: Preprocessing and inference live together, reducing drift between notebooks and serving.
- Versioning & governance: Register the PyFunc model in MLflow Model Registry/Unity Catalog for lineage, permissions, and controlled promotion.
- Portability: The same model can run in batch jobs, workflows, or Model Serving.
- Maintainability: Separate functions (e.g., preprocess(), predict()) improve readability and unit testing.
- Observability: MLflow can log parameters, artifacts, and evaluation results; serving logs can capture inputs/outputs (subject to governance).

Common Misconceptions: Some assume you must modify the LLM itself (not feasible with hosted foundation models) or that preprocessing is harmful because the model “wasn’t trained on it.” In reality, prompt engineering and structured templates are standard practice. Others think postprocessing alone is sufficient; while useful, it doesn’t replace the need to shape inputs for safety, grounding, and consistency.

Exam Tips: For Databricks GenAI deployment questions, look for answers that use MLflow (PyFunc, model registry, serving) to operationalize custom logic. If the question asks about adding custom code “before sending to an LLM,” the most Databricks-aligned approach is wrapping preprocessing + LLM call in an MLflow-deployable artifact rather than altering the model internals or relying only on postprocessing.
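
A minimal Python sketch of this pattern, assuming MLflow's `mlflow.pyfunc.PythonModel` base class (the import falls back to `object` so the sketch also runs without MLflow installed). The system prompt, the regex-based email redaction, and `call_llm` are hypothetical placeholders; a real deployment would call an actual LLM endpoint and use a proper PII-detection step.

```python
import re

try:
    # MLflow's base class for custom Python (PyFunc) models.
    from mlflow.pyfunc import PythonModel
except ImportError:  # lets this sketch run where MLflow is not installed
    PythonModel = object

SYSTEM_PROMPT = "You are a helpful assistant. Answer concisely."  # hypothetical instructions
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def call_llm(prompt: str) -> str:
    """Hypothetical placeholder for the real LLM call (e.g., a Model Serving endpoint)."""
    return f"[LLM response to {len(prompt)} chars of prompt]"


class PromptPreprocessingModel(PythonModel):
    """Wraps prompt preprocessing and the LLM call in one deployable unit."""

    def preprocess(self, user_input: str) -> str:
        text = " ".join(user_input.split())  # normalize whitespace
        text = EMAIL_RE.sub("[REDACTED_EMAIL]", text)  # naive PII redaction (illustrative only)
        return f"{SYSTEM_PROMPT}\n\nUser: {text}\nAssistant:"

    def predict(self, context, model_input, params=None):
        # Model Serving usually passes a pandas DataFrame; also accept a plain list here.
        if hasattr(model_input, "columns"):
            rows = model_input.iloc[:, 0].tolist()
        else:
            rows = list(model_input)
        return [call_llm(self.preprocess(str(r))) for r in rows]
```

Keeping `preprocess` as its own method means it can be unit-tested without calling any LLM. Once it behaves as expected, the class would typically be logged with `mlflow.pyfunc.log_model(..., python_model=PromptPreprocessingModel())`, registered in Unity Catalog, and served from a Model Serving endpoint; check the exact `log_model` arguments for the MLflow version in use.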

A Generative AI Engineer is developing an LLM application that users can use to generate personalized birthday poems based on their names. Which technique would be most effective in safeguarding the application, given the potential for malicious user inputs?

Correct. A safety filter (input moderation and/or prompt-injection detection) that blocks harmful content and triggers a refusal response directly mitigates the risk from malicious user prompts. It prevents unsafe requests from reaching the model and reduces the chance of generating disallowed content. This aligns with best-practice LLM guardrails: pre-processing, policy enforcement, and safe refusal behavior.

Incorrect. Reducing interaction time is a rate-limiting or UX constraint, not a content-safety control. Malicious prompts can be issued immediately, so time limits do not reliably prevent prompt injection, toxic content, or attempts to exfiltrate sensitive information. Rate limiting can be a supporting control, but it is not the most effective safeguard for harmful inputs.

Incorrect. Telling the user the input is malicious but continuing the conversation is risky because it still allows the model to generate harmful or policy-violating content. Attackers can iterate and refine prompts, and the model may comply despite warnings. Best practice is to refuse and stop or redirect to safe alternatives, not to continue engaging with malicious requests.

Incorrect. Increasing compute improves latency/throughput but does not improve safety. Faster processing can even amplify harm by enabling higher-volume abuse. Safety requires governance and guardrails (moderation, policy checks, least-privilege tool access, monitoring), not more hardware resources.

Problem Analysis

Core Concept: This question tests LLM application safety and governance controls for untrusted user input (prompt injection, toxic content, policy violations). In Databricks GenAI patterns, this maps to implementing guardrails: input/output moderation, policy enforcement, and safe refusal behavior.

Why the Answer is Correct: A safety filter that detects harmful inputs and returns a refusal is the most effective safeguard among the options because it directly mitigates the primary risk: malicious prompts that try to elicit disallowed content, exfiltrate secrets, or override system instructions. A well-designed filter can block or sanitize inputs before they reach the model and can also enforce consistent refusal messaging. This reduces the chance of the model generating harmful content and limits exposure to prompt-injection attempts.

Key Features / Best Practices: Effective safeguards typically include (1) input moderation (toxicity, self-harm, hate, sexual content, violence, illegal instructions), (2) prompt-injection detection (attempts to override system prompts, request secrets, or manipulate tools), and (3) output moderation to catch unsafe generations that slip through. In Databricks deployments, these controls are commonly implemented as pre/post-processing steps around Model Serving endpoints, with logging and monitoring for abuse patterns. A strong policy-driven refusal response (“I can’t help with that”) is preferred over engaging with malicious content. Defense-in-depth is the goal: filters + least-privilege access to tools/data + secrets isolation + monitoring.

Common Misconceptions: People sometimes think performance controls (time limits, more compute) improve safety, but they don’t address the content risk. Others assume the model can “handle it” by warning the user; however, continuing the conversation can still produce harmful outputs and can be exploited by iterative prompt attacks.

Exam Tips: When you see “malicious user inputs” in an LLM app question, look for guardrails: moderation filters, policy enforcement, safe refusal, and monitoring. Choose controls that prevent or block unsafe content rather than merely speeding up or limiting interaction time. Also remember that governance-oriented answers often involve enforcing usage policies and reducing risk at the boundary of the application (before/after the LLM call).
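
The input-filter, refuse-and-stop, output-filter flow described above can be sketched in Python as follows. A deliberately simple keyword/regex check stands in for a real moderation model or prompt-injection classifier; the patterns, `REFUSAL`, `is_unsafe`, and `guarded_generate` are hypothetical names used only for illustration.

```python
import re
from typing import Callable

REFUSAL = "Sorry, I can't help with that. I can only write birthday poems for a name you provide."

# Hypothetical, deliberately small pattern lists; real systems use moderation models.
INJECTION_PATTERNS = [
    r"ignore (all |any )?(previous|prior) instructions",
    r"(reveal|print|show) (your|the) (system prompt|instructions)",
]
BLOCKED_TERMS = ["kill", "bomb"]


def is_unsafe(text: str) -> bool:
    """Return True if the text matches an injection pattern or a blocked term."""
    lowered = text.lower()
    if any(re.search(p, lowered) for p in INJECTION_PATTERNS):
        return True
    # Word boundaries avoid false positives such as a name like "Killian".
    return any(re.search(rf"\b{re.escape(t)}\b", lowered) for t in BLOCKED_TERMS)


def guarded_generate(user_input: str, generate: Callable[[str], str]) -> str:
    """Filter the input, call the model only if it passes, then filter the output too."""
    if is_unsafe(user_input):
        return REFUSAL  # refuse and stop; the input never reaches the LLM
    output = generate(user_input)
    return REFUSAL if is_unsafe(output) else output
```

Passing the model call in as `generate` keeps the guardrail independent of any particular endpoint, and checking both sides of the call reflects the defense-in-depth point: the output check catches anything the input filter misses.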

Which indicator should be considered to evaluate the safety of the LLM outputs when qualitatively assessing LLM responses for a translation use case?

The ability to generate responses in code is not a safety indicator for a translation use case. Code generation is a capability metric relevant to developer or programming assistants, not to whether a translation is faithful, non-harmful, or policy-compliant. A model could generate code well and still produce unsafe or inaccurate translations.

Similarity to the previous language is vague and can be misleading. Translation quality is about semantic equivalence and intent preservation, not superficial similarity to the source language. A safe translation can differ significantly in wording or structure while remaining accurate. This option does not directly capture hallucinations, harmful additions, or meaning drift.

Latency and output length are important for user experience and cost/performance monitoring, but they do not measure safety. A fast response can be unsafe, and a slow response can be safe. Similarly, longer outputs might correlate with verbosity or hallucination risk, but length alone is not a reliable qualitative safety indicator for translation.

Accuracy and relevance best align with qualitative safety assessment for translation. Accuracy checks that meaning, tone, and intent are preserved (reducing risk of harmful mistranslation), while relevance checks that the model does not add extra content, opinions, or instructions that were not in the source. These are core signals for detecting hallucinations and unsafe deviations.

Problem Analysis

Core Concept: This question tests qualitative evaluation of LLM outputs for a translation use case, specifically the “safety” aspect. In practice (and in Databricks GenAI evaluation workflows), safety is assessed by reviewing whether outputs are appropriate, non-harmful, and aligned with the user intent and policy constraints. For translation, safety is tightly coupled with whether the model preserves meaning without introducing harmful, biased, or irrelevant content.

Why the Answer is Correct: Option D (accuracy and relevance) is the best indicator among the choices for evaluating safety in translation. A safe translation should be faithful to the source (accuracy) and should not add extraneous content (relevance). Many safety failures in translation manifest as hallucinated additions, altered intent, or mistranslations that introduce offensive language, sensitive personal data, or instructions that were not present in the original. Therefore, checking accuracy and relevance is a primary qualitative signal that the model is not fabricating or drifting into unsafe content.

Key Features / Best Practices: In Databricks-style evaluation, you typically combine human review and automated metrics. For translation, you might use reference-based metrics (e.g., BLEU/COMET) and LLM-as-a-judge rubrics that explicitly score faithfulness, meaning preservation, and harmful content. You also apply guardrails (prompting constraints, content filters, and policy checks) and monitor for regressions over time.

Common Misconceptions: “Similarity to the previous language” (B) sounds like it checks correctness, but it’s ambiguous and not a standard safety indicator; translation should be similar in meaning, not necessarily in surface form. Latency/length (C) are operational metrics, not safety. Code generation ability (A) is unrelated to translation safety.

Exam Tips: When asked about “safety” in qualitative assessment, look for options tied to correctness/faithfulness and avoidance of harmful or irrelevant content. For translation, prioritize meaning preservation and non-hallucination over performance metrics like latency.
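
For the LLM-as-a-judge part, here is a minimal Python sketch of a rubric that scores exactly the two indicators discussed above. The prompt wording, the 1 to 5 scale, the threshold of 4, and the function names are assumptions; the call to the judge model itself is left out, and MLflow's evaluation tooling may define its built-in judges differently.

```python
import json

JUDGE_TEMPLATE = """You are reviewing a translation for safety.
Source ({src_lang}): {source}
Translation ({tgt_lang}): {translation}

Score each criterion from 1 (poor) to 5 (excellent):
- accuracy: meaning, tone, and intent of the source are preserved
- relevance: nothing is added that is not in the source (no extra opinions, instructions, or facts)
Reply with JSON only: {{"accuracy": <int>, "relevance": <int>, "notes": "<short reason>"}}"""


def build_judge_prompt(source: str, translation: str, src_lang: str, tgt_lang: str) -> str:
    """Fill the rubric template; doubled braces above survive as literal JSON braces."""
    return JUDGE_TEMPLATE.format(
        source=source, translation=translation, src_lang=src_lang, tgt_lang=tgt_lang
    )


def is_safe_translation(judge_reply: str, threshold: int = 4) -> bool:
    """Treat a translation as safe only if both accuracy and relevance meet the threshold."""
    scores = json.loads(judge_reply)
    for key in ("accuracy", "relevance"):
        value = scores.get(key)
        if not isinstance(value, int) or not 1 <= value <= 5:
            return False  # malformed judge output is treated as a failure, not a pass
    return scores["accuracy"] >= threshold and scores["relevance"] >= threshold
```

Requiring both scores to pass mirrors the reasoning above: a fluent but inaccurate translation fails on accuracy, while a faithful one that adds unrequested advice fails on relevance.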

A Generative AI Engineer is developing a patient-facing healthcare-focused chatbot. If the patient’s question is not a medical emergency, the chatbot should solicit more information from the patient to pass to the doctor’s office and suggest a few relevant pre-approved medical articles for reading. If the patient’s question is urgent, the chatbot should direct the patient to call their local emergency services. Given the following user input: “I have been experiencing severe headaches and dizziness for the past two days.” Which response is most appropriate for the chatbot to generate?

Incorrect. Suggesting articles is appropriate only when the question is clearly not urgent and after the chatbot has gathered enough context to provide relevant, pre-approved resources. With severe headache and dizziness, routing to reading materials risks delaying care and violates the prompt’s requirement to escalate urgent cases to emergency services.

Correct. The scenario describes severe headaches and dizziness lasting two days, which can be a red-flag combination. The chatbot’s specification explicitly states that urgent questions must be directed to calling local emergency services. This is the safest response and aligns with healthcare guardrails and risk-minimizing conversational design.

Incorrect. While empathetic language can be part of a good user experience, it does not meet the functional requirement for urgent cases. It provides no actionable safety guidance and could be interpreted as reassurance, which is risky in healthcare contexts and contrary to best practices for high-stakes domains.

Incorrect. Asking for more details is the correct behavior only for non-emergency situations where the chatbot is collecting information to pass to a doctor’s office. In a potentially urgent scenario, follow-up questions can delay escalation. The safer design is to route immediately to emergency services guidance.
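
The routing rule in the chatbot specification can be sketched in Python as follows. Keyword matching on `RED_FLAGS` is a hypothetical stand-in used only to keep the example self-contained; it is not a clinical triage method, and a production system would rely on a validated classifier plus clinician-approved escalation rules. `BotReply`, `route`, and the message strings are likewise illustrative.

```python
from dataclasses import dataclass, field

EMERGENCY_MESSAGE = (
    "Your symptoms may need urgent attention. "
    "Please call your local emergency services now."
)

# Hypothetical red-flag terms; a real system would use a validated triage classifier.
RED_FLAGS = ("severe", "chest pain", "dizziness", "difficulty breathing", "fainting")


@dataclass
class BotReply:
    message: str
    follow_up_questions: list[str] = field(default_factory=list)
    articles: list[str] = field(default_factory=list)


def is_urgent(user_text: str) -> bool:
    lowered = user_text.lower()
    return any(flag in lowered for flag in RED_FLAGS)


def route(user_text: str, approved_articles: list[str]) -> BotReply:
    """Urgent -> emergency guidance only; otherwise gather details and suggest vetted reading."""
    if is_urgent(user_text):
        # No articles or follow-up questions: anything else could delay escalation.
        return BotReply(message=EMERGENCY_MESSAGE)
    return BotReply(
        message="Thanks for sharing. A few questions so we can pass details to your doctor's office:",
        follow_up_questions=["How long have you had this?", "Have you taken any medication for it?"],
        articles=approved_articles[:3],  # only pre-approved articles are ever suggested
    )
```

The key design choice is that the urgent branch returns early with emergency guidance only, which is why the correct answer for this input avoids follow-up questions and article suggestions.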

Problem Analysis