Practice Test #2

Simulate the real exam with 50 questions and a 45-minute time limit. Study with AI-verified answers and detailed explanations.

Triple AI-Verified Answers & Explanations

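Every answer is cross-checked by three leading AI models for the highest accuracy. Each answer option comes with a detailed explanation, and each question with an in-depth analysis.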

Practice Questions

You have a transactional application that stores data in an Azure SQL managed instance. When should you implement a read-only database replica?

HOTSPOT - For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

A Microsoft Power BI dashboard is associated with a single workspace.

A Microsoft Power BI dashboard can only display visualizations from a single dataset.

A Microsoft Power BI dashboard can display visualizations from a Microsoft Excel workbook.

HOTSPOT - To complete the sentence, select the appropriate option in the answer area. Hot Area:

A relational database must be used when ______

HOTSPOT - To complete the sentence, select the appropriate option in the answer area. Hot Area:

To configure an Azure Storage account to support both security at the folder level and atomic directory manipulation, ______

HOTSPOT - To complete the sentence, select the appropriate option in the answer area. Hot Area:

______ is an object associated with a table that sorts and stores the data rows in the table based on their key values.

Your company has a reporting solution that has paginated reports. The reports query a dimensional model in a data warehouse. Which type of processing does the reporting solution use?

DRAG DROP - Match the terms to the appropriate descriptions. To answer, drag the appropriate term from the column on the left to its description on the right. Each term may be used once, more than once, or not at all. NOTE: Each correct match is worth one point. Select and Place:

A database object that holds data

A database object whose content is defined by a query

A database object that helps improve the speed of data retrieval

HOTSPOT - To complete the sentence, select the appropriate option in the answer area. Hot Area:

A key/value data store is optimized for ______.

HOTSPOT - For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

The Azure Cosmos DB API is configured separately for each database in an Azure Cosmos DB account.

Partition keys are used in Azure Cosmos DB to optimize queries.

Items contained in the same Azure Cosmos DB logical partition can have different partition keys.

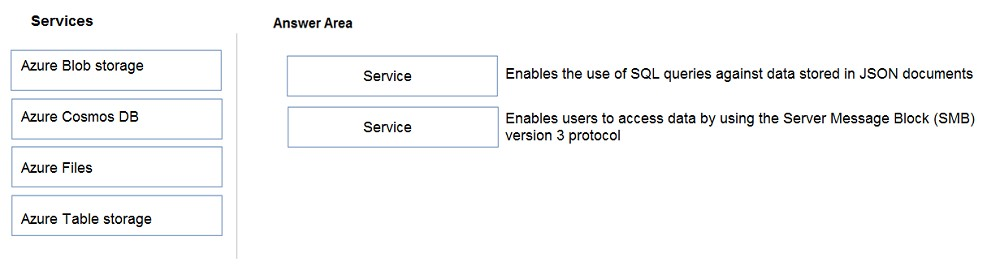

DRAG DROP - Match the datastore services to the appropriate descriptions. To answer, drag the appropriate service from the column on the left to its description on the right. Each service may be used once, more than once, or not at all. NOTE: Each correct match is worth one point. Select and Place:

Select the correct answer(s) in the image below.

アプリを入手