Practice Test #1

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Triple AI-Verified Answers & Explanations

Every answer is cross-verified by 3 leading AI models to ensure maximum accuracy. Get detailed per-option explanations and in-depth question analysis.

Practice Questions

You have an on-premises server that contains a folder named D:\Folder1. You need to copy the contents of D:\Folder1 to the public container in an Azure Storage account named contosodata. Which command should you run?

You have an Azure Active Directory (Azure AD) tenant that contains 5,000 user accounts. You create a new user account named AdminUser1. You need to assign the User administrator administrative role to AdminUser1. What should you do from the user account properties?

You plan to automate the deployment of a virtual machine scale set that uses the Windows Server 2016 Datacenter image. You need to ensure that when the scale set virtual machines are provisioned, they have web server components installed. Which two actions should you perform? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a computer named Computer1 that has a point-to-site VPN connection to an Azure virtual network named VNet1. The point-to-site connection uses a self-signed certificate. From Azure, you download and install the VPN client configuration package on a computer named Computer2. You need to ensure that you can establish a point-to-site VPN connection to VNet1 from Computer2.

Solution: You modify the Azure Active Directory (Azure AD) authentication policies.

Does this meet the goal?

You plan to create an Azure virtual machine named VM1 that will be configured as shown in the following exhibit.

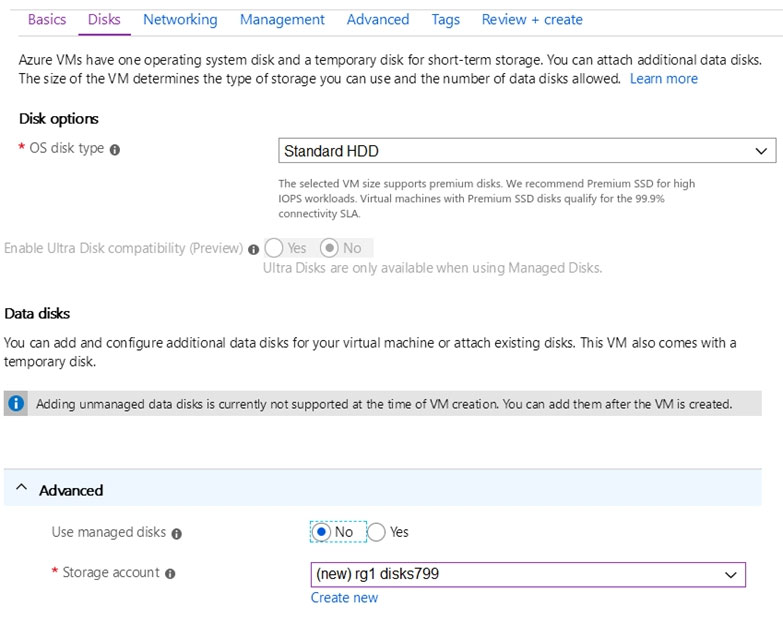

The planned disk configurations for VM1 are shown in the following exhibit.

You need to ensure that VM1 can be created in an Availability Zone. Which two settings should you modify? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

Select the correct answer(s) in the image below.

Select the subscription to manage deployed resources and costs. Use resource groups like folders to organize and manage all your resources.

Subscription ______

Resource group ______

Virtual machine name ______

Region ______

Availability options ______

Image ______

Azure Spot instance ______

You have an Azure subscription. You have 100 Azure virtual machines. You need to quickly identify underutilized virtual machines that can have their service tier changed to a less expensive offering. Which blade should you use?

You plan to deploy three Azure virtual machines named VM1, VM2, and VM3. The virtual machines will host a web app named App1. You need to ensure that at least two virtual machines are available if a single Azure datacenter becomes unavailable. What should you deploy?

HOTSPOT - You have an Azure subscription named Subscription1. Subscription1 contains the resources in the following table.

| Name | Type |
| --- | --- |
| RG1 | Resource group |
| RG2 | Resource group |
| VNet1 | Virtual network |
| VNet2 | Virtual network |

VNet1 is in RG1. VNet2 is in RG2. There is no connectivity between VNet1 and VNet2. An administrator named Admin1 creates an Azure virtual machine named VM1 in RG1. VM1 uses a disk named Disk1 and connects to VNet1. Admin1 then installs a custom application in VM1.

You need to move the custom application to VNet2. The solution must minimize administrative effort. Which two actions should you perform? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

First action: ______

Second action: ______

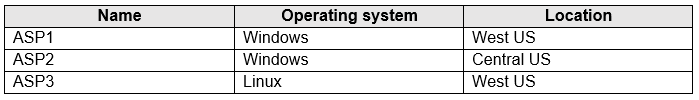

HOTSPOT - You have the App Service plans shown in the following table.

You plan to create the Azure web apps shown in the following table.

| Name | Runtime stack | Location |
| --- | --- | --- |
| WebApp1 | .NET Core 3.0 | West US |
| WebApp2 | ASP.NET 4.7 | West US |

You need to identify which App Service plans can be used for the web apps. What should you identify? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

WebApp1: ______

WebApp2: ______

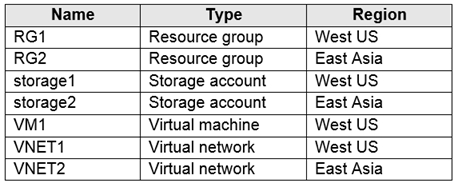

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have an Azure subscription that contains the resources shown in the following table.

VM1 connects to VNET1. You need to connect VM1 to VNET2.

Solution: You move VM1 to RG2, and then you add a new network interface to VM1.

Does this meet the goal?
